Get to Know Splunk Machine Learning Environment (SMLE)

By John Reed

Meet the Splunk Machine Learning Environment (SMLE). SMLE is a purpose-built environment that brings data science and machine learning to production workloads for our Splunk customers. It supports the end-to-end ML journey, covering development, deployment, monitoring, and management, and replaces disjointed solutions with a single streamlined experience optimized for productivity.

Across almost every industry, data scientists are playing an increasingly key role in customers’ IT, Security, and DevOps ecosystems, so we tackled that head-on with SMLE. SMLE includes all of the tools enabling data scientists to be productive, while at the same time enabling our community of Splunk admins, data analysts, app users, and SPL experts to explore data in an interactive, iterative manner. Coupled with a robust and growing set of MLOps capabilities, we are putting the power of ML in the hands of Splunk users.

Leveraging the cloud remains one of Splunk’s top priorities as we help our customers along their digital transformation journeys. Accordingly, it was a natural fit that the first iteration of SMLE be delivered as a cloud offering. We call it SMLE Labs.

Beta Program Updates

Since our announcement at .conf20, we have seen tremendous participation in the SMLE Labs beta program. Hundreds of users have tested the product, some running 1000+ models in parallel against tens of millions of rows of data without breaking a sweat. Beyond just putting SMLE Labs to the test, our beta customers are providing valuable feedback which feeds straight into our roadmap. Model management, supported libraries, UI, and documentation are just a few of the areas where SMLE is improving thanks to our engaged and active customers.

To extend the reach of SMLE Labs to all Splunk users, we are looking forward to launching a Customer Advisory Board in February to share our progress and learn from you. This is a great opportunity to connect directly with the product and engineering teams at Splunk and let your voice be heard. Check out the link at the end of this blog for more information on how to sign up.

SMLE Labs Walkthrough

Now that you know a little about SMLE Labs, the rest of this blog showcases its capabilities at a high level by walking you through the environment, step by step. SMLE Labs includes two powerful experiences. The first is SMLE Studio, a native Jupyter notebook environment where you can train custom ML models, experiment with built-in Streaming ML capabilities, or build sophisticated SPL pipelines right in the Splunk ecosystem. The second is a set of operationalization capabilities in the SMLE Labs console that simplify deploying, monitoring, and managing your models and pipelines.

Opening SMLE Labs, you land on the console dashboard page (above left) where you have quick links to your recently opened notebooks as well as helpful metrics on your most recently published models and deployed pipelines. You can navigate throughout the SMLE Labs environment, or you can dive into SMLE Studio (above right) to start experimenting and building.

SMLE Studio offers you a variety of capabilities in a unique and powerful package. First, you can leverage the flexibility of Jupyter notebooks to experiment and develop custom pipelines, including popular use cases like ML, advanced analytics, or security. Second, you can take advantage of the most common frameworks like TensorFlow, PyTorch, and scikit-learn to train custom models in a familiar way. Third, you can embed pre-trained Streaming ML algorithms with a single command, turning a basic query into an ML-powered pipeline. Finally, you can combine Python and R with SPL, bringing ML directly into your Splunk environment.

Our sample notebooks are a great place to get started with each of these capabilities, with plenty of end-to-end examples. You can train a model, test out a pipeline, or familiarize yourself with the built-in Streaming ML algorithms, all in pre-configured tutorials. You can borrow bits and pieces for your own use cases, or you can start from scratch.

As an example, here is one sample notebook that walks you through building a TensorFlow model using Python and SPL2. You can use this notebook as a starting point to build your own custom model, injecting your own code and business logic. All of the logic to pull training data, experiment, and even publish the model is built right in to help you jump-start your project.

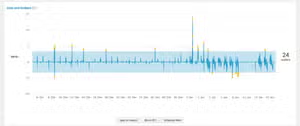

With SMLE, you can not only train your own models but also use any of our built-in Streaming ML algorithms right inside your notebook. This notebook explores one of our Streaming ML models, drift detection, and then visualizes the output on a graph. What’s unique about this example is that the results from the SPL query are passed directly into a Python object, where they are then graphed with an imported library.
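To make the idea of drift detection concrete, here is a minimal sketch in plain Python. It is not SMLE's built-in algorithm or API: it uses the Page-Hinkley test, a well-known change detector swapped in purely for illustration, and the names `PageHinkley` and `first_drift_index`, along with the default `delta` and `threshold` values, are assumptions made for this example. The input list stands in for values that a query might return.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class PageHinkley:
    """Illustrative Page-Hinkley detector for an upward shift in the mean.

    Hypothetical helper for this post; not part of SMLE.
    """
    delta: float = 0.05      # tolerated deviation before evidence accumulates
    threshold: float = 5.0   # how much accumulated evidence signals drift
    count: int = 0
    mean: float = 0.0
    cumulative: float = 0.0  # running sum of (value - mean - delta)
    minimum: float = 0.0     # smallest cumulative sum seen so far

    def update(self, value: float) -> bool:
        """Consume one value and return True if drift is detected."""
        self.count += 1
        # Incremental running mean of everything seen so far.
        self.mean += (value - self.mean) / self.count
        self.cumulative += value - self.mean - self.delta
        self.minimum = min(self.minimum, self.cumulative)
        return self.cumulative - self.minimum > self.threshold


def first_drift_index(values: Iterable[float],
                      detector: Optional[PageHinkley] = None) -> Optional[int]:
    """Return the index of the first value that triggers drift, or None."""
    detector = detector or PageHinkley()
    for index, value in enumerate(values):
        if detector.update(value):
            return index
    return None


if __name__ == "__main__":
    # Synthetic stand-in for query results: a stable level, then a jump.
    stream = [1.0] * 50 + [3.0] * 50
    print("drift detected at index:", first_drift_index(stream))
```

A detector like this processes one value at a time and keeps only a few running totals, which is what makes the approach suitable for streaming data. The returned index could then be marked on a plot of the series using whichever graphing library you import in the notebook.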

Remember that SMLE Studio is just one part of the SMLE experience. Once you have built your models, deploying, managing, and monitoring them has historically been a challenge. We simplified many of these MLOps tasks with powerful dashboards, monitoring, and an easy-to-use UI for deploying and running the content you create.

Stepping out of SMLE Studio, the Model Management page in the SMLE Labs console lists all the models that you have published, with your most recently published models at the top. You can open a model to see additional details and metadata (like expected input and output fields), delete it, or choose “run” to deploy it.

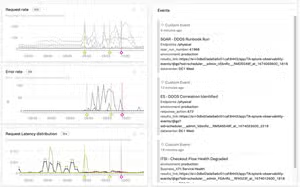

Next, you will want to see how your deployed model is doing. You can go straight to the monitoring page to keep an eye on your model run, or you can view aggregate-level metrics to see important information related to your environment, plan for capacity, and more.

In just a few screens, you were able to train, deploy, and monitor a custom model while exploring the many capabilities in SMLE Labs. And for all our dark-mode fans, you can switch seamlessly between light and dark mode throughout SMLE Labs and SMLE Studio.

Get Started

With support for any combination of Python, R, and SPL2, SMLE brings ML and data science into the Splunk search and query ecosystem. By developing their ML pipelines with SMLE Labs, Splunk users are getting to production 3-5x faster than using external environments, shifting from iteration to operation faster than ever.

While we are currently at capacity for our beta program, by signing up you will have the opportunity to join our Customer Advisory Board, as well as be the first to hear about product announcements and updates. We are excited to bring SMLE to all of you, and we are just getting started!

Resources

- Interested in SMLE? Sign up for our Customer Advisory Board and interest list

- Machine Learning Guide: Choosing the Right Workflow

- Using SMLE and Streaming ML to Detect Anomalies

- SMLE Product Brief

This article was co-authored by John Reed, Principal Product Manager for Machine Learning, and Mohan Rajagopalan, Senior Director of Product Management for Machine Learning.
