What is Splunk Virtual Compute (SVC)?

By Samir Virani

Organizations today are experiencing a rapid increase not only in data volume but also in the diversity of use cases for which data is used. In such environments, a monolithic, one-size-fits-all approach to pricing may not always scale with the value created by the data platform. To address this growing challenge, Splunk Cloud Platform gives you the flexibility to choose from two pricing options aimed at optimizing value for your specific use cases: Ingest Pricing and Workload Pricing.

How Should an Organization Choose Between Ingest and Workload Pricing?

Ingest Pricing is a volume-based approach that scales with the amount of data brought into Splunk Cloud Platform per day, whereas Workload Pricing scales with capacity purchased in anticipation of the resources needed for search and analytics processing of your data. Ingest Pricing is therefore ideal for companies whose data sets are discrete and who understand their data volume and search patterns in advance. For example, if you expect to search your data extensively, Ingest Pricing is a suitable approach, because the price follows the volume ingested rather than the amount of search activity.

On the other hand, Workload Pricing gives you the flexibility to bring more data into Splunk Cloud Platform without worrying about high costs driven by large volumes of data that have low reporting requirements. For example, if you expect that a large portion of your data will be searched infrequently, but you still want the ability to correlate it with other data in your platform, Workload Pricing is the right option. This model is also ideal for organizations that are looking for the “option value” of putting data into the platform with no clear search expectation.

Let us take a closer look at the components of Workload Pricing, what SVCs are, and how to size, monitor, and manage workloads as your data requirements evolve with Splunk.

The Workload-Based Pricing Components of Splunk Cloud Platform

In the Workload Pricing model, the price you pay is a function of the resources needed to drive the workloads you want to accomplish, as well as the storage needed for the data you want to analyze:

- Splunk Virtual Compute (SVCs): The compute, input/output, and memory resources required to support search and ingest workloads

- Storage Blocks: The number of terabytes of storage required to capture and to retain your data for the required amount of time (your data retention policies)

More About SVC

Splunk Virtual Compute (SVC) is a unit of compute and related resources that provides a consistent level of search and ingest equal to the SVC performance benchmark. It is based on two major parts of the Splunk Cloud Platform: Indexers and Search Heads.

Examples of workloads are compliance storage, basic reporting, and continuous monitoring. To power these workloads, SVCs utilize two primary factors: search and ingest. Each workload has its own profile, which can be classified anywhere between high-ingest/low-search and low-ingest/high-search. See the descriptions and graphic below for some of the most popular workload profiles:

Compliance storage: Storage management for compliance is the process of ensuring that an organization's storage systems and data are fully protected in accordance with the organization's security requirements. The primary purpose is to retain data for compliance purposes, i.e., to have it available in case the need for future analysis or investigation arises. Compliance storage use cases assume very little search and therefore have low workload requirements for each GB processed.

Data lake: A data lake is a flexible storage repository that can hold raw structured and unstructured data. The intent of a data lake use case is to have data from various sources available and make them accessible as needed. Access is assumed to be infrequent but more frequent than data stored for compliance reasons. Workload requirements are still relatively low but higher than for compliance storage.

Basic reporting: The process of collecting and formatting raw data and translating it into a digestible format to assess organizational performance. This data is searched infrequently and is used for fixed, scheduled reporting and/or view-only dashboards. Therefore, basic reporting use cases have medium workload requirements per GB processed.

Ad-hoc investigation: Ad-hoc investigation is performed by business users on an as-needed basis to address data analysis needs that are not met by the business's static, regular reporting. End users engage in ad-hoc investigation when specific insights need to be extracted. Search volume is relatively high and less predictable, leading to higher workload requirements per GB processed.

Continuous monitoring: Continuous monitoring provides real-time visibility into an organization's IT environment. Continuous monitoring is characterized by scheduled and frequent searches of specific data sets. This drives very high search activity, is the most workload intensive use case, and requires the largest amount of resourcing per GB of data volume monitored.

More About Storage Blocks

Storage Blocks form the second part of the Workload Pricing equation. The amount of storage space needed is driven by your data volume and how long it is stored for. Splunk Cloud Platform offers the following storage types in 500 GB blocks to account for a diverse set of use cases and retention schemes:

- DDAS, Dynamic Data Active Searchable: readily searchable data in Splunk Cloud.

- DDAA, Dynamic Data Active Archive: Splunk-managed data archive that automates the rehydrating of data back into Splunk Cloud.

See this blog, Dynamic Data: Data Retention Options in Splunk Cloud, to learn more about DDAA and DDSS.
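As a rough illustration of how data volume and retention translate into 500 GB blocks, here is a minimal Python sketch. The function name `storage_blocks` and the example figures are hypothetical, and the sketch assumes that stored size equals ingested size, ignoring any compression or overhead, so treat the result as a back-of-the-envelope estimate only.

```python
import math

BLOCK_SIZE_GB = 500  # block size stated in the text


def storage_blocks(daily_gb: float, retention_days: int) -> int:
    """Estimate how many 500 GB blocks are needed for retained data.

    Assumption (not from the source): stored size equals ingested size,
    i.e. no compression or storage overhead is modelled.
    """
    if daily_gb < 0 or retention_days < 0:
        raise ValueError("daily_gb and retention_days must be non-negative")
    total_gb = daily_gb * retention_days
    # Blocks are purchased whole, so round up.
    return math.ceil(total_gb / BLOCK_SIZE_GB)


# Hypothetical example: 100 GB/day retained for 90 days is 9000 GB.
print(storage_blocks(100, 90))  # 18
```

Rounding up reflects that storage is offered in whole blocks; your Splunk account team can provide an estimate that reflects how your data is actually stored.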

How is SVC Utilization Measured?

SVC utilization measurements for each machine are captured every few seconds. SVC usage for your Splunk Cloud Platform environment is calculated by aggregating these utilization measurements across all the search tiers and index tiers for each hour. You can view your hourly SVC consumption anytime on the Cloud Monitoring Console (CMC).
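To make the idea of hourly aggregation concrete, here is a small Python sketch. The text does not say exactly how the per-machine measurements are combined, so this sketch assumes one plausible scheme: average each machine's samples within the hour, then sum those averages across machines. The function name `hourly_svc_usage` and the sample data are hypothetical, and this is not the calculation the CMC performs.

```python
from collections import defaultdict
from statistics import mean


def hourly_svc_usage(samples):
    """Aggregate (epoch_seconds, machine, utilization) samples per hour.

    Assumed scheme (not confirmed by the source): take the mean of each
    machine's samples within the hour, then sum the means across machines.
    Returns a dict mapping the hour's starting epoch second to the total.
    """
    per_machine_hour = defaultdict(list)
    for timestamp, machine, utilization in samples:
        hour_start = timestamp - timestamp % 3600
        per_machine_hour[(hour_start, machine)].append(utilization)

    usage = defaultdict(float)
    for (hour_start, _machine), values in per_machine_hour.items():
        usage[hour_start] += mean(values)
    return dict(usage)


# Hypothetical samples from one indexer and one search head.
samples = [
    (0, "indexer-1", 2.0),
    (10, "indexer-1", 4.0),
    (5, "search-head-1", 1.0),
    (3600, "indexer-1", 3.0),
]
print(hourly_svc_usage(samples))  # {0: 4.0, 3600: 3.0}
```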

SVC Sizing: How Many Do I Need?

The total number of SVCs you need equals the maximum compute, I/O, and memory resources used during your peak window of usage.

Many organizations have demand spikes on the platform throughout the day, which can be described as a period of very high usage followed by a long period of low usage. For example, the minute after midnight tends to see a demand spike, because daily, hourly, and minute-by-minute searches often overlap at that time, as in the image below.

These spikes can be managed by spreading out any workloads that do not need to overlap. Other factors that drive workload can also be optimized, such as search usage, apps, and the number of users. When your sustained workload consistently approaches your allotted number of SVCs throughout the day, this is an indicator that you need more SVCs to handle that load.
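The effect of spreading out scheduled searches can be sketched in Python. This example uses the number of searches running in the same minute as a stand-in for load, which is a simplifying assumption; real SVC demand also depends on what each search does. The function name `peak_concurrency`, the twelve searches, and the five-minute runtime are all hypothetical.

```python
from collections import Counter

MINUTES_PER_DAY = 1440


def peak_concurrency(start_minutes, duration_minutes=1):
    """Return the largest number of searches running in any one minute.

    start_minutes: minute-of-day at which each scheduled search starts.
    duration_minutes: assumed runtime of every search (hypothetical).
    """
    load = Counter()
    for start in start_minutes:
        for minute in range(start, start + duration_minutes):
            load[minute % MINUTES_PER_DAY] += 1
    return max(load.values(), default=0)


# Twelve daily searches that all start at midnight and run for 5 minutes.
clustered = [0] * 12
# The same searches staggered 5 minutes apart.
staggered = [i * 5 for i in range(12)]

print(peak_concurrency(clustered, 5))  # 12
print(peak_concurrency(staggered, 5))  # 1
```

The total work is the same in both schedules; only the peak changes, and the peak is what drives the number of SVCs needed.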

Workload Pricing and Sizing for New Customers

Ingest Pricing is based on data volume only (single dimension) and can therefore be a simple and easy approach to get started on the Splunk Cloud Platform.

Workload Pricing is two-dimensional (based on data volume and search intensity) and inherently requires a more granular understanding of your needs. However, it can be more economical if your workload profile includes use cases with lower search activity.

If you are new to Splunk, we recommend that you begin by identifying your anticipated workloads and the expected daily data volume for each workload. Remember, each profile is based on two primary factors: search and ingest. The combination of those factors is what drives your SVC usage. Based on historical patterns of existing SVC customers, your search profile will likely be the biggest driver of SVC usage. The table below shows the types of searches that customers execute for different use cases and the ingest volume range in relation to the SVCs needed to accomplish each. We included ranges because they are based on usage statistics of a cohort of customers, rather than a single data point. SVC usage may vary based on the complexity of the data ingested and searches executed.

Estimated GB/day per SVC range by use case (based on usage statistics of multiple customers)

Premium Solution - ES or ITSI (High workload)

You can use these example ranges as approximate guidelines for sizing your SVC needs. Your Splunk sales team can help you estimate the appropriate GB/day per SVC for your workloads. We use these estimates to help size your SVC purchase. As an example, if your primary use case is compliance storage, you may bring in around 35 to 45 GB/day per SVC.
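The arithmetic behind a GB/day-per-SVC range can be sketched in Python. The 35 to 45 GB/day figure is the compliance storage example from the text; the 900 GB/day ingest volume and the function name `svc_range` are hypothetical, and this is not the Splunk sizing calculator.

```python
import math


def svc_range(daily_gb, gb_per_svc_low, gb_per_svc_high):
    """Return (fewest, most) SVCs implied by a GB/day-per-SVC range.

    A higher GB/day per SVC means each SVC handles more data, so the
    high end of the range gives the smaller SVC count.
    """
    if not 0 < gb_per_svc_low <= gb_per_svc_high:
        raise ValueError("expected 0 < gb_per_svc_low <= gb_per_svc_high")
    fewest = math.ceil(daily_gb / gb_per_svc_high)
    most = math.ceil(daily_gb / gb_per_svc_low)
    return fewest, most


# Hypothetical: 900 GB/day of compliance storage data at 35 to 45 GB/day per SVC.
print(svc_range(900, 35, 45))  # (20, 26)
```

A deployment with several workload profiles could apply the same calculation per profile and add the results, though actual usage may vary with the complexity of the data and searches.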

You can use the Splunk sizing calculator to estimate the number of SVCs you will need based on how efficiently you believe you can operate Splunk. While the Splunk sizing calculator gives you a good estimate on the number of SVCs you will need, we recommend connecting with your Splunk account team for additional details.

Existing Splunk Enterprise Customer Sizing

For existing on-premises or bring-your-own-license (BYOL) Splunk Enterprise customers, the Splunk Cloud Migration Assessment App will help you collect data points and automate your assessment. This is extremely useful if you already have a Splunk deployment that is addressing several different use cases.

Existing Splunk Cloud Platform Ingest Customer Sizing

Existing Splunk Cloud Platform ingest customers looking to migrate to workload can work directly with their Splunk account team. Splunk Cloud Platform already has all of the metrics needed to assist your team with the recommendation.

To Sum It Up

Ingest Pricing scales with the volume of data ingested into the platform and is suitable for organizations with predictable data usage needs. Workload Pricing is aligned with the compute, I/O, and memory resources used for processing data. With Workload Pricing, organizations have the flexibility to ingest much more data upfront and can explore different use cases, with complete monitoring of usage through the CMC.

Read Workload Pricing and SVCs: What You Can See and Control for additional details on what you can see and control in the CMC.

*This blog was originally published on September 13, 2021; last updated on May 26, 2023.

Related Articles

Improving Security: Updates to Classic (SimpleXML) Dashboards Containing External Links or Content

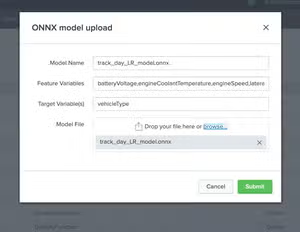

Bring More ML to Splunk: Inference Externally Trained ONNX Models in MLTK 5.4.0