Splunk and AWS: Monitoring Metrics in a Serverless World

Bill Bartlett (fellow Splunker) and I had the distinct pleasure of moving some workloads from AWS EC2 over to a combo of AWS Lambda and AWS API Gateway. Between the dramatic cost savings and the wonderful experience of not managing a server, making this move was a no-brainer (facilitated as well by great frameworks like Zappa). Both services are pretty robust, and while perhaps not perfect, to us they are a beautiful thing.

While we were using Splunk to monitor several EC2 servers with various bits of custom code via the Splunk App and Add-On for AWS, we realized (ex post facto) that while Lambda was supported out of the box by the Add-On, API Gateway was not. What is an SE to do?! Much like AWS services, Splunk Add-Ons are also a thing of beauty that make the process of gathering and taming your data very simple. The Splunk Add-On for AWS is no exception. In fact, it’s very feature-rich, seamlessly supporting data collection from new AWS services. The rest of the blog will detail this short journey, and hopefully help you integrate Splunk into your AWS “serverless” infrastructure.

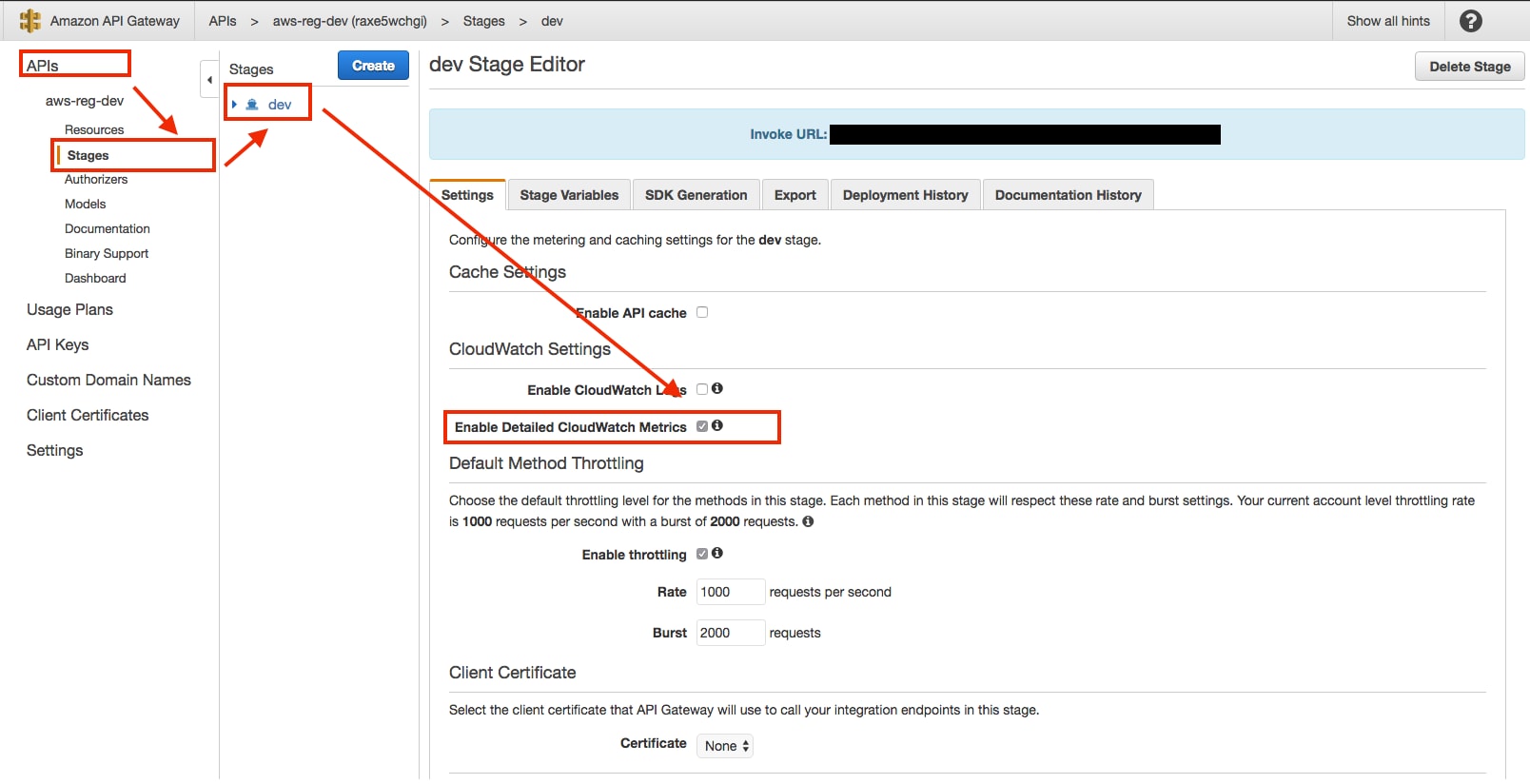

First, we will need a quick primer on how to set this up on the AWS side so the Add-On has something to gather. In the AWS console, go to:

“Services” > “API Gateway” > select the API you want to turn on metrics for > go to the “stage” sub-menu of that API > select the stage you want to add metrics to > check the box next to “Enable Detailed CloudWatch Metrics”.

Repeat this step for any API and associated “stages” you see fit.

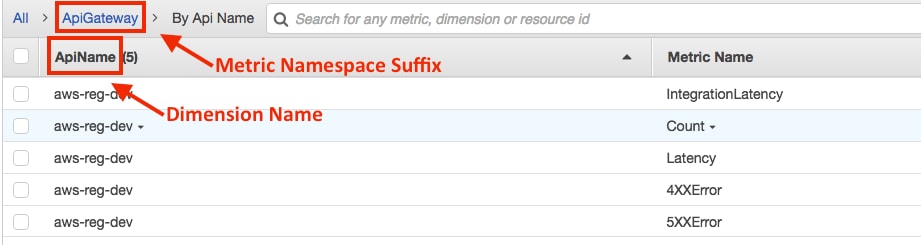
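If you have many APIs and stages, the same checkbox can be flipped from a script. The following is a minimal sketch, assuming a boto3 API Gateway client and its `update_stage` call with the `/*/*/metrics/enabled` patch path; the helper names, the API ID, and the stage name are all hypothetical and not part of the original walkthrough.

```python
from typing import Any, Dict, List


def detailed_metrics_patch(enabled: bool = True) -> List[Dict[str, str]]:
    """Build the patch operation that toggles detailed CloudWatch metrics
    for every resource and method ("/*/*") in a stage."""
    return [
        {
            "op": "replace",
            "path": "/*/*/metrics/enabled",
            "value": "true" if enabled else "false",
        }
    ]


def enable_detailed_metrics(client: Any, rest_api_id: str, stage_name: str) -> Any:
    """Apply the patch to one stage.

    `client` is assumed to be a boto3 API Gateway client,
    for example boto3.client("apigateway").
    """
    return client.update_stage(
        restApiId=rest_api_id,
        stageName=stage_name,
        patchOperations=detailed_metrics_patch(True),
    )


# Example usage (hypothetical API ID and stage; needs boto3 and AWS credentials):
#   import boto3
#   enable_detailed_metrics(boto3.client("apigateway"), "abc123", "production")
```

Passing the client in keeps the helper easy to try against a stub before pointing it at a real account.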

Next we will want to review the various naming conventions that CloudWatch uses so we can properly configure things in the Splunk add-on. In your AWS console go to:

“Services” > “CloudWatch” > “Metrics” > you should be on the “All Metrics” tab > scroll to the “AWS Namespaces” section > select “ApiGateway” > select “By Api Name”.

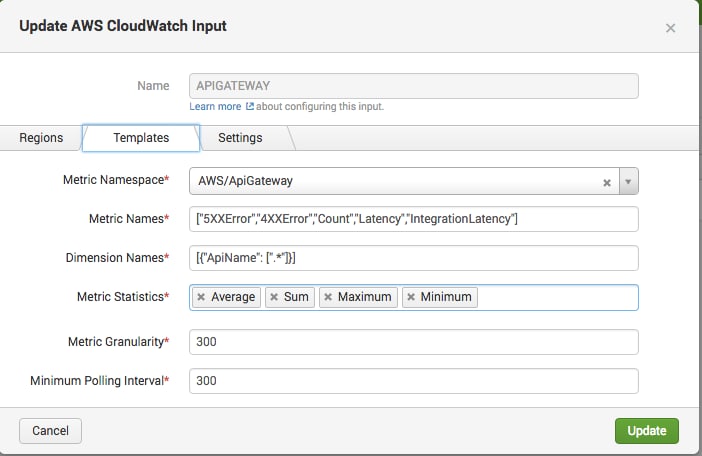

The “ApiName” field is going to be our “Dimension Names” value. Note that the syntax is similar to an array of JSON objects – with a regular expression sprinkled in (give me metrics for all my APIs by “name” of the API):

[{"ApiName": [".*"]}]

The “Metric Name” will be those metric names we saw in the AWS console, formatted as an array:

["5XXError","4XXError","Count","Latency","IntegrationLatency"]

For the “Metric Namespace”, we can just use the namespace as provided by Amazon, specifically:

“AWS/ApiGateway”

Note that this is not part of the out of the box list provided by the add-on, but because the Splunk add-on is awesome, we can add new namespaces with a little configuration, and it will know how to collect metrics on them.

The “Metric Statistics” you can just grab from the picklist (they are fairly universal across the different namespaces). I personally like having:

Average, Sum, Maximum and Minimum
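Since the “Dimension Names” and “Metric Name” fields are JSON-like, it can help to generate and sanity-check the strings before pasting them in. This is a small sketch using only the Python standard library; the variable and function names are made up for illustration, and it assumes the fields need to parse as plain JSON (which means straight quotes, not the curly ones a word processor may insert).

```python
import json

# Values from the walkthrough above.
dimension_names = [{"ApiName": [".*"]}]
metric_names = ["5XXError", "4XXError", "Count", "Latency", "IntegrationLatency"]
metric_namespace = "AWS/ApiGateway"
statistics = ["Average", "Sum", "Maximum", "Minimum"]

# json.dumps always emits straight double quotes.
dimension_field = json.dumps(dimension_names)
metric_field = json.dumps(metric_names)


def is_valid_json(text: str) -> bool:
    """Return True if the text parses as JSON, False otherwise."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


print(dimension_field)  # [{"ApiName": [".*"]}]
print(metric_field)     # ["5XXError", "4XXError", "Count", "Latency", "IntegrationLatency"]

# Curly quotes copied from a web page or document do not parse.
print(is_valid_json("[{\u201cApiName\u201d: [\u201c.*\u201d]}]"))  # False
```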

In the Splunk Add-On UI, you should have something similar to the below.

The rest of the fields are up to you, but generally speaking, using 300 for “Metric Granularity” and “Minimum Polling Interval” should be sufficient for your first go (and you can adjust as needed). Please reference the Splunk documentation for what all these things mean in detail.

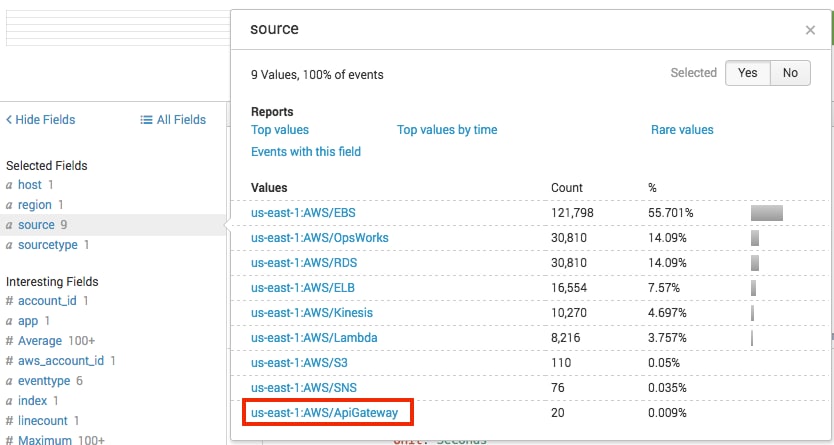

Now that both AWS and the Add-On are configured, we can start looking at what data is flowing in. It will be helpful to understand how the AWS add-on classifies the various data, which is informed by how we configured the input. Open a search page on your Splunk instance and type in the following:

earliest=-60m sourcetype=aws:cloudwatch

You should see some data, and if you’ve been using the Add-On previously, then likely we have more than just ApiGateway data from CloudWatch. This is where our “source” key becomes very helpful, as the Add-On does the heavy lifting of assigning an intuitive name for source.

The convention is typically <aws region>:<metric namespace>, so when exploring the data, you can narrow your searches very quickly by simply using a search such as this:

source="*:AWS/ApiGateway"

This should return CloudWatch metric data across all regions for ApiGateway. You should see something similar to the screenshot below after a few minutes of the input running.

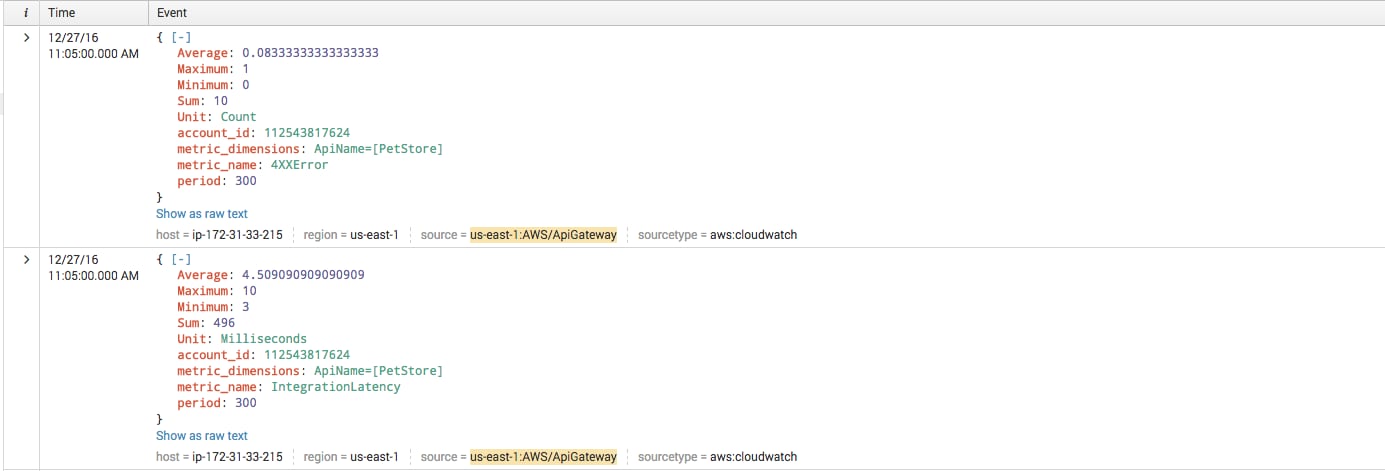

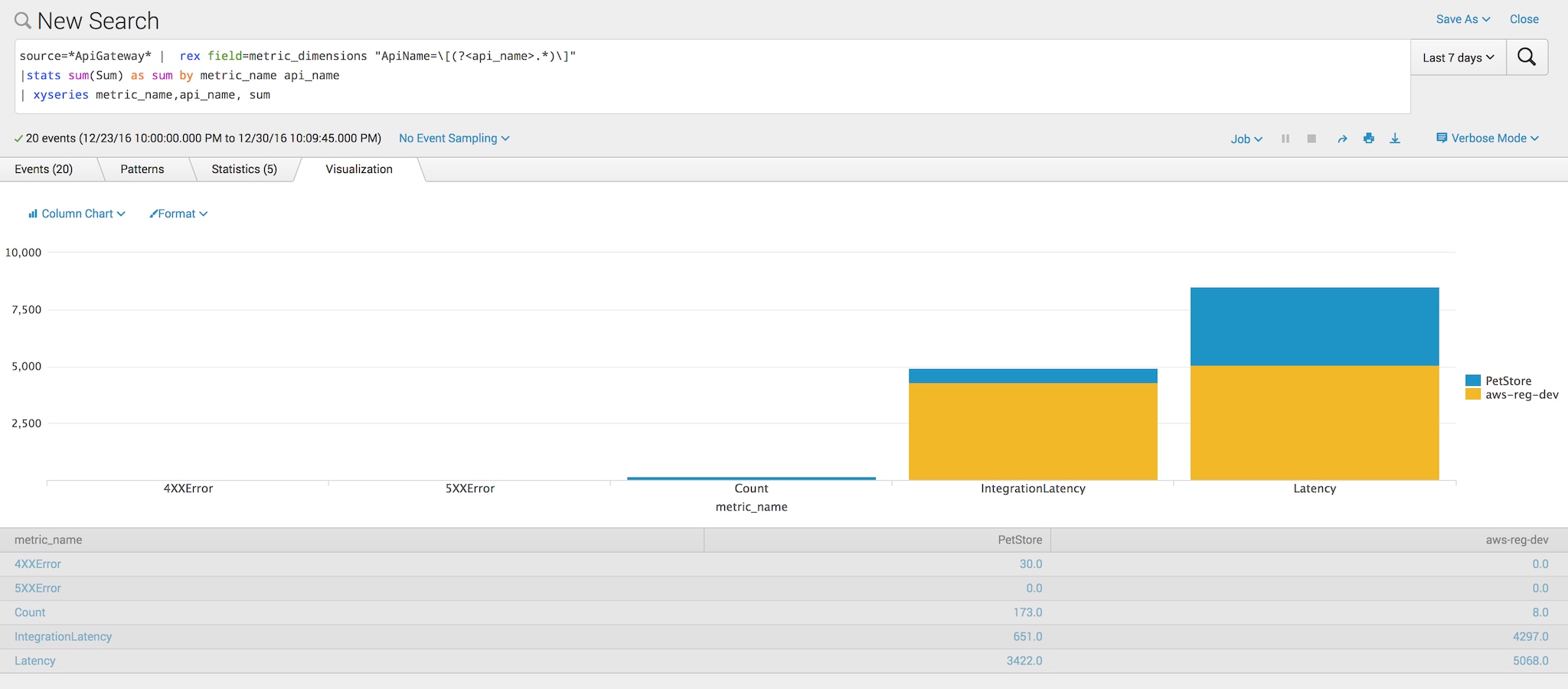
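To make the <aws region>:<metric namespace> convention for “source” concrete, here is a small Python sketch that splits a source value into its two parts and mimics the wildcard filter. The sample source strings and helper names are hypothetical, and `fnmatchcase` is only a rough stand-in for how Splunk evaluates `*` in a search.

```python
from fnmatch import fnmatchcase
from typing import NamedTuple


class CloudWatchSource(NamedTuple):
    region: str
    namespace: str


def parse_source(source: str) -> CloudWatchSource:
    """Split "<aws region>:<metric namespace>" at the first colon."""
    region, sep, namespace = source.partition(":")
    if not (sep and region and namespace):
        raise ValueError(f"unexpected source format: {source!r}")
    return CloudWatchSource(region, namespace)


def matches_wildcard(source: str, pattern: str) -> bool:
    """Approximate a wildcard match such as source="*:AWS/ApiGateway"."""
    return fnmatchcase(source, pattern)


# Hypothetical source values, as they might appear on CloudWatch events.
sources = [
    "us-east-1:AWS/ApiGateway",
    "eu-west-1:AWS/ApiGateway",
    "us-east-1:AWS/Lambda",
]

api_gateway_only = [s for s in sources if matches_wildcard(s, "*:AWS/ApiGateway")]
print(api_gateway_only)
print(parse_source("us-east-1:AWS/Lambda"))
```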

Now that we have API Gateway data flowing, let’s create a few simple searches to explore the data. First, it will be helpful to look at the different metric values across our “metric dimensions”, which is the name of our API in the AWS console:

source=*ApiGateway*

| rex field=metric_dimensions "ApiName=\[(?<api_name>.*)\]"

| stats sum(Sum) as sum by metric_name api_name

| xyseries metric_name,api_name, sum

In this case, Splunk is summing the “Sum” value of each metric name by the API name. The “rex” command is simply there for cosmetic reasons to make the API name easier to read. The resulting visualizations should look something like the following, with your API names substituted, and different distributions of values, etc.:

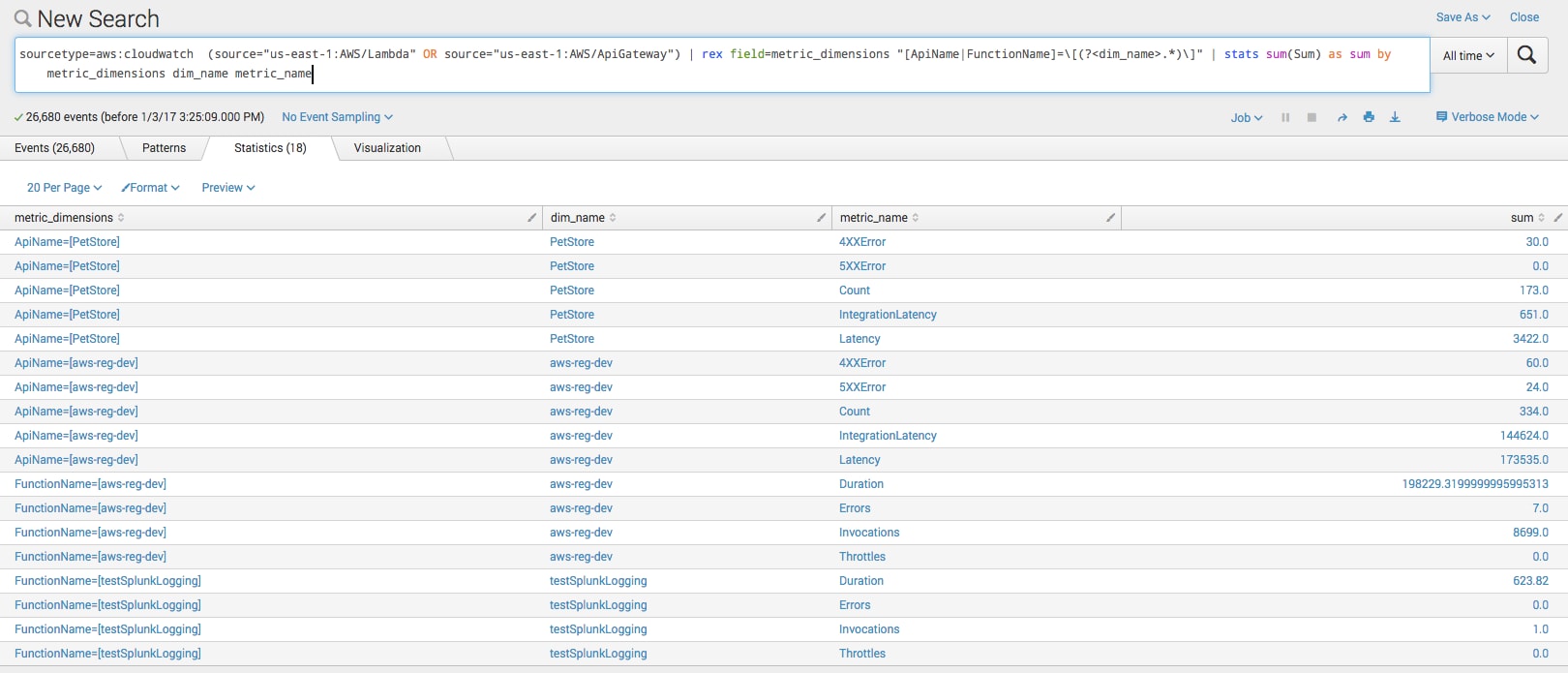
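If the rex, stats, and xyseries steps feel opaque, the same idea can be sketched in a few lines of Python: pull the API name out of metric_dimensions, sum the “Sum” values per metric and API, and lay the result out as a metric-by-API table. The events below are invented sample data, and the function name is hypothetical; note that Python spells named groups `(?P<name>...)` where the search uses `(?<name>...)`.

```python
import re
from collections import defaultdict
from typing import Any, Dict, Iterable, Mapping

API_NAME = re.compile(r"ApiName=\[(?P<api_name>.*)\]")


def pivot_sums(events: Iterable[Mapping[str, Any]]) -> Dict[str, Dict[str, float]]:
    """Sum the "Sum" field by metric_name (rows) and api_name (columns)."""
    table: Dict[str, Dict[str, float]] = defaultdict(lambda: defaultdict(float))
    for event in events:
        match = API_NAME.search(str(event["metric_dimensions"]))
        if match is None:
            continue  # no ApiName dimension, so nothing to group by
        table[event["metric_name"]][match.group("api_name")] += float(event["Sum"])
    return {metric: dict(columns) for metric, columns in table.items()}


# Invented sample events for illustration only.
events = [
    {"metric_name": "Count", "metric_dimensions": "ApiName=[orders-api]", "Sum": 10},
    {"metric_name": "Count", "metric_dimensions": "ApiName=[orders-api]", "Sum": 5},
    {"metric_name": "Count", "metric_dimensions": "ApiName=[users-api]", "Sum": 7},
    {"metric_name": "5XXError", "metric_dimensions": "ApiName=[orders-api]", "Sum": 1},
]

print(pivot_sums(events))
# {'Count': {'orders-api': 15.0, 'users-api': 7.0}, '5XXError': {'orders-api': 1.0}}
```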

Another search that might be interesting is mashing up our Lambda and API Gateway data to see the different metrics next to each other.

sourcetype=aws:cloudwatch (source="us-east-1:AWS/Lambda" OR source="us-east-1:AWS/ApiGateway")

| rex field=metric_dimensions "(?:ApiName|FunctionName)=\[(?<dim_name>.*)\]"

| stats sum(Sum) as sum by metric_dimensions dim_name metric_name

We can search by our intuitive “source” key again, this time by region. The “rex” command has been modified to grab the API Name in the case of API Gateway metrics, or the “Function Name”, in the case of Lambda metrics. The reason this works is mainly because Zappa automagically sets the API name and the Lambda function name to the same thing, so YMMV with this search.

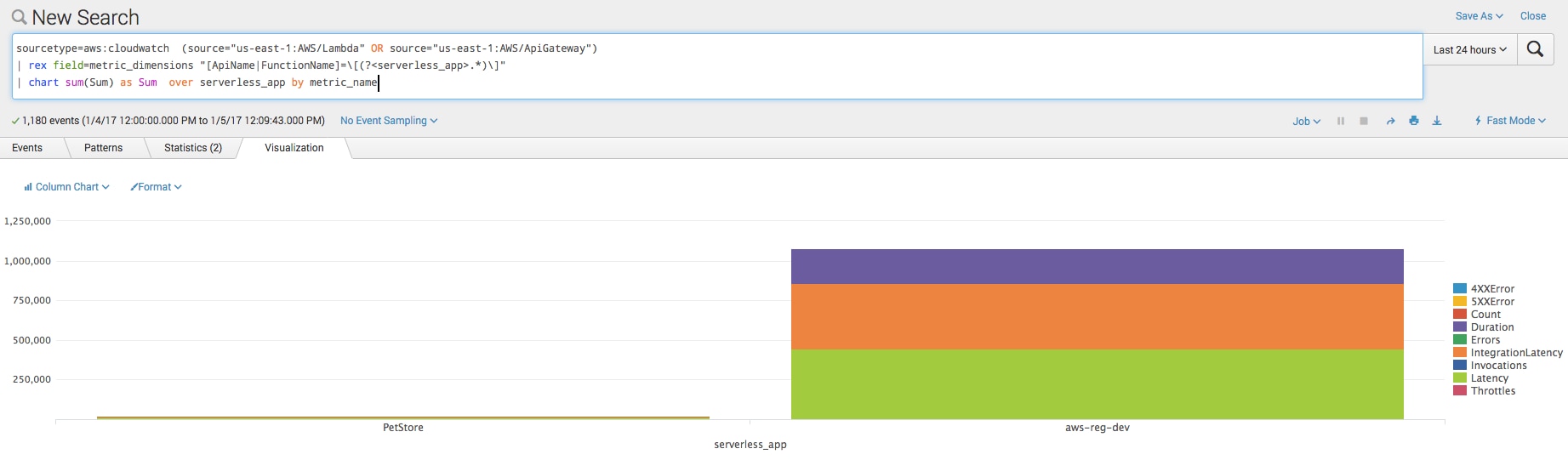
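One detail of the pattern is worth spelling out: to match either key, the two names need to sit in an alternation group such as `(?:ApiName|FunctionName)`. Square brackets would instead define a character class, which matches any single one of those letters, so other keys that happen to end in one of them can slip through. Here is a quick Python sketch of the difference; the dimension strings are hypothetical samples, and Python writes named groups as `(?P<name>...)`.

```python
import re
from typing import Optional, Pattern

# Alternation group: matches only the two intended keys.
GROUPED = re.compile(r"(?:ApiName|FunctionName)=\[(?P<dim_name>.*)\]")

# Character class: matches any ONE of the listed characters before "=".
BRACKETED = re.compile(r"[ApiName|FunctionName]=\[(?P<dim_name>.*)\]")


def extract(pattern: Pattern[str], metric_dimensions: str) -> Optional[str]:
    """Return the captured dimension value, or None if nothing matched."""
    match = pattern.search(metric_dimensions)
    return match.group("dim_name") if match else None


print(extract(GROUPED, "ApiName=[orders-api]"))       # orders-api
print(extract(GROUPED, "FunctionName=[orders-api]"))  # orders-api
print(extract(GROUPED, "Stage=[prod]"))               # None
print(extract(BRACKETED, "Stage=[prod]"))             # prod
```

The last line shows the character class picking up a “Stage” key, because “e” is one of the letters in the class.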

To take the previous example further, let’s look at grouping our metrics together as a “serverless app” where the Lambda function name and API name are the same. In this example, the “chart” command gives us a nice way to group things together.

sourcetype=aws:cloudwatch (source="us-east-1:AWS/Lambda" OR source="us-east-1:AWS/ApiGateway")

| rex field=metric_dimensions "(?:ApiName|FunctionName)=\[(?<serverless_app>.*)\]"

| chart sum(Sum) as Sum over serverless_app by metric_name

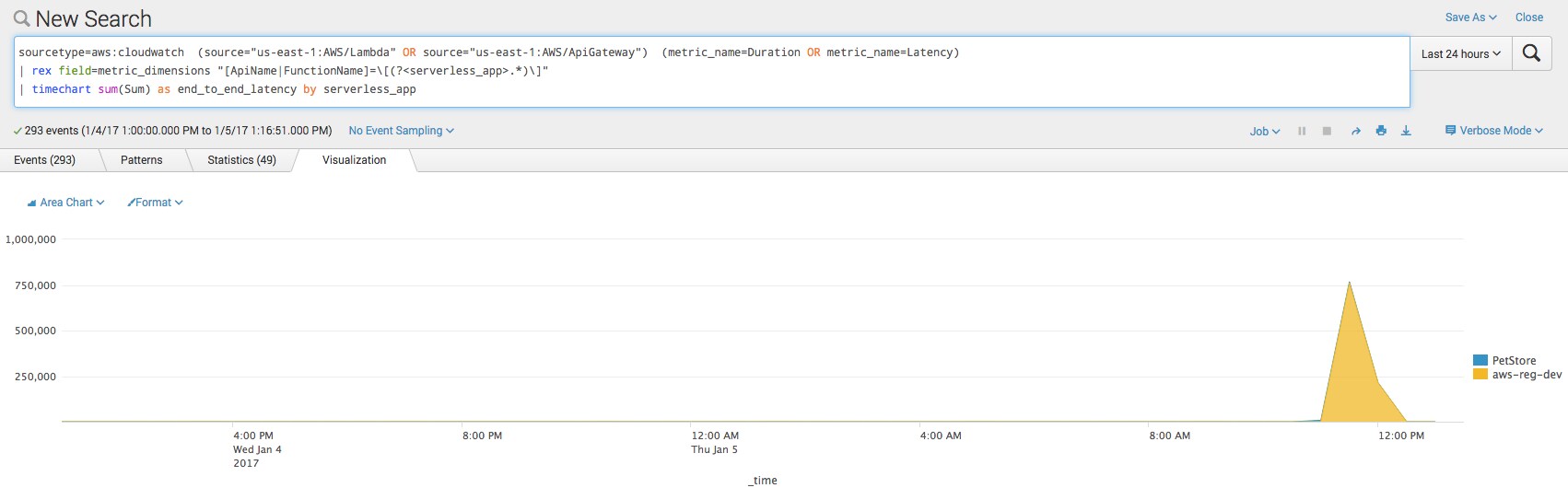

If we want to focus on a specific metric, e.g. “Latency”, we can leverage that same grouping and look at “end-to-end” latency from the API Gateway to our Lambda function. In this example, “Duration” is considered latency.

An important note to consider is that any external services called within the Lambda function contribute to the duration of the function. If your DynamoDB table, for example, is having problems, then it’s likely to cause a spike in “Latency.”

sourcetype=aws:cloudwatch (source="us-east-1:AWS/Lambda" OR source="us-east-1:AWS/ApiGateway") (metric_name=Latency OR metric_name=Duration)

| rex field=metric_dimensions "(?:ApiName|FunctionName)=\[(?<serverless_app>.*)\]"

| timechart sum(Sum) as end_to_end_latency by serverless_app

Troubleshooting

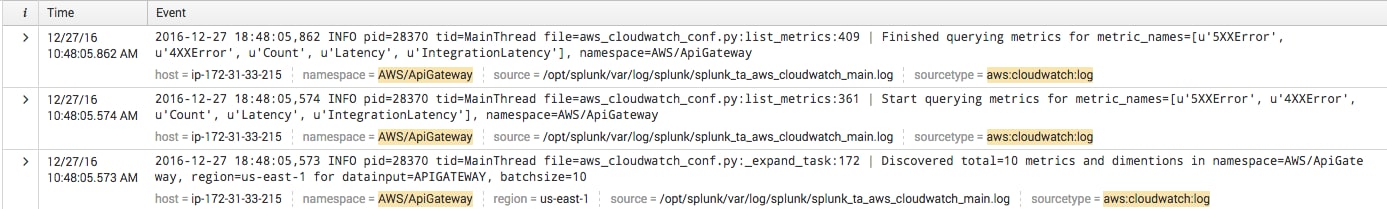

We can take a look at the Splunk Add-On’s internal logs to ensure we are collecting data. Here is a handy search:

index=_internal sourcetype="aws:cloudwatch:log" namespace=AWS/apigateway

You should see results similar to the below:

We’re excited about the wide range of possibilities that ‘serverless’ architectures on AWS present. In closing, we hope to have shown you equally compelling opportunities to utilize Splunk to monitor and visualize your serverless environments on AWS.

-Kyle & Bill

----------------------------------------------------

Thanks!

Kyle Champlin
