Deep Learning Toolkit 3.7 and 3.8 - What’s New?

By Philipp Drieger

We are excited to share the latest advances in the Deep Learning Toolkit App for Splunk (DLTK). Earlier this year, Splunk’s Machine Learning Toolkit (MLTK) was updated with some important changes. Please refer to the blog post Driving Data Innovation with MLTK v5.3 and the official documentation to learn what changed and, most importantly, how the changes may affect you, especially if you run MLTK models in production. Those changes also required a few updates to DLTK, which was a great opportunity to bring in even more features. Let’s look at them at a glance:

- Custom Certificates

- Integration with Splunk Observability

- Container operations dashboard

- Benchmarks for different dataset sizes

- Update of all images and new algorithms

Custom Certificates

DLTK supports various cloud, on-prem or hybrid deployment scenarios with Splunk Cloud, Splunk Enterprise and your container environments. To better protect and secure those architectures, many customers asked for the flexibility to integrate DLTK with their own certificates. In DLTK 3.8, the custom SSL certificates you have defined on the container side are automatically recognized and used for HTTPS communication between Splunk and your container environment. Optionally, you can also enforce hostname checking and point to your custom certificate chain on the Splunk side. This should give you enough flexibility and options to set up and secure your DLTK deployments.
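To make the two optional settings more concrete, here is a minimal sketch of what hostname checking and a custom certificate chain mean for an HTTPS client in general. It uses only Python's standard `ssl` module and is not DLTK's actual implementation or configuration; the function name `build_tls_context` and the file path in the usage note are hypothetical.

```python
import ssl
from typing import Optional


def build_tls_context(ca_chain_path: Optional[str] = None,
                      check_hostname: bool = True) -> ssl.SSLContext:
    """Build a client-side TLS context that verifies the server certificate.

    ca_chain_path is a hypothetical path to a PEM file with a custom CA chain.
    """
    # The default context loads the system CA store, requires a valid
    # server certificate and has hostname checking switched on.
    context = ssl.create_default_context(purpose=ssl.Purpose.SERVER_AUTH)
    if ca_chain_path is not None:
        # Trust the custom CA chain in addition to the system defaults.
        context.load_verify_locations(cafile=ca_chain_path)
    # Hostname checking can be toggled while certificate verification stays on.
    context.check_hostname = check_hostname
    return context
```

A client could then pass the result to, for example, `urllib.request.urlopen(url, context=build_tls_context("/path/to/chain.pem"))`, where both the URL and the path are placeholders for your own environment.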

Integration with Splunk Observability

Because DLTK relies on container-based machine learning or deep learning algorithms that can run flexibly on Docker, Kubernetes, OpenShift or similar container environments, you can easily monitor them with Splunk’s Observability Suite. Learn more in the recently published State of Observability report 2022.

In the screenshot above you see an example of the CPU utilization in a DLTK Kubernetes cluster. From an infrastructure perspective, you can analyze your compute and memory footprint and see which models are the top consumers. From a deployment perspective, you can see the latency of your machine learning endpoints and services. And from a development perspective, you can spot bottlenecks in your algorithm's design and optimize your code. All in all, you get better observability of your DLTK-based machine learning operations. As of DLTK 3.8, you can also auto-instrument all your containers with one simple setup configuration.

Container Operations Dashboard

For your machine learning operations, you can further monitor and analyze what’s going on with your DLTK containers:

- What’s the activity on your clusters?

- What are the most frequently used algorithms?

- What are the typical runtime durations of your operations?

- What are possible errors that have occurred?

If you add the various logs from Splunk and your container environment, you can get a fairly comprehensive picture of your machine learning operations, especially if you also add the Observability aspects from above!

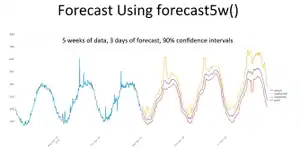

Benchmarks for Different Dataset Sizes

The datasets you want to process can vary in shape and size; as so often, it depends on the use case and the available data. Still, a simple comparison chart can give you a rough idea of what processing times look like, and this is what the benchmark statistics above are meant to provide. The benchmarks are purely informative and are intended to give you a better understanding of the generic runtime behavior of DLTK for typical dataset sizes. Please note that other algorithms or dataset sizes can lead to very different runtime behavior and should therefore be investigated case by case, within the constraints of the available compute infrastructure and setup. Furthermore, the currently available benchmarks only profile single-instance “development” DLTK deployments that do not use any parallelization or distribution strategies. They provide a simple baseline and an estimate for basic algorithms operating on typical small to medium-sized datasets.
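If you want to investigate runtime behavior for your own case, a small timing harness is often enough to get started. The sketch below is not how the DLTK benchmarks were produced; it is a generic example in plain Python. The names `benchmark` and `column_means` are hypothetical, the data is random, and `column_means` is only a stand-in for a real algorithm.

```python
import random
import statistics
import time
from typing import Callable, Dict, List, Sequence

Rows = List[List[float]]


def column_means(rows: Rows) -> List[float]:
    """Stand-in workload: compute the mean of every column."""
    return [sum(col) / len(col) for col in zip(*rows)]


def benchmark(workload: Callable[[Rows], object],
              sizes: Sequence[int],
              repeats: int = 3,
              n_features: int = 4) -> Dict[int, float]:
    """Return the median wall-clock runtime in seconds per dataset size."""
    results: Dict[int, float] = {}
    for n_rows in sizes:
        # Random data only; real datasets may behave very differently.
        data = [[random.random() for _ in range(n_features)]
                for _ in range(n_rows)]
        timings = []
        for _ in range(repeats):
            start = time.perf_counter()
            workload(data)
            timings.append(time.perf_counter() - start)
        # The median is less sensitive to one-off slow runs than the mean.
        results[n_rows] = statistics.median(timings)
    return results
```

Calling `benchmark(column_means, [1_000, 10_000, 100_000])` returns a dictionary that maps each dataset size to a median runtime, which you can then chart against the dataset size on your own infrastructure.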

Update of All Images and New Algorithms

With DLTK 3.8, all pre-built container images have been updated and made available on GitHub. If you want to extend or modify an existing image or build a completely new one, this should be fairly easy to do. Lastly, a new specialized image was added to DLTK that focuses on River, a Python package for online machine learning algorithms. We will discuss more details in the next blog article.

Another recently added algorithm example is a basic Hidden Markov Model (HMM) built with the pomegranate Python package. HMMs are structured probabilistic models that define a probability distribution over sequences, as opposed to individual symbols. An HMM is similar to a Bayesian network in that it has a directed graphical structure where nodes represent probability distributions. Unlike in a Bayesian network, where edges encode dependence statements, the edges of an HMM represent transitions and encode transition probabilities. A strength of HMMs is that they can model variable-length sequences, whereas other models typically require a fixed feature set. The example above shows a simple HMM built from the punctuation in log data. By applying it, we can retrieve the probability of a given punctuation sequence and use it to spot possible structural anomalies in ingested log data. Remember, this is just one of many applications that involve modeling sequences. In cybersecurity, I feel there are plenty of use cases that could benefit from such an approach.
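As a rough illustration of how an HMM assigns a probability to a punctuation sequence, consider the following sketch. It is not the pomegranate-based DLTK example; it is a hand-rolled forward algorithm in plain Python. The helper `punctuation_of`, the two state names and all probabilities are hypothetical values picked for illustration, not parameters trained on real logs, and the `floor` value for unseen symbols is only a crude form of smoothing.

```python
import math
import re
from typing import Dict

Dist = Dict[str, float]


def punctuation_of(line: str) -> str:
    """Drop letters and digits; collapse each whitespace run into '_'."""
    no_alnum = re.sub(r"[A-Za-z0-9]", "", line)
    return re.sub(r"\s+", "_", no_alnum)


def sequence_log_probability(seq: str, start: Dist,
                             trans: Dict[str, Dist],
                             emit: Dict[str, Dist],
                             floor: float = 1e-6) -> float:
    """Forward algorithm with rescaling; returns log P(seq | model)."""
    if not seq:
        raise ValueError("sequence must not be empty")
    states = list(start)
    # alpha[s] is proportional to P(symbols so far, current state = s).
    alpha = {s: start[s] * emit[s].get(seq[0], floor) for s in states}
    log_prob = 0.0
    for symbol in seq[1:]:
        # Rescale to avoid underflow and keep the log of the scale factor.
        scale = sum(alpha.values())
        log_prob += math.log(scale)
        alpha = {s: value / scale for s, value in alpha.items()}
        # Move one step along the transitions, then emit the next symbol.
        alpha = {
            s: emit[s].get(symbol, floor)
            * sum(alpha[p] * trans[p][s] for p in states)
            for s in states
        }
    return log_prob + math.log(sum(alpha.values()))


# Hypothetical, hand-picked model parameters (not trained).
START = {"gap": 0.6, "token": 0.4}
TRANS = {"gap": {"gap": 0.3, "token": 0.7},
         "token": {"gap": 0.6, "token": 0.4}}
EMIT = {"gap": {"_": 0.8, "-": 0.1, ":": 0.1},
        "token": {"/": 0.3, ".": 0.3, ":": 0.2, "_": 0.1,
                  "[": 0.05, "]": 0.05}}
```

With these parameters, a familiar pattern such as `punctuation_of("GET /index.html 200")` receives a much higher log probability than a sequence made only of symbols the model has never seen, which is the signal one would threshold on to flag structural anomalies.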

If you have not downloaded the Deep Learning Toolkit App for Splunk yet, I hope this article motivates you to do so. I’m also happy to hear your feedback and ideas! Find me on LinkedIn and feel free to reach out to me.

Happy Splunking,

Philipp
