Using eval to Calculate, Appraise, Classify, Estimate & Threat Hunt

I hope you're all enjoying this series on Hunting with Splunk as much as we enjoy bringing it to you.

This article discusses a foundational capability within Splunk — the eval command. If I had to pick a couple of Splunk commands that I would want to be stuck on a desert island with, the eval command is up there right next to stats and sort.

(Part of our Threat Hunting with Splunk series, this article was originally written by John Stoner. We’ve updated it recently to maximize your value.)

The eval command for hunting

The eval command lets you take search results and perform all sorts of, well, evaluations on the data. Among other things, it supports:

- Conditional functions, like if, case and match

- Mathematical functions, like round and sqrt (square root)

- Date and time functions

- Cryptographic functions, like MD5, SHA1, SHA256, SHA512

- And so much more!

To do justice to the power of eval would take many pages, so today I am going to stick to the four examples below.

Setting our hypothesis

As discussed throughout this blog series, the building and testing of hypotheses is so important when hunting. With that in mind, let’s dive straight into an example where eval is incredibly useful.

For this hunt, my hypothesis is that abnormally long process command lines are of interest to us. Let's start hunting.

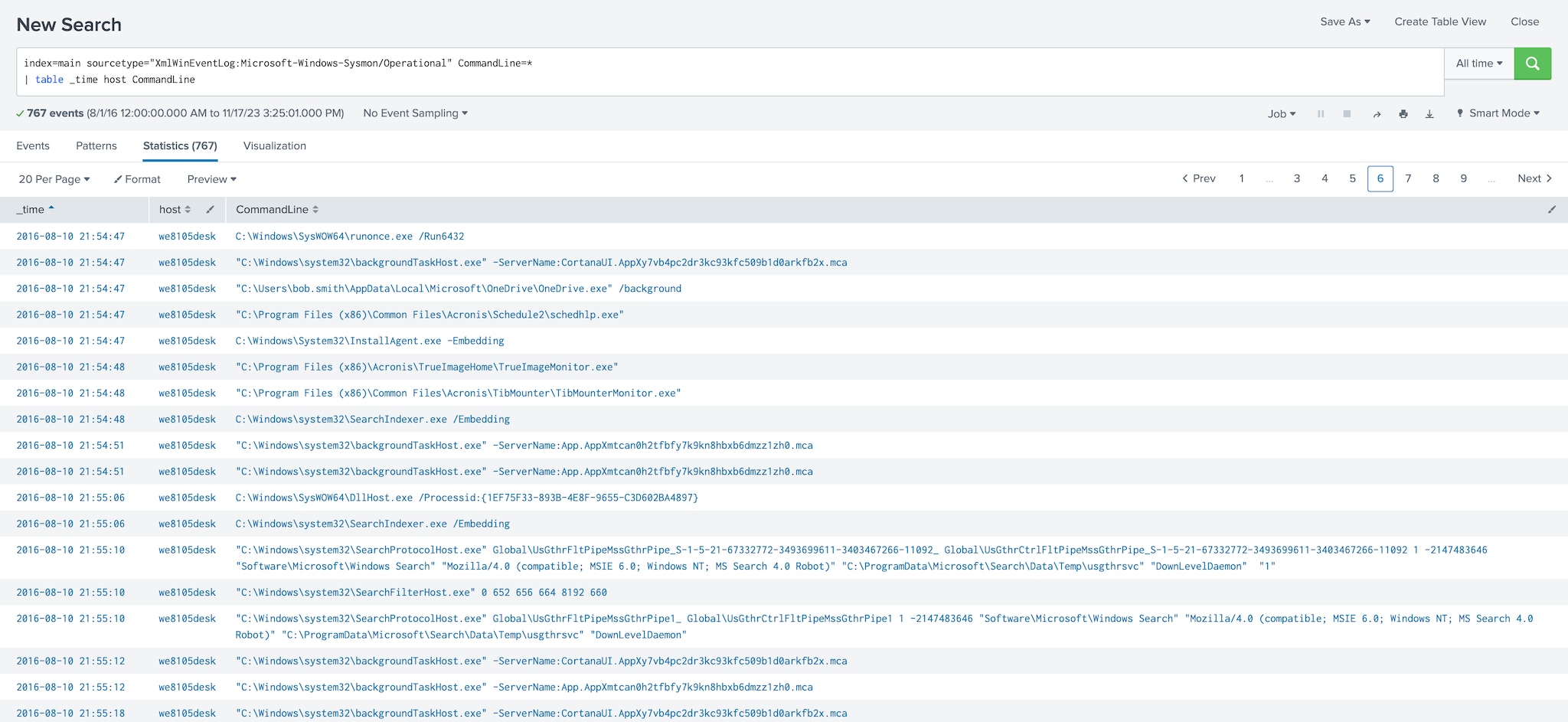

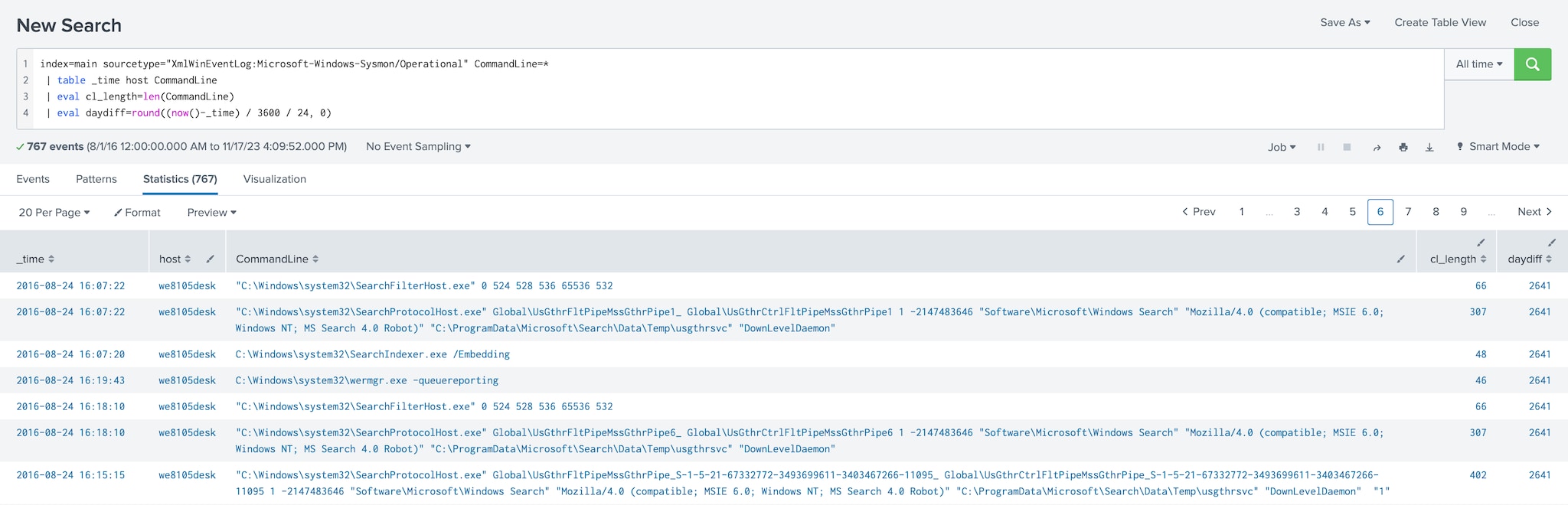

For this initial search, I will leverage Microsoft Sysmon data because of its ability to provide insight into processes executing on our systems. As always, it's important to focus the hunt on data sets that are relevant. My initial search of Sysmon, isolating process, time and host, would look something like this:

```
index=main sourcetype="XmlWinEventLog:Microsoft-Windows-Sysmon/Operational" CommandLine=* | table _time host CommandLine
```

Basically, I am searching the Sysmon data and using the table command to display the time, host and command line in easy-to-read columns.

Calculating the length of a string

With the initial search in place, I can start using eval.

My hypothesis states that long command line strings are of concern because they can harbor badness. I want to establish which hosts, if any, have long process strings executing and, if they do, when they executed.

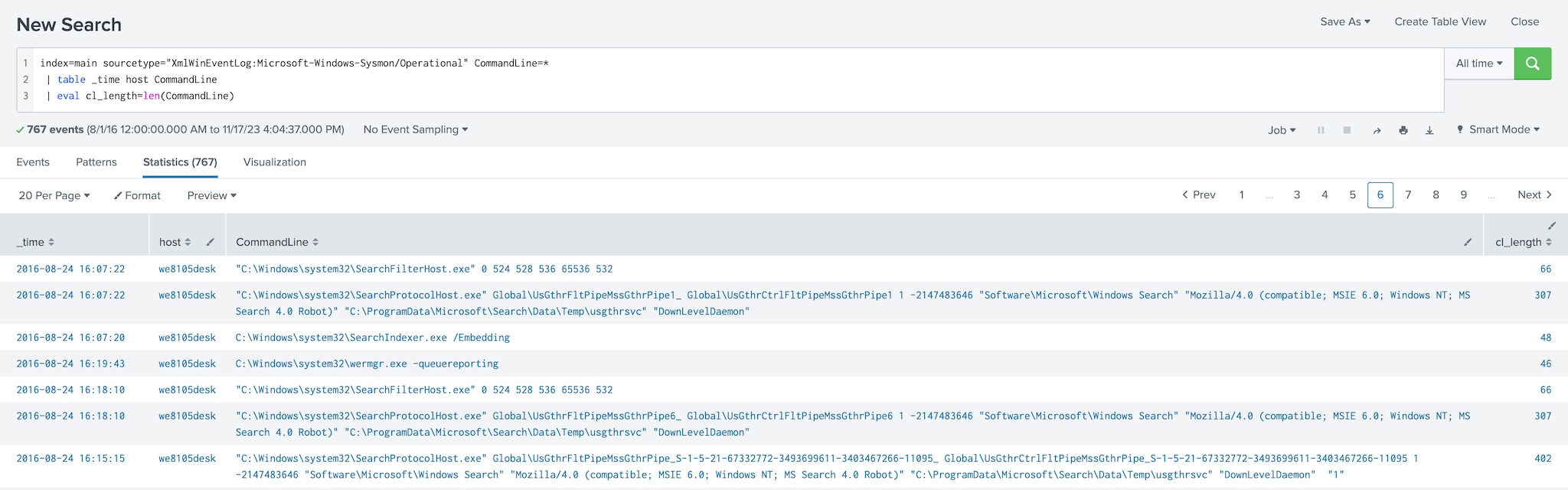

To determine the length of a string, I will use eval to define a new field called cl_length and assign it the output of a function. The function is len(CommandLine): len returns the number of characters in the field named in parentheses, in this case CommandLine.

```
index=main sourcetype="XmlWinEventLog:Microsoft-Windows-Sysmon/Operational" CommandLine=* | table _time host CommandLine | eval cl_length=len(CommandLine)
```

Notice that an additional column, cl_length, has been added on the far right; it holds the newly calculated length of each command line.
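To make the len() step concrete outside Splunk, here is a minimal Python sketch that mirrors `eval cl_length=len(CommandLine)` on a couple of hypothetical Sysmon-style events. The event values and the helper name `add_cl_length` are invented for illustration; Python's `len` counts the characters in a string, which is the idea behind the eval function, but this is not how Splunk implements it.

```python
# Hypothetical Sysmon-style events, reduced to the fields the search uses.
events = [
    {"_time": 1_600_000_000, "host": "wks-01", "CommandLine": "notepad.exe"},
    {"_time": 1_600_000_500, "host": "wks-02",
     "CommandLine": "powershell.exe -nop -w hidden -enc SQBFAFgA"},
]


def add_cl_length(event):
    """Return a copy of the event with a cl_length field, like eval cl_length=len(CommandLine)."""
    return {**event, "cl_length": len(event["CommandLine"])}


rows = [add_cl_length(e) for e in events]
for row in rows:
    # Same columns as the table command, plus the calculated field on the right.
    print(row["_time"], row["host"], row["CommandLine"], row["cl_length"])
```

As in the search, the original fields are left untouched and the calculated value simply rides along as one more column.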

If I want to continue to evolve this search, I can apply statistical methods to the data:

- As discussed in our threat hunting stats command tutorial, I can calculate average, standard deviation, maximum, minimum and more on a numeric value while grouping by other field values like host.

- The where command can filter out values below a threshold you define, and the sort command can bring the longest (or shortest) strings to the top.
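The statistical follow-up can be sketched the same way. In SPL it might look like `| stats avg(cl_length) stdev(cl_length) max(cl_length) min(cl_length) by host` for a per-host summary, or `| where cl_length > 500 | sort - cl_length` for a threshold. The Python below mirrors both ideas on hypothetical (host, cl_length) pairs; the 500-character threshold is an arbitrary assumption, not a recommended value, and `statistics.stdev` computes the sample standard deviation.

```python
from statistics import mean, stdev

# Hypothetical (host, cl_length) pairs, as produced by the eval step.
rows = [
    ("wks-01", 40), ("wks-01", 42), ("wks-01", 38),
    ("wks-02", 35), ("wks-02", 900),
]

# Group lengths by host, similar to `stats ... by host`.
by_host = {}
for host, length in rows:
    by_host.setdefault(host, []).append(length)

# Per-host summary; stdev() is the sample standard deviation and needs 2+ values.
summary = {
    host: {"avg": mean(vals), "stdev": stdev(vals), "max": max(vals), "min": min(vals)}
    for host, vals in by_host.items()
}

# Keep rows above an arbitrary threshold, longest first (like `where` plus `sort`).
THRESHOLD = 500
long_rows = sorted((r for r in rows if r[1] > THRESHOLD), key=lambda r: r[1], reverse=True)

for host, stats in summary.items():
    print(host, stats)
print(long_rows)
```

A host whose maximum sits far above its own average, like wks-02 in this made-up data, is the kind of outlier the hypothesis points at.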

Calculating time between two events

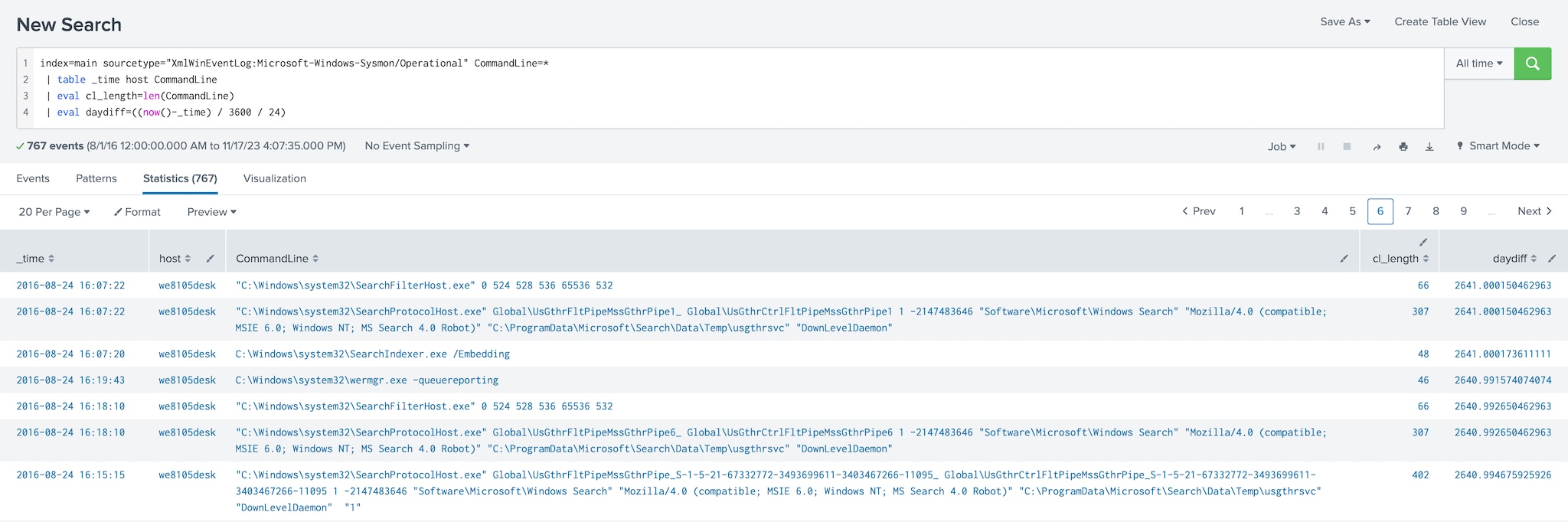

Another way eval can be used for hunting is by calculating the time elapsed between different events and applying functions to date/time values. Continuing with my earlier hypothesis and applying the sort command, I can see a long command line string on a specific host, and I want to calculate how many days have passed since that command line executed on the system.

Using eval, I can do this easily. I will create a new field called daydiff. I want to compare the _time of the event with today’s date to calculate the number of days since this long string ran. My search with the new eval command would look something like this:

```
index=main sourcetype="XmlWinEventLog:Microsoft-Windows-Sysmon/Operational" CommandLine=* | table _time host CommandLine | eval cl_length=len(CommandLine) | eval daydiff=((now()-_time) / 3600 / 24)
```

Let’s dissect this a bit. We're taking the current time, returned by now() as the time the search started, and subtracting the event time (_time). Both values are epoch timestamps in seconds, so the difference is the elapsed time in seconds.

However, I don’t want to know that 38,526,227 seconds (as I am writing this) have elapsed since the event occurred — I want to know the number of days.

Rounding a calculated value

To determine the number of days that we have had this exposure, I need to convert seconds to days. This is easily done by dividing the number of seconds by 3,600 to get hours and then by another 24 to get our result in days. Could I just divide by 86,400? Of course, but I like to do things the complicated way.
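The seconds-to-days arithmetic is easy to check by hand. This Python sketch mirrors `eval daydiff=((now()-_time) / 3600 / 24)` using the 38,526,227-second figure from the text. The function name `days_since` is hypothetical, and `time.time()` stands in for `now()`; since Splunk's `now()` is the search start time, the two will differ slightly in practice.

```python
import math
import time


def days_since(event_time, now=None):
    """Elapsed days between two epoch timestamps, like (now()-_time) / 3600 / 24."""
    if now is None:
        now = time.time()  # current epoch time, standing in for now()
    seconds = now - event_time
    return seconds / 3600 / 24  # seconds -> hours -> days


# The figure quoted in the text: 38,526,227 seconds elapsed.
elapsed_days = days_since(0, now=38_526_227)
print(elapsed_days)  # a little under 446 days

# Dividing by 3,600 and then 24 matches dividing by 86,400 (up to float rounding).
print(math.isclose(elapsed_days, 38_526_227 / 86_400))
```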

Now that I have the number of days, I don’t want to provide a decimal answer. Leadership probably doesn’t care that my answer is 27.3 days of exposure.

In this circumstance, an integer answer is probably sufficient, so I can use the round function within the same eval command to round the answer to the nearest integer.

To do this, I set the second argument of round, the number of decimal places, to 0. With round added, the calculated value goes into the field I created called daydiff. This new field can then be used in additional searches built on this search, as well as in alerts and reporting.

```
index=main sourcetype="XmlWinEventLog:Microsoft-Windows-Sysmon/Operational" CommandLine=* | table _time host CommandLine | eval cl_length=len(CommandLine) | eval daydiff=round((now()-_time) / 3600 / 24, 0)
```

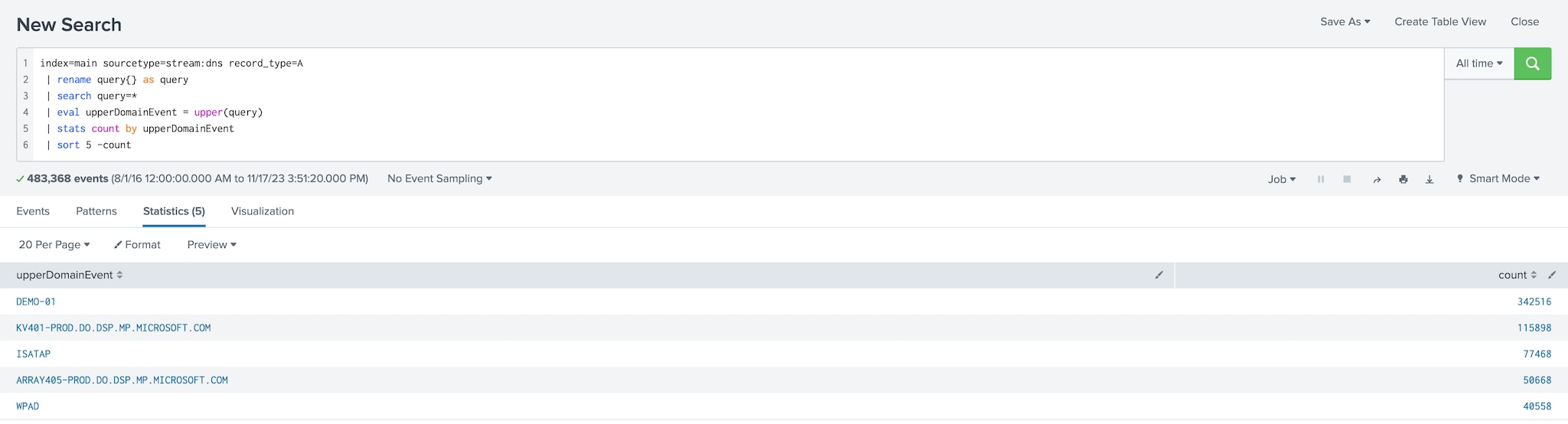
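Adding the rounding step, a minimal Python sketch of `eval daydiff=round((now()-_time) / 3600 / 24, 0)` might look like this. `day_diff` is a hypothetical helper. One caveat: Python's built-in `round` uses round-half-to-even, so values ending exactly in .5 may round differently than in a tool that rounds half up.

```python
def day_diff(event_time, now):
    """Whole days between two epoch timestamps, like round((now()-_time) / 3600 / 24, 0)."""
    # round() with no ndigits returns an int; round(x, 0) would return a float instead.
    return round((now - event_time) / 3600 / 24)


print(day_diff(0, 38_526_227))  # about 445.9 days -> 446
print(day_diff(0, 2_358_720))   # 27.3 days expressed in seconds -> 27
```

The second call reproduces the 27.3-day case from the text: leadership gets 27 rather than a decimal.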

Converting fields to all upper or lower case

Eval can also be used for text manipulation. Suppose I want to correlate events with a list of domains, but the lookup I have been provided stores all of the domains in uppercase. To make sure matches are found, I can use the upper (or lower) function with eval to normalize the case of the event data first, and then use the lookup command or another transforming command to perform the correlation.

The example below uses the upper function to convert the DNS query field to all uppercase. That's pretty self-explanatory.

```
index=main sourcetype=stream:dns record_type=A
| rename query{} as query
| search query=*
| eval upperDomainEvent = upper(query)
| stats count by upperDomainEvent
| sort 5 -count
```

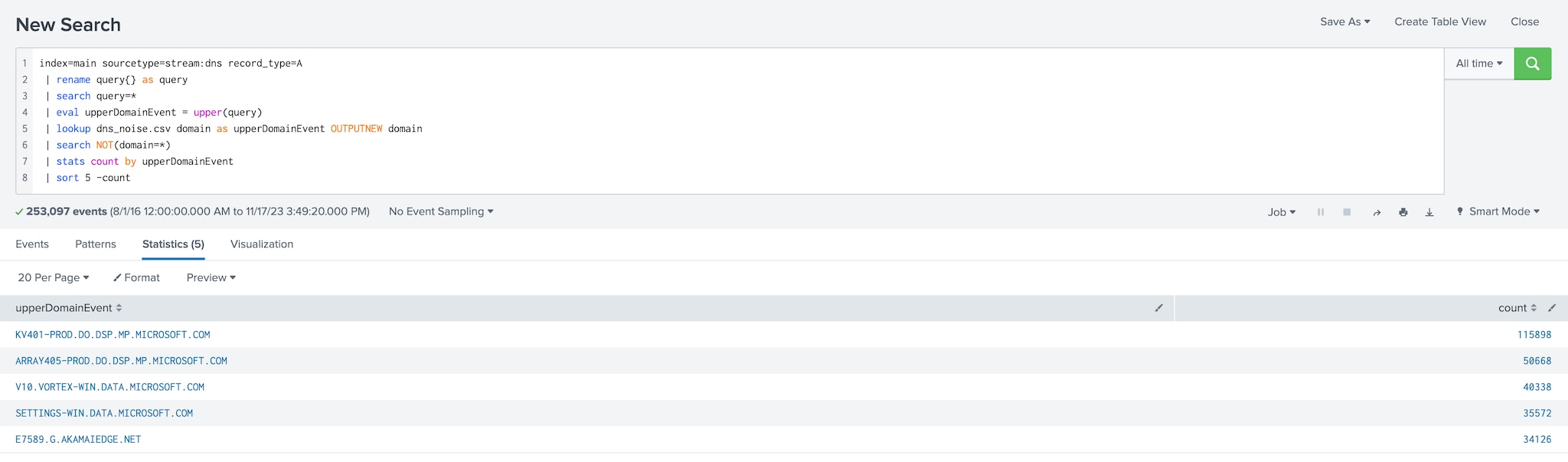
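The upper() normalization and the `stats count by ... | sort 5 -count` step can be mirrored in Python with `collections.Counter`. The query values below are hypothetical, and `None` stands in for an event with no query value (which `search query=*` would drop); this illustrates the logic only, not how Splunk executes the search.

```python
from collections import Counter

# Hypothetical DNS A-record queries; None represents an event without a query value.
queries = [
    "www.Example.com", "WWW.EXAMPLE.COM", "www.example.com",
    "updates.vendor.test", None, "mail.example.org",
]

# search query=*  -> keep events that have a query
# eval upperDomainEvent = upper(query)  -> normalize to uppercase
upper_domains = [q.upper() for q in queries if q is not None]

# stats count by upperDomainEvent | sort 5 -count  -> five most frequent values
top5 = Counter(upper_domains).most_common(5)
for domain, count in top5:
    print(domain, count)
```

Without the upper() step, the three spellings of www.example.com would be counted as three separate values.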

To eliminate the values that are in the dns_noise.csv file, I then add the lookup command and an additional search command that keeps only the queries not on the list. The result looks a bit different.

```
index=main sourcetype=stream:dns record_type=A
| rename query{} as query
| search query=*
| eval upperDomainEvent = upper(query)
| lookup dns_noise.csv domain as upperDomainEvent OUTPUTNEW domain
| search NOT(domain=*)
| stats count by upperDomainEvent
| sort 5 -count
```
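The allowlist step is the same idea with a set-membership check. In this sketch the CSV contents, including its single `domain` column, are hypothetical stand-ins for dns_noise.csv; keeping only values with no match plays the role of `lookup ... OUTPUTNEW domain` followed by `search NOT(domain=*)`. The last lines show why case matters: the raw, mixed-case queries match nothing in an uppercase list.

```python
import csv
import io
from collections import Counter

# Hypothetical stand-in for dns_noise.csv: one "domain" column, all uppercase.
NOISE_CSV = "domain\nWWW.EXAMPLE.COM\nUPDATES.VENDOR.TEST\n"
noise_domains = {row["domain"] for row in csv.DictReader(io.StringIO(NOISE_CSV))}

# Hypothetical DNS queries from events.
queries = ["www.example.com", "Updates.Vendor.Test", "odd-host.example.net", "odd-host.example.net"]

# Normalize case, then keep only domains that are not on the noise list.
upper_domains = [q.upper() for q in queries]
remaining = [d for d in upper_domains if d not in noise_domains]
top5 = Counter(remaining).most_common(5)
print(top5)

# Skipping the upper() step: no query matches the uppercase list, so nothing is filtered.
raw_misses = [q for q in queries if q not in noise_domains]
print(len(raw_misses))
```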

You may be looking at this last example and asking why I brought this up. I mention the eval text conversion working with lookups because, if I'm hunting, the last thing I want to get caught up in is a lot of noise that is not important to my hunt.

Being able to use an allowlist (fka whitelist) and get rid of the noise is very important.

Extend this example with parsing

This short example can be extended using some of the tips that David Veuve described in his original article on UT_parsing Domains Like House Slytherin. By using the URL Toolbox to extract domains from DNS queries and URLs, you can then apply the lookup command to compare the extracted domain to the list of domains that you have — just remember that case sensitivity matters!

Conclusion

As you can see from this post, there are many uses for the eval command. To be clear, I only scratched the surface of its power. If you have the Splunk Quick Reference Guide, you'll notice that one entire page of the tri-fold covers eval and its associated functions.

Hunting with eval unlocks additional capabilities, giving analysts a way to convert data, isolate strings, match IP addresses, compute values and much more.

Happy hunting! :-)

