What is Infrastructure Monitoring?

Splunk Infrastructure Monitoring

The famous phrase “Houston, we’ve had a problem” isn’t a one off event for space missions or Tom Hanks — its a regular occurrence for most IT teams! Today’s IT teams are peppered with alerts indicating that something has gone amiss in their production environments.

Visibility of uptime and performance is an essential part of ensuring that your IT infrastructure can power applications to meet business needs and deliver value for users.

So, exactly what infrastructure monitoring is might be straightforward to you. Yet the journey of monitoring your infrastructure starts long before systems go live. You’ll plan, integrate, test as required beforehand, all to make sure that the right quality of alerts and events are generated and shared with the right teams at the right time. This enables effective response across the business.

In this article we will review the approaches, tools, and challenges associated with infrastructure monitoring, as well what the future holds for this space.

Infrastructure monitoring today

Despite advances in technology such as distributed architecture, automated replication, and self-healing systems that enable high availability, the harsh reality is that IT systems still let us down once in a while.

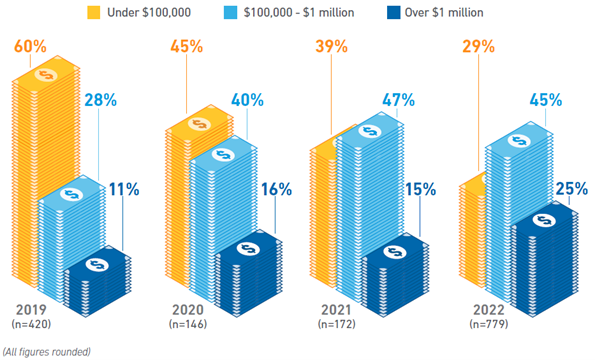

The Uptime Institute in their 2023 survey reported that outage rates have been gradually falling in recent years. It’s not all good news, however: the cost impact of outages is increasing, with more than two-thirds of all outages costing more than $100,000.

Outages result from not only from technology elements but are also caused by the actions of third-party providers, human error and management failures. For these reasons, it is essential that the right infrastructure monitoring capabilities be deployed to better and faster detect issues before they snowball into critical incidents.

Cost of most recent downtime, 2019-2022 (Source)

How monitoring infrastructures works: What to monitor & how to approach

Infrastructure monitoring involves the ongoing observation, identification, categorization and analysis of significant changes in state affecting infrastructure components.

The key domains that are checked from infrastructure events generated include:

- Uptime: Whether the given component is powered, responsive and executing the expected functionality. For example: devices in a rack may be off due to a power issue at a data center.

- Accessibility: Whether the component is reachable, based on the configuration and functionality of network connectivity (routers, switches, firewalls and ports). For example, trying to ping a server may return "Request timed out" or "Could not reach destination host."

- Capacity: Whether the component processes or data are constrained based on the dimensions of its constituents, e.g. memory, CPU utilization, storage, or bandwidth. For example, an overloaded router may result in dropped packets.

- Performance: Whether the component is functioning in line with expected standards with regard to responsiveness, as well as speed, accuracy and completeness while processing transactions. For example, a database may exhibit slow read/write speeds, or return errors when processing queries.

- Security: Whether the component is maintaining the prescribed levels of confidentiality and integrity of the data contained within it. For example, a system account may be compromised, and may attempt to access or process information that is restricted to it.

Most infrastructure elements have native monitoring features that will generate these events on a pre-determined basis.

Learn more about Splunk Infrastructure Monitoring.

Protocols such as ICMP, SNMP and WMI enable monitoring by facilitating the collection and organization of such events, which can then be directed to a monitoring tool or a designed-for-purpose monitoring system. This then allows for opportunities for:

- Real time viewing

- Aggregation for purposes of correlation and longer term analysis

Agent vs. agentless monitoring

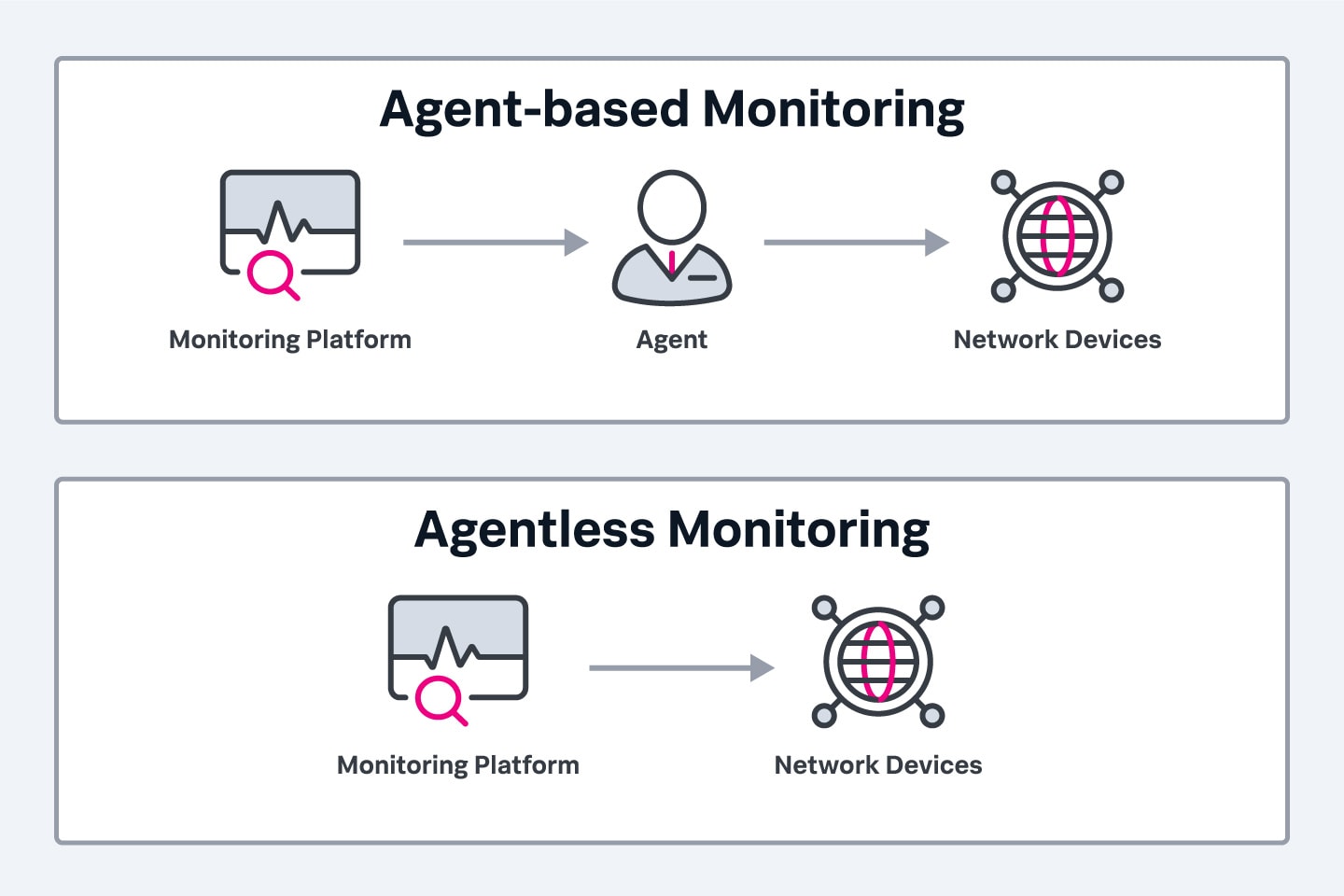

In some instances, organizations may add custom-built instrumentations to capture specific events that may not be generated by standard protocols or tools. Infrastructure elements are usually polled via agent to extract events — or may automatically send notifications to a monitoring system (agentless).

- Agent monitoring: Agent-based monitoring is platform-specific, offering deeper data collection but with proprietary limitations that can hinder migration. Ensure your monitoring system is compatible with the agents used in your infrastructure.

- Agentless monitoring: Agentless monitoring uses protocols like SNMP, WMI, SSH, and NetFlow to collect system data without additional agents. It supports devices such as servers, network equipment, storage, and virtual machines (e.g., VMware, Hyper-V). A robust monitoring system can centrally manage these components.

However, some monitoring systems involve the installing of agents that are lightweight software applications — these send more in-depth monitoring data. Of course, this comes with an overhead of managing the agents including configuration and updates.

The importance of thresholds

Because infrastructure systems will generate a ton of events on a regular basis, thresholds are crucial to act as a filter so that only the most pertinent alerts are acted upon. Setting these thresholds is a critical exercise, and that’s for a fairly obvious reason:

- Manufacturers of infrastructure may have set pre-determined thresholds.

- System administrators within your specific organization may want their own distinct thresholds based on the nature (and their knowledge) of their environment.

For example, a threshold of 80% capacity may be inconsequential for a cloud based designed to automatically scale — but that same 80% may be dangerous for a legacy system that requires significant planning and action to provision additional capacity.

An incorrect threshold may result in vital system statuses being overlooked, or the administrators being overwhelmed by inconsequential alerts.

Usually, the categorization of events will inform the thresholds settings, and these are usually three groups:

- Informational events: These do not require an action. Examples include: when the system is up, or that a transaction processed successfully.

- Warning events: These may or may not require an action. For instance, database capacity hitting 80%.

- Exceptional events: These warrant an action, for example backup failed or virtual instance is unresponsive.

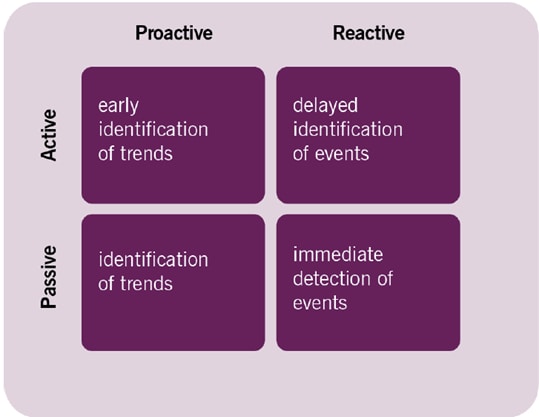

Active vs. passive, reactive vs. proactive monitoring

According to ITIL® 4, active monitoring involves monitoring tools interrogating infrastructure components, while passive monitoring involves the components themselves sending collective notifications to the monitoring systems on a predetermined schedule or trigger.

Based on these two approaches, an organization can determine a strategy that is either proactive or reactive in nature.

- Reactive monitoring is the most common approach by most, where a system administrator waits to receive the actual alert and then acts on it.

- Proactive monitoring would involve interrogation and analysis of system metrics before they gain any form of significance.

For instance, analysis and correlation of system logs to uncover trends that point to an anomaly such as errors that are generated by specific queries, or related to specific components.

In this scenario, a combination of both proactive and reactive approaches is your best bet for a holistic view of infrastructure status and performance.

Active vs Passive, Proactive vs Reactive Monitoring (ITIL 4)

Challenges with IT monitoring & tools

As organizations drive digital transformation initiatives through cloud based systems, one of the challenges encountered is the visibility across the entire infrastructure stack, particularly in hybrid environments that include on-premise data centers.

Lack of full visibility

The complexity that is introduced across multiple cloud providers as a result of many moving parts that are constantly changing due to multiple daily deployments and automated provisioning means that having an exact picture of the environment becomes a tough exercise. In addition, getting tools that can visualize the entire architectural or service model view across all infrastructure elements is difficult and costly.

(Don’t worry: Splunk makes it easy for you.)

Compounding data sources

Another hurdle that organizations have to deal with is the ever increasing volume of data that is spewed by infrastructure components.

The greater the number of constituent elements monitored and their frequency of probing, the more time and effort needs to be spent filtering, classifying and analyzing data. (And with the law of diminishing returns at work, the greater the monitoring data the lower the return from it.)

Yes, observability solutions have emerged to do most of the heavy lifting in aggregation and analysis of logs, metrics, and traces. But these solutions alone aren’t enough: you’ll still need significant human resources such as a NOC team at the end of the chain to respond and take action.

Finding the right balance between informativity, granularity, and frequency of infrastructure is vital in addressing this challenge.

Infrastructure monitoring best practices

As with anything important, a system is only as effective as its implementation and management. Use the following best practices to set-up an infrastructure monitoring tools that meet the needs of your business:

- Prioritize alerts: Set detailed, urgent notifications to avoid missing critical issues.

- Schedule a trial run: Test your system before an actual emergency to fine-tune it.

- Embrace redundancy: Use both on-premise and cloud solutions, and monitor all data centers for added security.

- Use support services: Leverage vendor support to resolve issues efficiently.

- Check your metrics: Regularly review performance metrics and adjust thresholds as needed for optimal results.

The future of monitoring

Infrastructure monitoring tools that are engineered with generative AI capability will be at the forefront of driving proactive monitoring for full stack environments. With the capacity to automatically process, analyze and predict events, the value that such tools provide for organizations in enhancing availability and performance of IT systems is immense. AI systems can both:

- Handle big data quite comfortably.

- Facilitate easier automation of routine tasks related to infrastructure monitoring such as collection, analysis and correlation.

In addition, the more organizations that host systems on the cloud, the more likely that monitoring-as-a-service offerings by cloud service providers will be seen as an option. Since these CSPs will have the resources and tools that are tailored to their environments, it will be a no-brainer for enterprises to outsource to them, rather than go the difficult route of investing in a variety of tools to monitor their infrastructure sprawl.

This means that organizations can focus better on their core objectives of delivering and improving their service offerings, while letting cloud providers carry out the monitoring activities.

- IT Monitoring

- Application Performance Monitoring

- APM vs Network Performance Monitoring

- Security Monitoring

- Cloud Monitoring

- Data Monitoring

- Endpoint Monitoring

- DevOps Monitoring

- IaaS Monitoring

- Windows Infrastructure Monitoring

- Active vs Passive Monitoring

- Multicloud Monitoring

- Cloud Network Monitoring

- Database Monitoring

- Infrastructure Monitoring

- IoT Monitoring

- Kubernetes Monitoring

- Network Monitoring

- Network Security Monitoring

- RED Monitoring

- Real User Monitoring

- Server Monitoring

- Service Performance Monitoring

- SNMP Monitoring

- Storage Monitoring

- Synthetic Monitoring

- Synthetic Monitoring Tools/Features

- Synthetic Monitoring vs RUM

- User Behavior Monitoring

- Website Performance Monitoring

- Log Monitoring

- Continuous Monitoring

- On-Premises Monitoring

- Monitoring vs Observability vs Telemetry

See an error or have a suggestion? Please let us know by emailing splunkblogs@cisco.com.

This posting does not necessarily represent Splunk's position, strategies or opinion.

Related Articles

About Splunk

The world’s leading organizations rely on Splunk, a Cisco company, to continuously strengthen digital resilience with our unified security and observability platform, powered by industry-leading AI.

Our customers trust Splunk’s award-winning security and observability solutions to secure and improve the reliability of their complex digital environments, at any scale.