Three Ways to Get Uptime in No Time

It’s a reality we all have to face: outages are bound to happen. Even as we move toward a fully automated world (think The Jetsons), you’ll still need to monitor for and remediate issues when things inevitably break. And downtime can do serious harm: IDC reports that the average cost of a critical application failure is $500,000 to $1 million per hour. Watch the webinar linked at the end of this post for more details.

Here are three ways to maximize your uptime, protect your business and keep your customers satisfied.

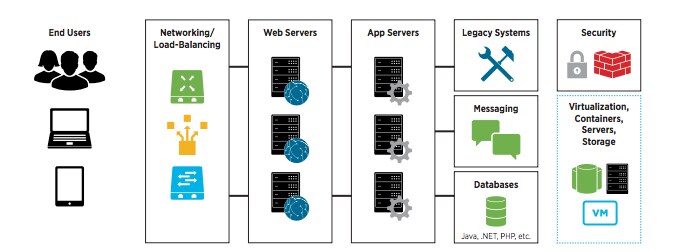

1. Use machine data to quickly find the root cause of the issue. Problems that cause downtime aren’t necessarily the result of bad code. Reports show that application issues account for only 40 percent of all outages. That means there’s a significant chance your downtime is caused by an issue in the infrastructure or, worse, by human error. Metrics, logs and data from other tools are all valuable for monitoring, but log files are usually the most authoritative source of data for detailed root-cause analysis and troubleshooting. That’s because problems are typically recorded in logs as they occur, and logs can be designed to provide detailed context on the source of a problem.

2. Focus on your customers, and measure what matters to them. Most of us have hit the spinning wheel of death and given up on a service or application as a result. To spare your users that frustration, you’ll need to monitor and analyze uptime, availability and response time to ensure the performance of your business-critical services. Best practice is to collect, correlate and analyze your machine data to gain additional insight into your customer-facing metrics. For example, you’d monitor and analyze application performance alongside the throughput of the underlying infrastructure. All of that information can be gleaned by mining your machine data.

3. Work to move from reactive to proactive. Fires happen, and it’s important to put them out before they grow too big to handle. But wouldn’t it be better to predict a fire before it starts? This is no longer Jetsons-level technology. Leading IT organizations are using machine learning to raise alerts before outages occur. Zillow is a great example: the company uses real-time operational insights and alerting to maintain the service quality of its website. By applying tailored algorithms to your data set, you can get even more insight from your machine data and begin to troubleshoot proactively.
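
All three steps start from the same raw material: machine data. As a rough illustration only (this is not how Splunk implements any of it), here is a minimal Python sketch that assumes each log event has already been parsed into a status code and a response time. The `Request` type, the rule that a 5xx status counts as a failure, the sample numbers and the 3-standard-deviation threshold are all hypothetical. The sketch computes two customer-facing metrics (availability and nearest-rank p95 latency) and flags a latency reading that jumps well above its recent baseline. That last check is a simple statistical stand-in for the machine-learning alerting described in step 3.

```python
import math
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Request:
    """One parsed log event (hypothetical fields, not a Splunk schema)."""
    status: int        # HTTP status code returned to the customer
    latency_ms: float  # response time in milliseconds


def availability(requests: list[Request]) -> float:
    """Fraction of requests that did not fail with a 5xx server error."""
    if not requests:
        return 1.0  # assumption: no traffic counts as fully available
    ok = sum(1 for r in requests if r.status < 500)
    return ok / len(requests)


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile, e.g. pct=95 for p95 response time."""
    if not values:
        raise ValueError("percentile of an empty list")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def is_anomalous(history: list[float], current: float, k: float = 3.0) -> bool:
    """Flag `current` if it exceeds the baseline mean by more than k std devs."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu, sigma = mean(history), pstdev(history)
    return current > mu + k * sigma


# Hypothetical one-minute window of parsed events: one request hit a 503.
WINDOW = [Request(200, 120.0), Request(200, 95.0), Request(503, 2000.0), Request(200, 110.0)]

# Hypothetical p95 latencies (ms) from the previous five minutes.
BASELINE_P95 = [180.0, 175.0, 190.0, 185.0, 178.0]

if __name__ == "__main__":
    print(availability(WINDOW))                            # 0.75
    print(percentile([r.latency_ms for r in WINDOW], 95))  # 2000.0
    print(is_anomalous(BASELINE_P95, 420.0))               # True
```

A fixed mean-and-deviation baseline ignores daily and weekly traffic patterns, so a real deployment would more likely use a rolling window or a seasonality-aware model. The point is only that the same parsed events feed the customer-facing metrics and the early warning.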

Even though downtime is inevitable, it doesn’t have to be such a painful and costly experience. By correlating, analyzing and visualizing the machine data from across your organization, you’ll have uptime in no time.

Want to learn more about how to maximize your uptime? Watch our webinar, “Downtime Got You Down? Getting Started With Splunk for Application Management.”

Thanks,

Keegan Dubbs


About Splunk

The Splunk platform removes the barriers between data and action, empowering observability, IT and security teams to ensure their organizations are secure, resilient and innovative.

Founded in 2003, Splunk is a global company with over 7,500 employees and availability in 21 regions around the world; Splunkers have received over 1,020 patents to date. Splunk offers an open, extensible data platform that supports shared data across any environment, so that all teams in an organization can get end-to-end visibility, with context, for every interaction and business process. Build a strong data foundation with Splunk.