Chasing a Hidden Gem: Graph Analytics with Splunk’s Machine Learning Toolkit

Do you like gems? Perfectly cut diamonds? Crystal clear structures of superior beauty? You do? Then join me on a 10-minute read about a quest for hidden gems in your data: graphs!

Be warned, it is going to be a mysterious journey into data philosophy. But you will be rewarded with artifacts that you can use to start your gemstone mining journey today. And believe it or not, if you've got the latest version of Splunk’s Machine Learning Toolkit, the gems are even closer than you think - so let’s get started!

Photo Credit: Commonwikimedia.org

Through the looking glass

If you have never heard of graphs, think of a network of connected elements. Over the last few decades, publications ranging from formal graph theory to applied (social) network science and complex system dynamics have flourished. Yet not many people are aware of the power of graphs, even though most of us benefit from their real-world applications on a daily basis, such as:

- your navigation system that gets you to your meeting on time

- your favorite online shop that provides you with interesting recommendations

- your credit card provider that successfully protects you from fraud

- And finally, you're probably reading this article on a device that’s connected to a giant graph structure - called the internet.

Long story short, graphs can be very valuable and precious - just like gemstones. So where do you find them? And what does any of this have to do with Splunk?

A Coal Mining Exercise

Almost all data in Splunk can be turned into graphs, which is something you may not have considered before. In your network traffic data, a source IP connects to a destination IP with attributes like bytes in/out, packets, ports, and other properties. Users log into a stack of interconnected systems, services, devices and applications. Transactions run from A to B to C and may describe a process that helps you analyze user journeys and business processes in general.

Interestingly, many of these relationships can be easily mined from your raw data with the use of a basic SPL pattern:

... | stats count by source destination

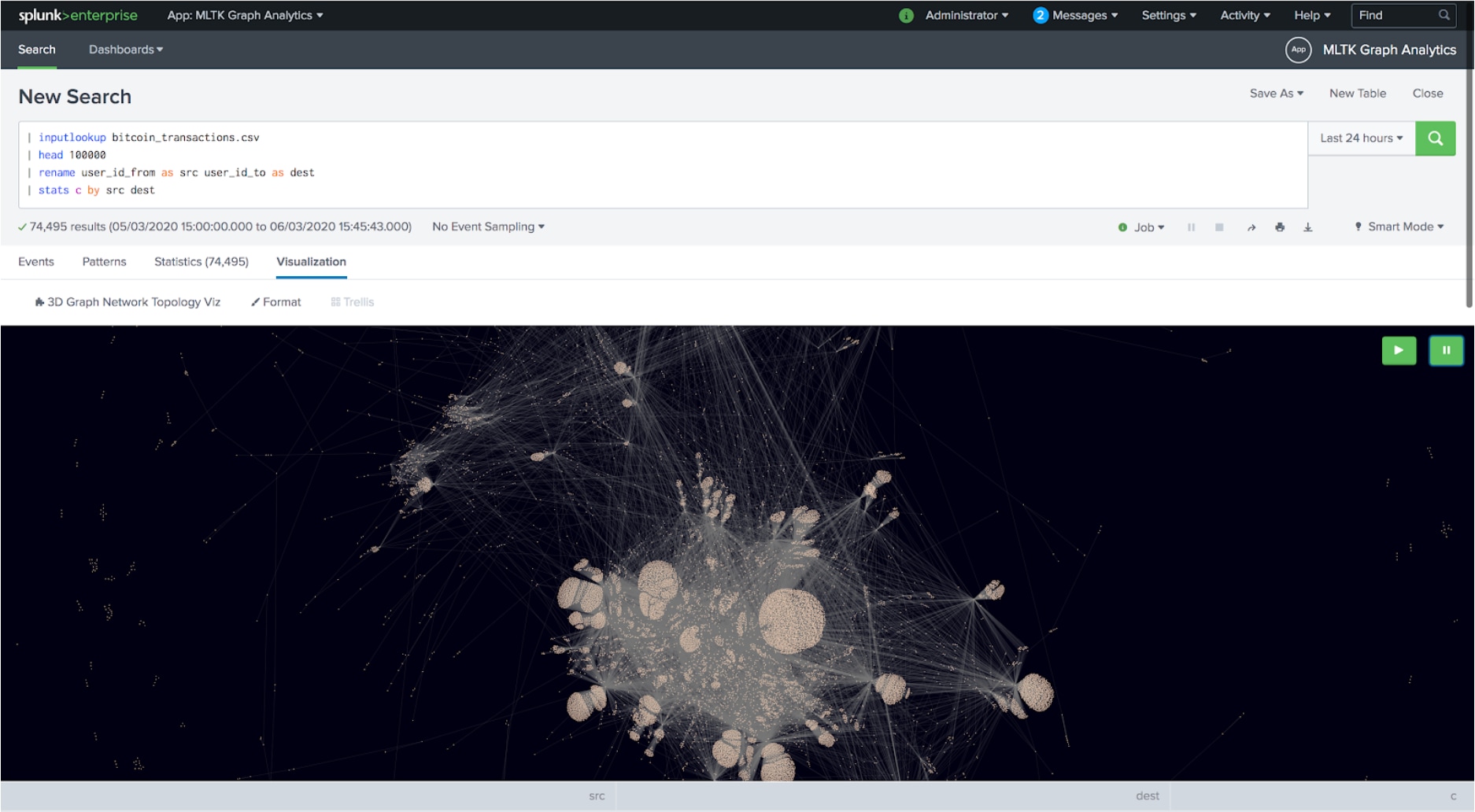

Et voilà: as you can see above, you have mined your first graph from your raw data in Splunk. With a bit of SPL you have retrieved a so-called edge list representation, which can also be nicely visualized directly in Splunk. A big thanks to Erica for her awesome custom 3D graph visualization, free to download on Splunkbase!
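To make the edge list idea concrete outside of SPL, here is a minimal Python sketch of what `stats count by source destination` produces. The `events` list and the `edge_list` helper are hypothetical and exist only for illustration; in Splunk, your search results would take their place.

```python
from collections import Counter

# Hypothetical raw events; in Splunk these would be your search results.
events = [
    {"source": "10.0.0.1", "destination": "10.0.0.2"},
    {"source": "10.0.0.1", "destination": "10.0.0.2"},
    {"source": "10.0.0.2", "destination": "10.0.0.3"},
    {"source": "10.0.0.3", "destination": "10.0.0.1"},
]


def edge_list(events):
    """Count events per (source, destination) pair, like `stats count by`."""
    counts = Counter((e["source"], e["destination"]) for e in events)
    return [
        {"source": src, "destination": dst, "count": n}
        for (src, dst), n in sorted(counts.items())
    ]


edges = edge_list(events)
for edge in edges:
    print(edge)
```

Each row is one edge of the graph, and the `count` field can serve as an edge weight.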

Now, after this little coal mining exercise you might say: “This was too easy. And I don’t see the diamond yet - just a big hairball!” And you’re right. We need to process the raw diamond even further, so let’s get cutting!

Cutting the Diamond

Typically, you want to extract certain insights from a graph - those are the real diamonds we are looking for:

- Who is the most influential actor within a network?

- Who has a bridging function between groups and is therefore more likely to connect entities with each other?

- Which communities exist in the graph and who belongs to them?

- What are the shortest pathways between them?

- What happens if nodes or links break down?

- And how do all of these properties change over time?

Each of these questions can be answered with the use of graph algorithms and may lead to particularly important results in security analytics, fraud detection or social network-related analysis. You think that this still sounds too abstract? Ok, let’s talk about algorithms.

Dance with the Algorithms

To provide you with a more tangible example, let’s have a look at a simple example dataset that ships with Splunk’s Machine Learning Toolkit: bitcoin transactions. Simply put, one user (source) transfers a value to another user (destination). All transactions build up a graph over time.

Now, what if there is an entity in the graph that is too influential? Maybe a hidden broker who exploits an unfair advantage, or a fraudulent actor who is connected to a fraud ring? To answer those questions, typical graph characteristics like centrality measures, path analysis, clustering coefficients or community detection can provide helpful insights. Bear in mind, too, that such graph-related measures can significantly improve classical machine learning approaches when used as additional features.

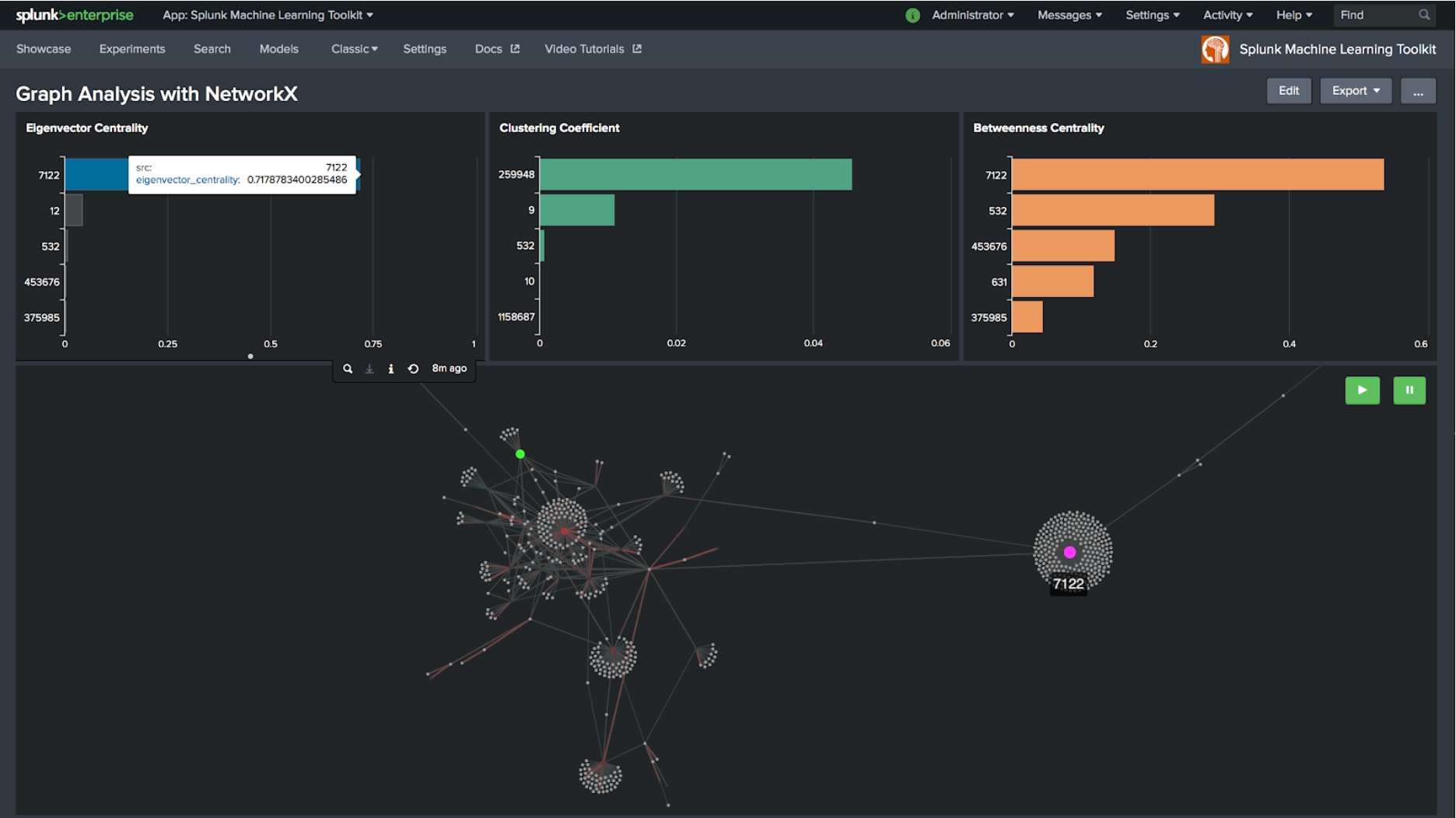

Centrality Measures

The example above shows a subset of the bitcoin transactions and highlights the top 5 nodes with a high eigenvector centrality, betweenness centrality or clustering coefficient. Node 7122, shown in pink, clearly stands out: it has a high eigenvector centrality, and it also connects the graph structure on the left with another structure off-screen on the right, which gives it the highest betweenness centrality as well. For analysts, those are the diamonds to find, as they reveal important patterns in a large dataset and lead to insights for further investigations.
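If you want to experiment with these measures yourself, the following is a minimal NetworkX sketch on a hypothetical toy graph (two triangles joined through a bridge node `x`), not the bitcoin dataset. The node names and the `top_nodes` helper are assumptions made for illustration.

```python
import networkx as nx

# Hypothetical toy graph: two triangles joined through a bridge node "x".
G = nx.Graph()
G.add_edges_from([
    ("a", "b"), ("b", "c"), ("a", "c"),   # left triangle
    ("d", "e"), ("e", "f"), ("d", "f"),   # right triangle
    ("c", "x"), ("x", "d"),               # bridge between the two
])

# Three node-level measures, each returned as a {node: score} dict.
eigenvector = nx.eigenvector_centrality(G, max_iter=1000)
betweenness = nx.betweenness_centrality(G)
clustering = nx.clustering(G)


def top_nodes(scores, k=3):
    """Return the k nodes with the highest score."""
    return sorted(scores, key=scores.get, reverse=True)[:k]


for name, scores in [
    ("eigenvector", eigenvector),
    ("betweenness", betweenness),
    ("clustering", clustering),
]:
    print(name, top_nodes(scores))
```

In this toy graph the bridge node gets the highest betweenness centrality because every shortest path between the two triangles runs through it, while its clustering coefficient is zero because its two neighbors are not connected to each other.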

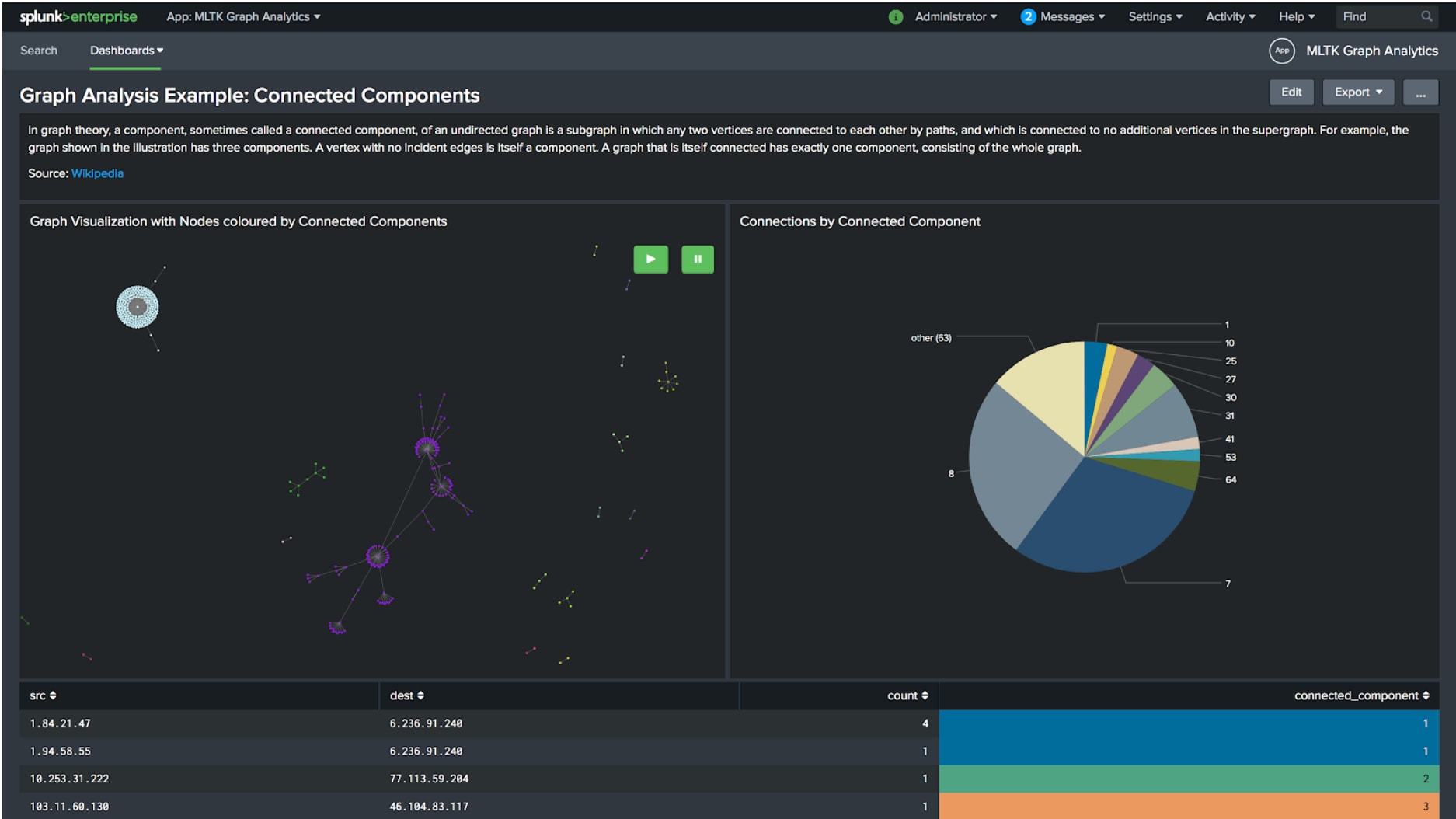

Connected Components and Communities

A follow-up question might be: are there separate parts in the graph? They usually indicate isolated groups of entities which are only connected within their group but not to other groups in the graph. With the help of the connected components algorithm, those groups can be detected and labeled automatically. This technique was applied in a security use case presented by Siemens at last year’s .conf19 to calculate anomaly scores within the context of connected systems and entities.
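As a rough illustration of how such labeling can work, here is a minimal NetworkX sketch on a hypothetical toy graph. The node names and the `component_of` mapping are assumptions for this example; it is not the implementation used in the Siemens talk.

```python
import networkx as nx

# Hypothetical toy graph with three separate parts.
G = nx.Graph()
G.add_edges_from([("a", "b"), ("b", "c"), ("d", "e")])
G.add_node("f")  # an isolated node forms its own component

# Sort components largest first (ties broken by smallest node name)
# so that the numeric labels are stable between runs.
components = sorted(
    nx.connected_components(G),
    key=lambda comp: (-len(comp), min(comp)),
)

# Map every node to the label of the component it belongs to.
component_of = {
    node: label
    for label, comp in enumerate(components)
    for node in comp
}

for node in sorted(component_of):
    print(node, component_of[node])
```

The resulting label can be attached to each entity as an additional field and used for grouping or as a feature in later analysis.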

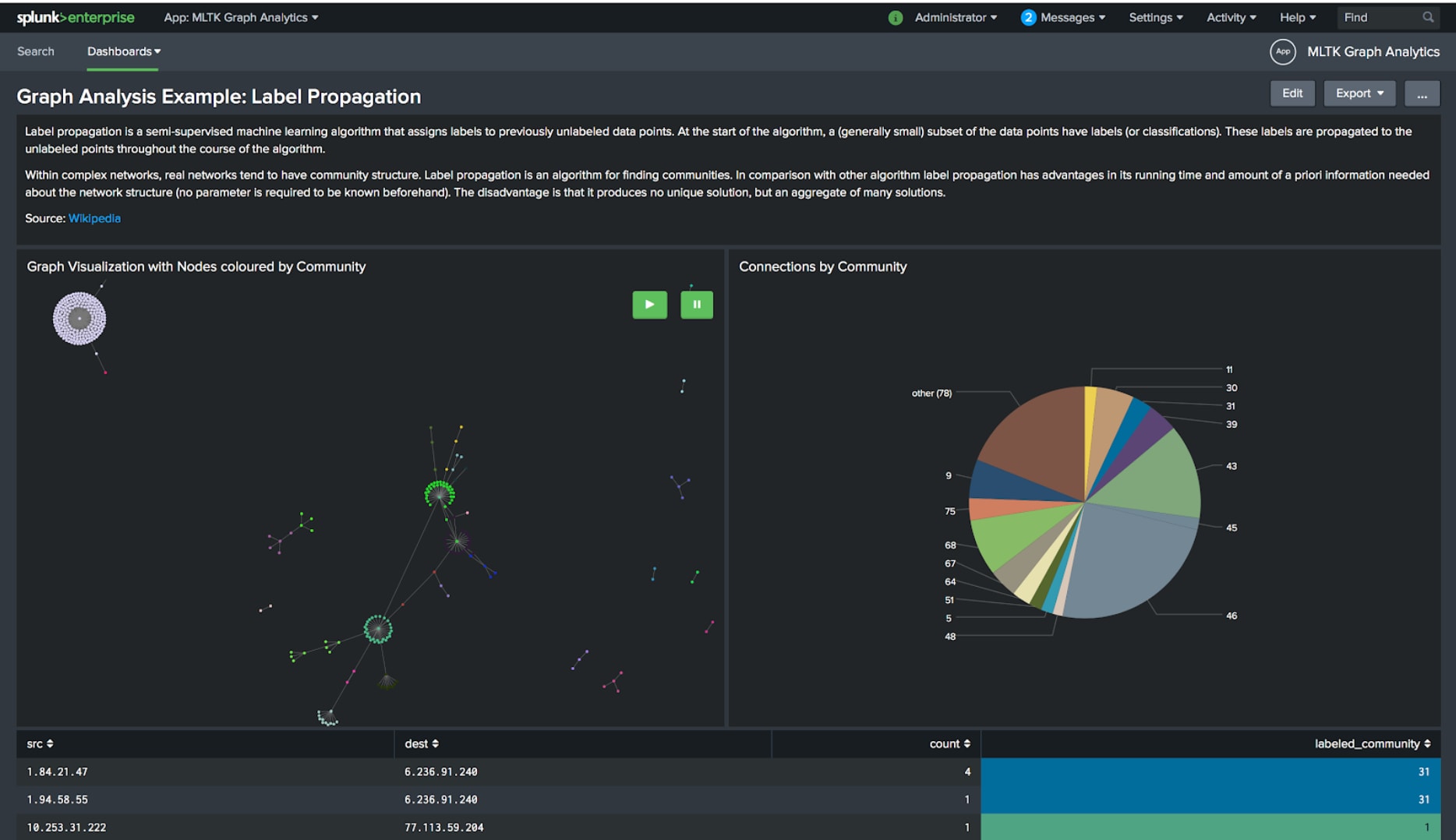

Another interesting approach is label propagation, a semi-supervised machine learning algorithm. It generates labels to identify communities in a graph and provides an analyst with a structure that can be further processed and analyzed.
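One way to try this out is NetworkX's `label_propagation_communities`, sketched below on a hypothetical toy graph of two tightly knit groups joined by a single link. Note that this is NetworkX's semi-synchronous variant, which needs no pre-labeled nodes; it may differ from the variant used for the screenshot above, and the communities you get depend on the graph structure.

```python
from itertools import combinations

import networkx as nx
from networkx.algorithms.community import label_propagation_communities

# Hypothetical toy graph: two fully connected groups joined by one edge.
group_a = ["a1", "a2", "a3", "a4"]
group_b = ["b1", "b2", "b3", "b4"]

G = nx.Graph()
G.add_edges_from(combinations(group_a, 2))
G.add_edges_from(combinations(group_b, 2))
G.add_edge("a1", "b1")  # the single link between the groups

# Each community is a set of nodes; together they cover every node once.
communities = [set(c) for c in label_propagation_communities(G)]

# Map every node to the index of its community.
community_of = {
    node: label
    for label, members in enumerate(communities)
    for node in members
}

for label, members in enumerate(communities):
    print(label, sorted(members))
```

Treat the resulting labels as a starting point for investigation rather than as ground truth, since different community detection algorithms can split the same graph differently.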

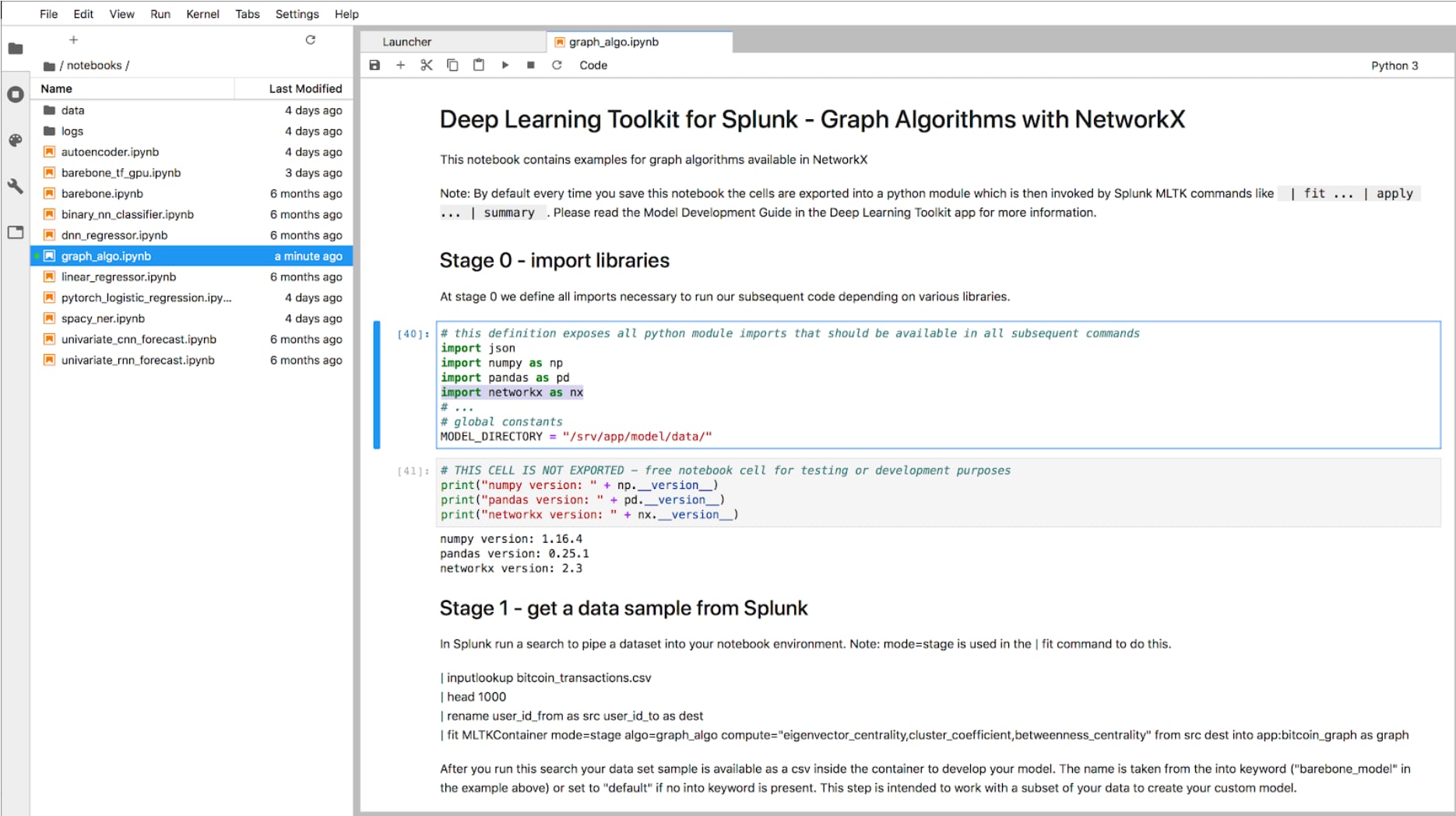

Start Mining your Gems

Now you should hopefully start seeing the hidden gems in your data and get excited about this new type of analytics that you can apply to your data in Splunk. You're probably wondering where you can find these algorithms and functions. The good news is that with the latest release 2.0 of the Python for Scientific Computing Package, you have NetworkX, a library for graph analysis, at your fingertips, and you can simply use it in Splunk! All you need to do is wrap your algorithms of choice with the MLSPL API to make them available in the Machine Learning Toolkit, and you are ready to go. To speed things up, I used the Jupyter Notebook interface provided in the Deep Learning Toolkit to rapidly sketch out the algorithms mentioned above and port them back into MLTK within a few minutes. For your convenience, you will find the example dashboards and 3 graph algorithms readily packaged in the 3D graph visualization app on Splunkbase.

What about Scale?

Lastly, you could run into a serious problem with graphs: scale.

When graphs grow bigger, the computational complexity can become massive. In such cases, you typically run into one of 3 scenarios:

- You want to apply computationally very demanding algorithms, so compute is your limiting factor.

- Your graph is so big that you need to distribute it across multiple nodes: memory is your limiting factor.

- A combination of both: you need to distribute compute and memory.

For the first scenario, you might solve the problem by accelerating computations, e.g. by parallelization on GPUs with frameworks like rapids.ai. If that’s the case, check out Anthony’s blog post on how to build a GPU-accelerated container with rapids.ai for the Deep Learning Toolkit. For the other two cases, you would probably need more advanced distributed computing architectures like Spark’s GraphX, connected to Splunk as presented in this .conf17 talk by Raanan and Andrew.

Finally, if you have the requirement to push on with your graph, you will probably choose a graph store or graph database like Neo4j that you can connect back to Splunk, e.g. using the neo4s app from Splunkbase. With all of these possibilities in mind, I hope you have enough hints to get started with a new type of analytics use case that you can now tackle with Splunk.

Happy Splunking,

Philipp
