Splunk and the WEF – Working Together to Unlock the Potential of AI

Use of AI can be critical when developing systems to support social good, with some inspiring examples using Splunk in healthcare and higher education organisations. According to our State of Dark Data report, however, only 15% of organisations say they are using AI solutions today, a shortfall attributed to a lack of skills. So how can we help organisations unlock the potential of AI?

Here at Splunk we are immensely proud of our partnership with the World Economic Forum (WEF), and it is a pleasure to announce the release of the AI Procurement in a Box toolkit, the result of our collaborative project work. This toolkit is designed to address the question above, providing a range of considerations and questions for organisations who are planning on utilising AI capabilities.

In this blog I’d like to summarise some of our high-level considerations for anyone thinking about designing, building or procuring an AI-enabled capability, linking them directly to sections of the AI Procurement in a Box toolkit.

AI Procurement in a Box

Before we dive into our considerations I’d like to briefly describe the content of the AI Procurement in a Box toolkit. There are three key documents in the toolkit, namely:

- The toolkit project overview, which describes the background to the project and the intended use for the toolkit;

- The guidelines, which outline some of the key considerations when procuring AI-enabled solutions;

- The workbook, which contains a risk assessment, procurement checklist, some example requirements, some kick-starter guides for running an AI procurement and a set of case studies on AI procurements.

In this blog we will mostly be referring to the AI Procurement in a Box workbook.

Setting up for Success

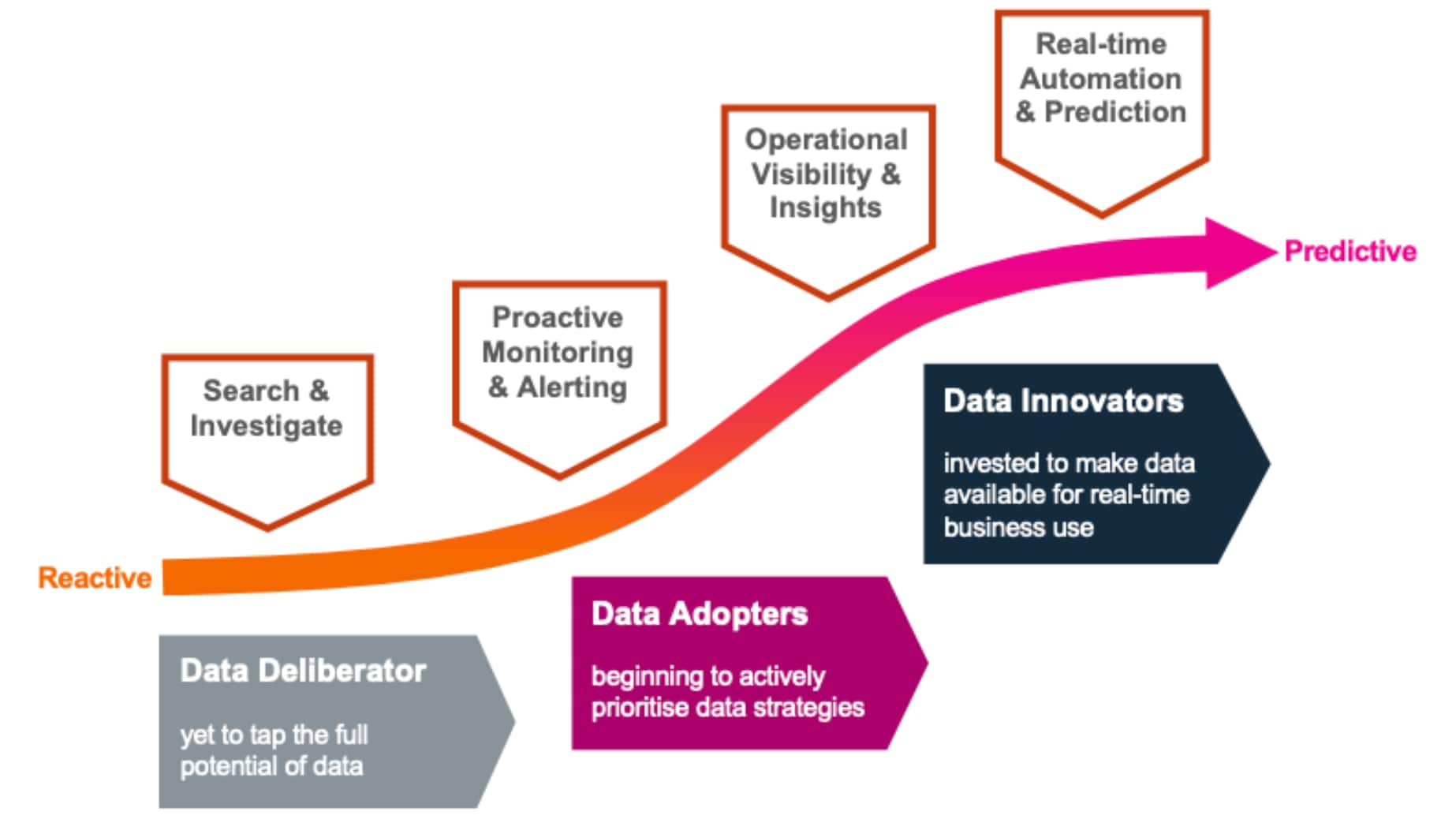

When our customers speak to us about AI or, more accurately, machine learning (ML), they are usually referring to proactive analytics and/or process automation. Successful customers tend to follow a maturity curve as they adopt this technology, moving from reactive to proactive analytics and decision making.

Our recent research on what your data is really worth identified three levels of maturity with respect to data:

- Data deliberators, organisations that have yet to discover the full potential of data;

- Data adopters, organisations who are actively developing and evolving data strategies; and

- Data innovators, organisations who place a strong strategic emphasis on data and its business value.

In our experience widespread data ingestion sets the stage for the successful delivery of early AI-enabled use cases, which can then trigger a period of sustained growth, wider adoption, and greater innovation.

Regardless of where you sit on this maturity curve, however, successful projects often start with a risk assessment.

Risk Assessments

It may not be particularly exhilarating, but a risk assessment is a great place to start with an AI project. AI-based capabilities are often less transparent in how they process data and, as a consequence, can produce outputs that are difficult to explain or interpret. You should therefore first decide how much visibility you need into the system’s data processing. The second key consideration is the data that you want to process and how much control you need over its management and curation. Both considerations are presented in the risk matrix on page 8 of the workbook.

To summarise, if you can tolerate lower visibility and control, you can consider using SaaS or PaaS solutions, which may have a quicker time to value. Conversely, if you need complete control and visibility over the solution, it is likely that it will need to be bespoke and will therefore have a much longer delivery cycle.

For example, suppose you were looking to procure a solution to help manage the workloads of your IT infrastructure, one that could auto-balance processes and optimise performance. You may end up in the bottom right of the matrix looking at a SaaS solution, given that the solution will not process any sensitive data assets and the end users will not need to fully understand its logic.
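The rule of thumb above can be written down as a small decision helper. This is only a minimal sketch: the `Need` scale and the `suggest_delivery_model` function are hypothetical names invented for illustration, and the two-level scale is a simplification rather than the layout of the workbook's risk matrix. It covers only the two corner cases described in this blog and leaves mixed cases for a fuller assessment.

```python
from enum import Enum


class Need(Enum):
    """Illustrative two-level scale; an assumption, not taken from the workbook."""
    LOW = "low"
    HIGH = "high"


def suggest_delivery_model(visibility: Need, control: Need) -> str:
    """Map visibility and control needs to a broad delivery option.

    Only the two corner cases described in the text are decided here.
    Mixed cases are deliberately handed back for a fuller assessment.
    """
    if visibility is Need.LOW and control is Need.LOW:
        # Lower visibility and control can be tolerated.
        return "SaaS or PaaS"
    if visibility is Need.HIGH and control is Need.HIGH:
        # Complete visibility and control are required.
        return "bespoke"
    return "review against the workbook risk matrix"


# The IT workload-balancing example: no sensitive data is processed and
# end users do not need to understand the logic of the solution.
print(suggest_delivery_model(Need.LOW, Need.LOW))
```

A helper like this is no substitute for working through the questions in the workbook, but writing the rule down can make it easier to discuss where a given project sits and why.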

There is a great set of questions in the workbook to help you make a risk assessment. Here we will summarise some of the key aspects: end users of the system, the data assets that will be used by the system, your organisational data literacy and any potential partnerships that could aid the development of the system.

End Users

Understanding the needs of system users, how they react to the solution, and their skill level is key. The end-user ‘profile’ will determine the quality of system outputs and how fast new techniques are adopted and/or accepted.

The end-user ‘profile’ should consider their competency in understanding mathematical principles (e.g. probability) and how they are likely to react emotionally to the processing and output of the system (e.g. how do they respond to uncovering a previously unknown risk?). This will help to determine the type and granularity of outputs (e.g. visual charts or key metrics) that should be selected, and how quickly new techniques can be adopted and accepted.

These considerations link directly to the Consent and Control elements of the AI Procurement in a Box workbook on page 21, and you should examine them carefully if you want to understand what you might need to support user adoption.

Data Assets

Finding and understanding the data sets your organisation holds, and how they can be accessed, combined and processed in accordance with the law and corporate culture, will help you determine project scope: what can be achieved with the data and with what controls. According to recent research, 97% of public sector agencies agree that they must improve their ability to ingest, index and cross-correlate disparate data sets to optimise public policy outcomes.

There is a wide variety of areas to consider with respect to your data assets, and pages 20, 21, 22 and 23 of the workbook will help uncover more detailed considerations on Purpose, Consent and Control, Privacy and Cyber Security, and Ethics that can help shape your policies and strategies in this area.

Data Literacy

AI technologies can be complex, so to be successful it’s critical that the organisation’s leadership and operations teams are ‘data literate’. This does not mean becoming a data scientist, but it does mean understanding the underlying mathematical principles (e.g. probability, accuracy and sampling) and gaining an appreciation of the different benefits and limitations of the main ML techniques. We try to work collaboratively with customers to enhance their internal knowledge of and facility with AI, and we understand that innovation and education go hand in hand. Without a proper foundation, users will not be able to capitalise on the advances in automation and decision-making capability provided by AI.

You should think about the Explainability and Skills elements of the workbook, on pages 24 and 29 respectively, to help guide your strategy around data literacy.

Collaborations and Partnerships

It is essential that the development of AI-based solutions is collaborative. As mentioned above, we work closely with our customers to get their feedback on the AI analytics we develop, including running a Machine Learning advisory programme to help co-create capabilities with our customers.

We rely on our customers to help us assess if our products are easy to understand, fit for purpose, readily configurable, and lead to learning and efficiency. Our pre-built, AI-enabled products come with guides to help users get started quickly and help them interpret their results to bring data to every question, every decision and every action.

These are many of the areas that we considered when helping the WEF draft the sample requirements on Explainability, Interoperability, Lifecycle Management and Skills (on pages 24, 26, 28 and 29 of the workbook).

Summary

If you are looking to invest in AI solutions, the AI Procurement in a Box toolkit can help you assess your organisation’s present AI maturity and how data can help you identify effective strategies. In particular, you should conduct a risk assessment against the visibility and control you need for the solution. Alongside the risk assessment, you should also pay close attention to end-user needs, the data assets that will be used by the solution, your organisational data literacy and any potential partnerships that you could draw on to support the development of the solution.

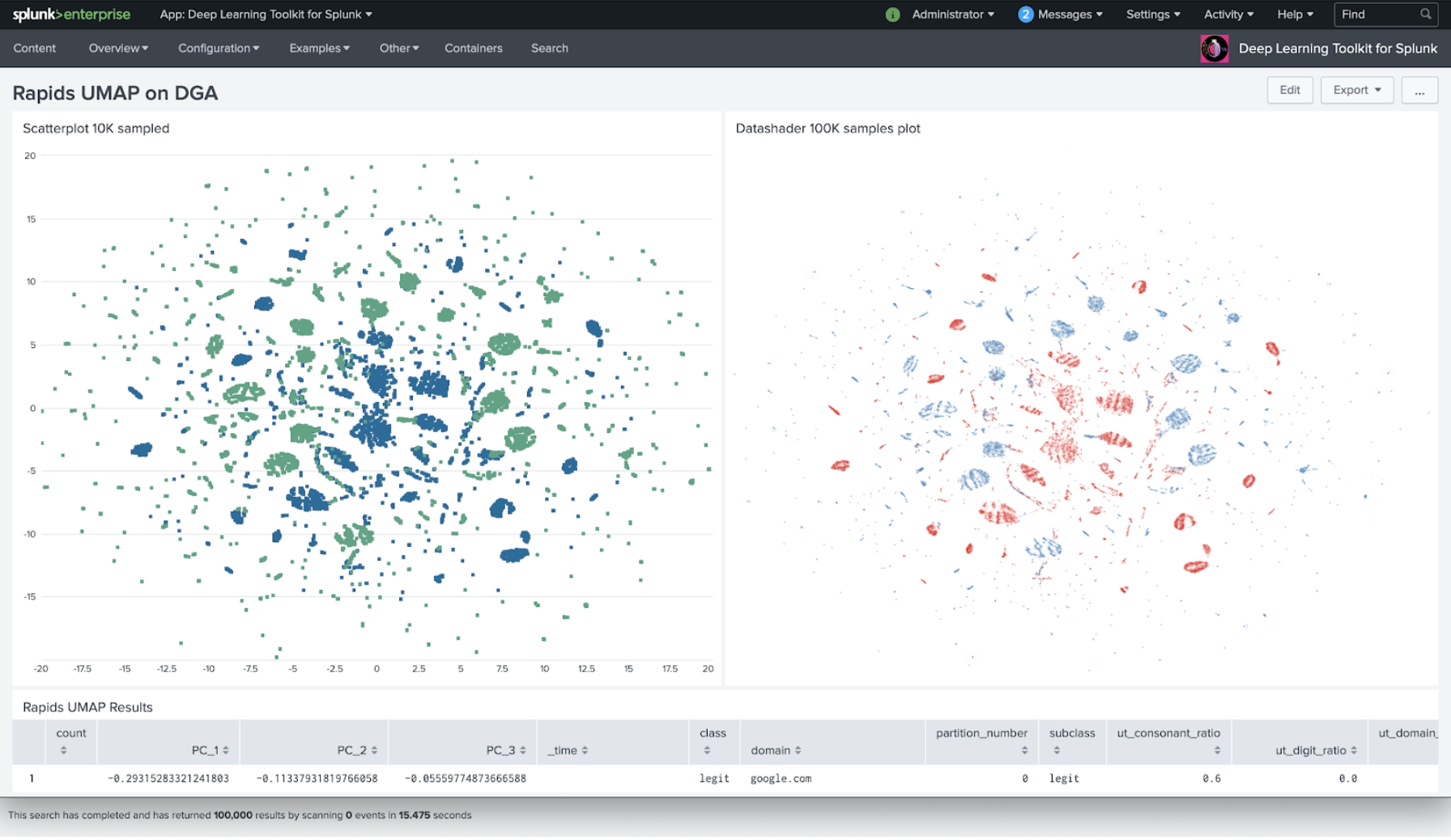

Many thanks to Mark Woods, Gordon Morrison, James Hodge and Lenny Stein for their help drafting this blog - and also to Philipp Drieger for his awesome visualisation using Uniform Manifold Approximation and Projection analysis on malicious and legitimate domain names.
