Online Sales Are Up! Ensure Your E-Commerce Platform is Not Being Used for Fraud

Even in tough economic times, e-commerce is up 25% since the beginning of March [1]. But fraud has increased as well: according to Malwarebytes, online credit card skimming increased by 26% in March alone [2].

In our April “Staff Picks for Splunk Security Reading” blog post, I referenced a story about an e-commerce site getting hacked with a “virtual card skimmer” (thanks to Matthew Joseff for sharing this with me). The author of the story ends the analysis by urging customers to protect themselves with client-side (consumer-installed) malware detection and prevention. I have nothing against this approach, but not all antivirus/anti-malware (AV/AM) solutions will protect against this type of attack, and the e-commerce site has a responsibility to protect its systems and its customers’ data. So let’s look at three ways that e-commerce sites can detect these kinds of attacks and protect their customers from data theft.

A quick review of what happened as posted by Malwarebytes:

- 1. The bad guys got access to files on the victim website (the speculated entry point is out-of-date Magento e-commerce software)

- 2. The home page was altered (obfuscated JavaScript was added) to load a hidden PNG image

- 3. This PNG image included malicious JavaScript code

- 4. CyberSource is used as the merchant processor, and users are legitimately directed to a CyberSource checkout page to enter credit card information

- 5. The JavaScript in the PNG file created an iframe and overlaid it on the checkout page (over the existing CyberSource checkout iframe)

- 6. The malicious checkout page loaded from “deskofhelp[.]com” (registered to a Yandex[.]ru email address)

- 7. Users entered their credit card data into the malicious iframe, received a session timeout message, and were redirected to the real CyberSource checkout iframe

- 8. The bad guys stole payment data:

    - First and last name

    - Billing address

    - Telephone number

    - Credit card number

    - Credit card expiry date

    - Credit card CVV

It is important to note that the malicious iframe is rendered only in the user’s browser, from a malicious remote site. Nothing in the merchant’s web logs directly shows that the customer’s browser made a request to this remote site.

Let’s look at three ways that this attack could have been detected using Splunk. Keep in mind that anomaly detection is a core part of fraud detection:

1. OS Monitoring

The first place to start is with OS logs. File changes, additions, and deletions are easy to log in most operating systems. Native, open source, and paid solutions can all be used to monitor for changes. High-value targets like public-facing servers should have this monitoring enabled. This would have detected the malicious PNG file added to the system and the changes made to the home page file. Both events should have been a red flag to the security, DevOps, and/or content management teams, as they would be unexpected.

Splunk has Technology Add-ons (TAs) freely available on Splunkbase to make monitoring and alerting on these events easy, and there are many Splunk and third-party blog posts covering how to audit file systems in Windows and Linux.
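As a rough illustration of what file-change monitoring boils down to, the Python sketch below hashes every file under a web root and compares the result with a stored baseline. The function names `snapshot` and `diff_snapshots` are hypothetical, and this is not how any particular add-on works; in practice the OS audit facilities or the add-ons mentioned above would do this job and forward the events to Splunk.

```python
import hashlib
from pathlib import Path


def snapshot(root):
    """Map each file under root (by relative path) to the SHA-256 of its contents."""
    root = Path(root)
    return {
        str(p.relative_to(root)): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def diff_snapshots(baseline, current):
    """Return the files added, removed, or modified since the baseline snapshot."""
    added = sorted(set(current) - set(baseline))
    removed = sorted(set(baseline) - set(current))
    modified = sorted(
        name
        for name in set(baseline) & set(current)
        if baseline[name] != current[name]
    )
    return {"added": added, "removed": removed, "modified": modified}
```

Run against this attack, a comparison like this would report the new PNG under `added` and the home page under `modified`, which are exactly the two events that should raise a flag.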

2. Website Metrics

Another area of detection is website metrics. Average checkout time and cart abandonment should be known metrics; they are usually of prime concern to marketing and site reliability teams. In this attack, a customer either took about twice as long to finish the checkout process or quit after the timeout message. Either way, the metrics for these events should have shown a large increase. There is a website metrics app on Splunkbase that can provide some good details, but some of these metrics can be calculated easily with simple searches.

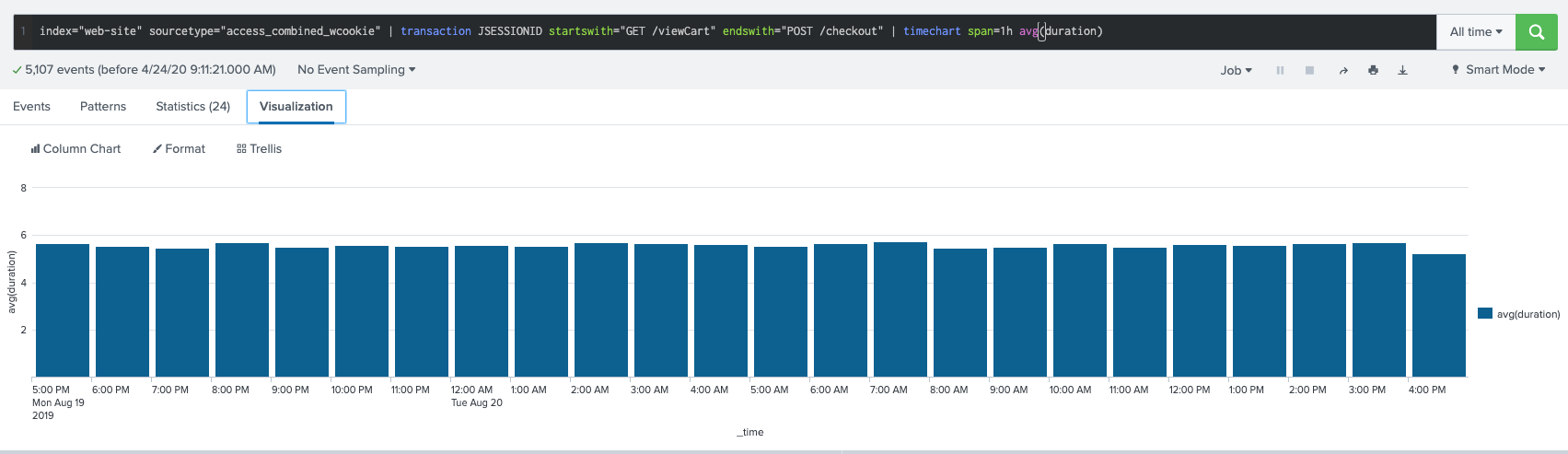

For example, using Apache access logs we can see other ways to find suspicious activity. Monitoring checkout time is easy if you know the page before and the page after and calculate the time difference between the two. I took some liberty with sample data, as I have no real payment processor data, but by measuring the time between the cart view and the POST to the checkout page, I can calculate the time between the two main events and show an hourly average:

index="web-site" sourcetype="access_combined_wcookie" | transaction JSESSIONID startswith="GET /viewCart" endswith="POST /checkout" | timechart span=1h avg(duration)

My data shows about a 5.5 second average for my “checkout process”. The checkout time for a real e-commerce site may be 30 seconds or longer, but the fake error page and the second checkout process would greatly increase the average checkout time.

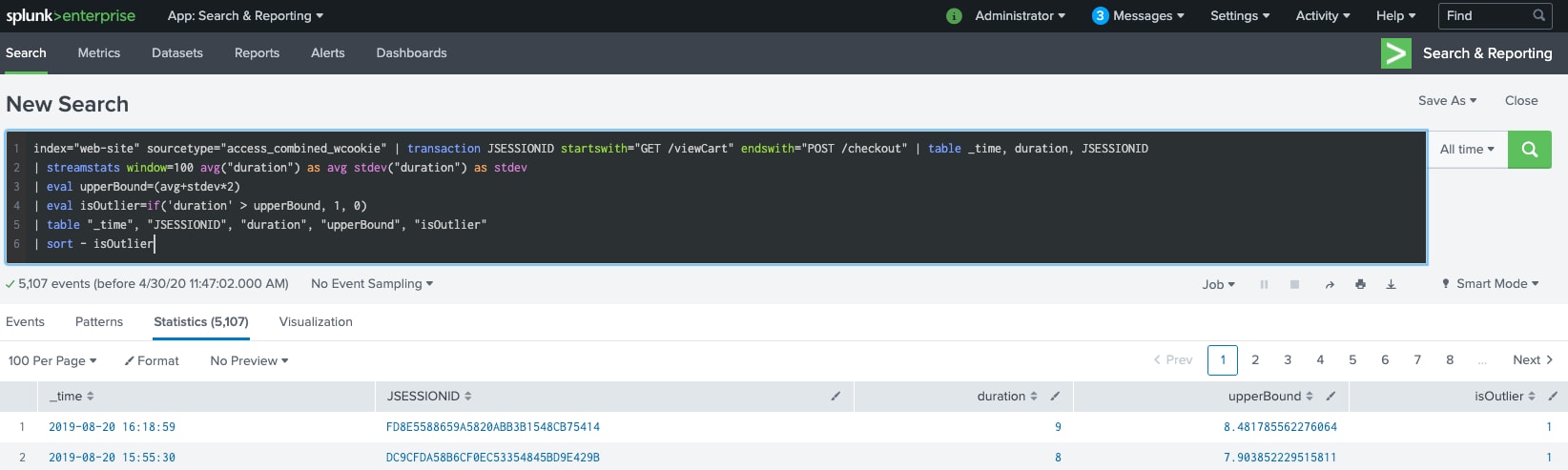
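For readers who want to see the pairing logic spelled out, here is a minimal Python sketch of the same idea: match each cart view to the next checkout POST in the same session, then average the durations per hour. The tuple layout and the function names are assumptions made for illustration, and the matching is a simplification that only approximates what `transaction` does.

```python
from collections import defaultdict


def checkout_durations(events):
    """events: iterable of (epoch_seconds, session_id, request_line) in time order.

    Returns (start_time, session_id, duration_seconds) for every cart view
    that is followed by a checkout POST in the same session.
    """
    cart_seen = {}  # session_id -> time of the most recent unmatched cart view
    results = []
    for ts, session, request in events:
        if request.startswith("GET /viewCart"):
            cart_seen[session] = ts
        elif request.startswith("POST /checkout") and session in cart_seen:
            start = cart_seen.pop(session)
            results.append((start, session, ts - start))
    return results


def hourly_average(durations):
    """Average duration per hour bucket, keyed by the epoch second that starts the hour."""
    buckets = defaultdict(list)
    for start, _session, duration in durations:
        buckets[start - start % 3600].append(duration)
    return {hour: sum(vals) / len(vals) for hour, vals in sorted(buckets.items())}
```

A checkout POST with no preceding cart view in the same session is ignored here rather than counted as a zero-length checkout.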

In addition, we can use some of Splunk’s math capabilities to find any transaction that took longer than the average checkout time plus two standard deviations. The search below calculates the average and standard deviation over a sliding window of 100 transactions, adds a multiple of the standard deviation to the average to get an upper bound, and compares each actual transaction time to that bound:

index="web-site" sourcetype="access_combined_wcookie" | transaction JSESSIONID startswith="GET /viewCart" endswith="POST /checkout" | table _time, duration, JSESSIONID

| streamstats window=100 avg("duration") as avg stdev("duration") as stdev

| eval upperBound=(avg+stdev*2)

| eval isOutlier=if('duration' > upperBound, 1, 0)

| table "_time", "JSESSIONID", "duration", "upperBound", "isOutlier"

| sort - isOutlier

With a few clicks in the GUI, this search could be scheduled to run every few minutes and to trigger an alert when the outlier condition is met. Splunk supports many different alert actions, including (but not limited to) email, scripts, and webhooks.

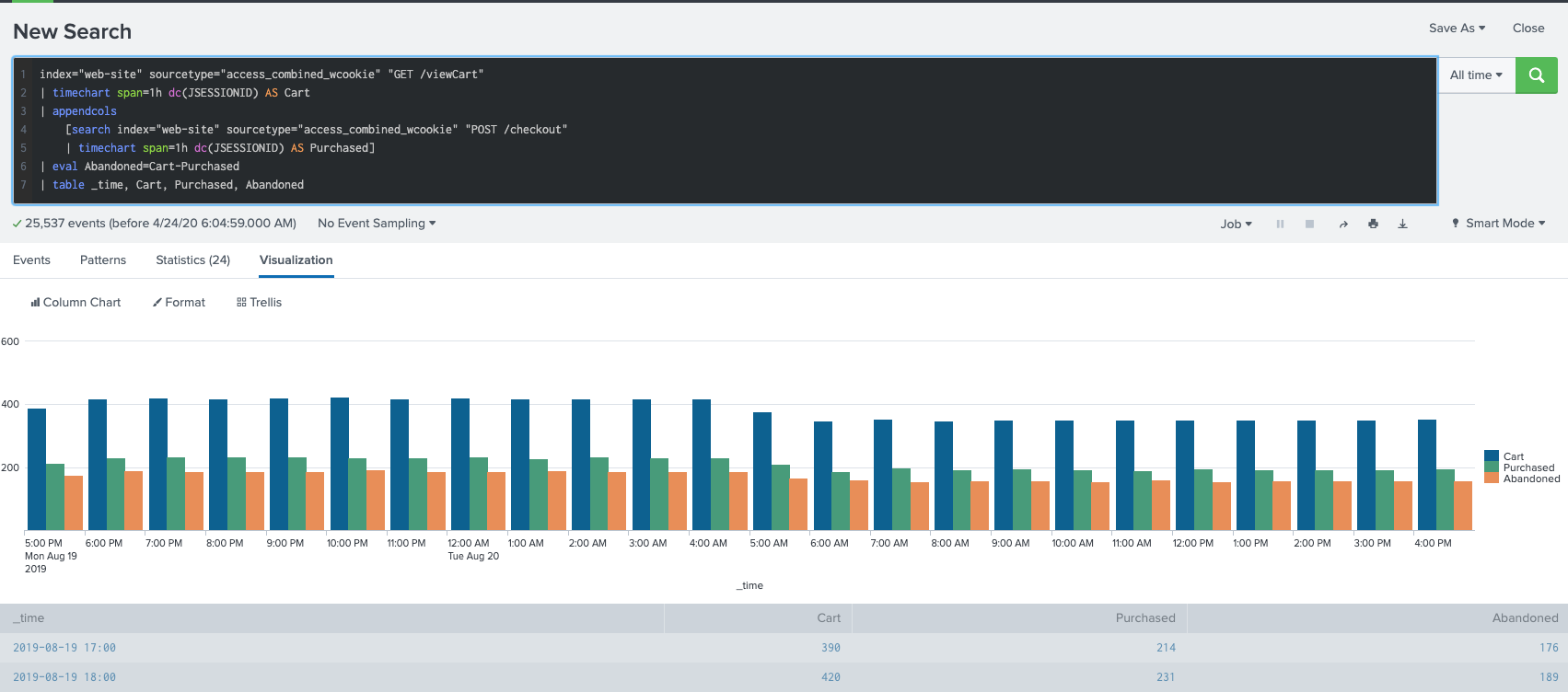
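The rolling threshold in the outlier search can also be sketched in a few lines of Python, which may make the arithmetic easier to follow. The function name and the tuple it returns are illustrative assumptions. One deliberate difference: this sketch excludes the current value from its own baseline, whereas `streamstats` includes the current event in the window unless told otherwise.

```python
from collections import deque
from statistics import mean, stdev


def flag_outliers(durations, window=100, multiplier=2.0):
    """Flag each duration that exceeds mean + multiplier * stdev of the
    preceding `window` durations (the current value is excluded)."""
    history = deque(maxlen=window)
    flagged = []
    for value in durations:
        if len(history) >= 2:  # the sample standard deviation needs two values
            upper_bound = mean(history) + multiplier * stdev(history)
            flagged.append((value, upper_bound, value > upper_bound))
        else:
            flagged.append((value, None, False))
        history.append(value)
    return flagged
```

Excluding the current value keeps a single very slow checkout from inflating the bound it is being compared against.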

Also, the fake error page should hurt the customer experience and lead to an increased rate of cart abandonment. Subtracting successful purchases from total cart views shows us our cart abandonment. Here is one way to display this data, but there are many more depending on what your logs look like:

index="web-site" sourcetype="access_combined_wcookie" "GET /viewCart"

| timechart span=1h dc(JSESSIONID) AS Cart

| appendcols

[search index="web-site" sourcetype="access_combined_wcookie" "POST /checkout"

| timechart span=1h dc(JSESSIONID) AS Purchased]

| eval Abandoned=Cart-Purchased

| table _time, Cart, Purchased, Abandoned
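The same subtraction can be expressed as a short Python sketch, shown here only to make the counting explicit. It assumes events arrive as `(epoch_seconds, session_id, request_line)` tuples, which is a hypothetical layout, and like the search above it counts distinct sessions per hour rather than following each individual cart to its outcome.

```python
from collections import defaultdict


def cart_abandonment(events, span=3600):
    """For each time bucket, count distinct sessions that viewed the cart and
    distinct sessions that posted to checkout, then subtract the two."""
    carts = defaultdict(set)
    purchases = defaultdict(set)
    for ts, session, request in events:
        bucket = ts - ts % span
        if request.startswith("GET /viewCart"):
            carts[bucket].add(session)
        elif request.startswith("POST /checkout"):
            purchases[bucket].add(session)
    rows = []
    for bucket in sorted(set(carts) | set(purchases)):
        cart, purchased = len(carts[bucket]), len(purchases[bucket])
        rows.append({"time": bucket, "cart": cart, "purchased": purchased,
                     "abandoned": cart - purchased})
    return rows
```

Because a purchase is counted in the hour of the POST, a checkout that straddles an hour boundary can make one bucket look slightly worse and the next slightly better.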

Counts of resources accessed are another common metric. They allow marketing teams and others to determine whether pages are hard to find or not interesting, or whether images may need to be cached for more frequent access. The malicious PNG file would have appeared from nowhere, and its high access rate should have made someone ask why. Even though the file was hidden on the page, the HTTP request for it would still be logged.

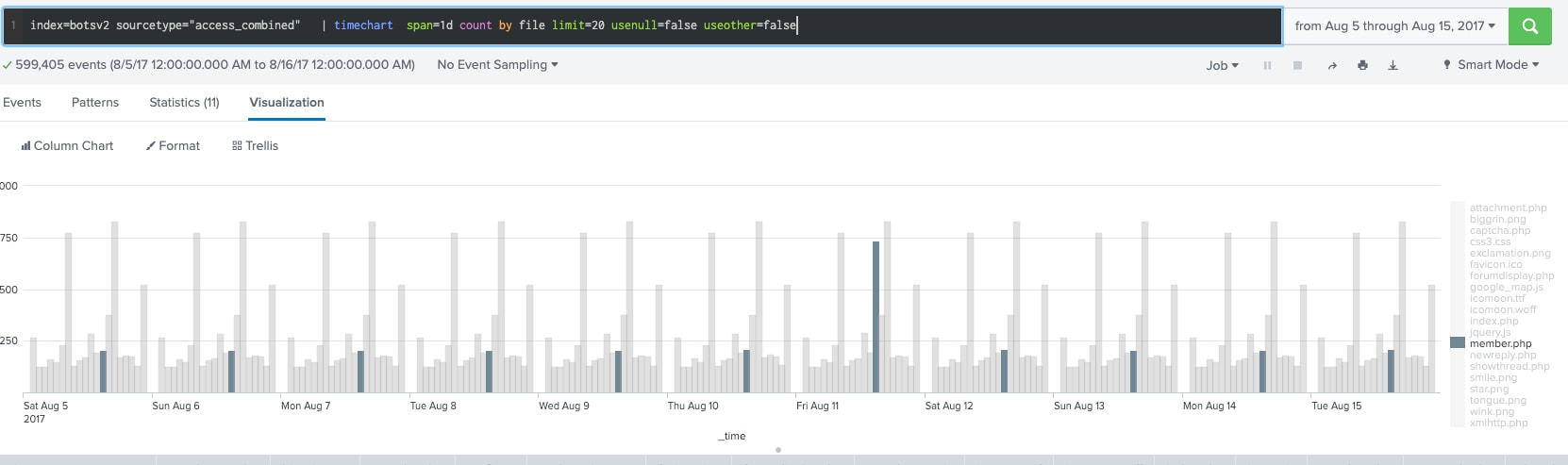

Below is a simple search, run against sample Apache access log data, that shows web resources accessed over time; notice how the file member.php has a huge spike one day:

index=botsv2 sourcetype="access_combined" | timechart span=1d count by file limit=20 usenull=false useother=false
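A chart like this relies on someone noticing the spike. As a complement, the Python sketch below flags resources that were never requested during a baseline period but are suddenly popular. The function name, the `min_hits` threshold, and the idea of keeping a baseline list of paths are all assumptions made for illustration.

```python
from collections import Counter


def unexpected_resources(baseline_paths, todays_paths, min_hits=50):
    """Return resources never requested during the baseline period that were
    requested at least `min_hits` times today, busiest first."""
    known = set(baseline_paths)
    todays_counts = Counter(todays_paths)
    return [
        (path, hits)
        for path, hits in todays_counts.most_common()
        if path not in known and hits >= min_hits
    ]
```

A hidden image loaded by the home page would be requested about as often as the home page itself, so it would stand out immediately in a report like this.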

3. Website Monitoring

Finally, another method that could have detected this compromise is automated website monitoring. Scripted monitoring of a website to ensure everything is working end to end is a common tactic that has been used for years to ensure site reliability. When third-party resources (such as payment processors) are part of a site, it is essential to make sure everything is working and that these outside vendors are adhering to any SLAs. Selenium WebDriver is an open source solution that enables scripted testing of a complete transaction on the e-commerce site. Logging the details from this client monitoring system, together with enterprise proxy logs, would have shown some important events:

- The session timeout message, although fictitious, should have generated an event as the page was unexpected (legitimate timeouts should alert too)

- A hash of the fake checkout page or the fake session timeout page would not have matched the hash of the expected page

- DNS requests from the client (captured on the client or on the network) would have revealed a lookup for an unknown domain (deskofhelp[.]com)

- The criminals didn’t localize their malicious iframe for the non-English sites. Testing the ES or FR sites would have returned an English checkout page, which is again an unexpected result that should generate an alert

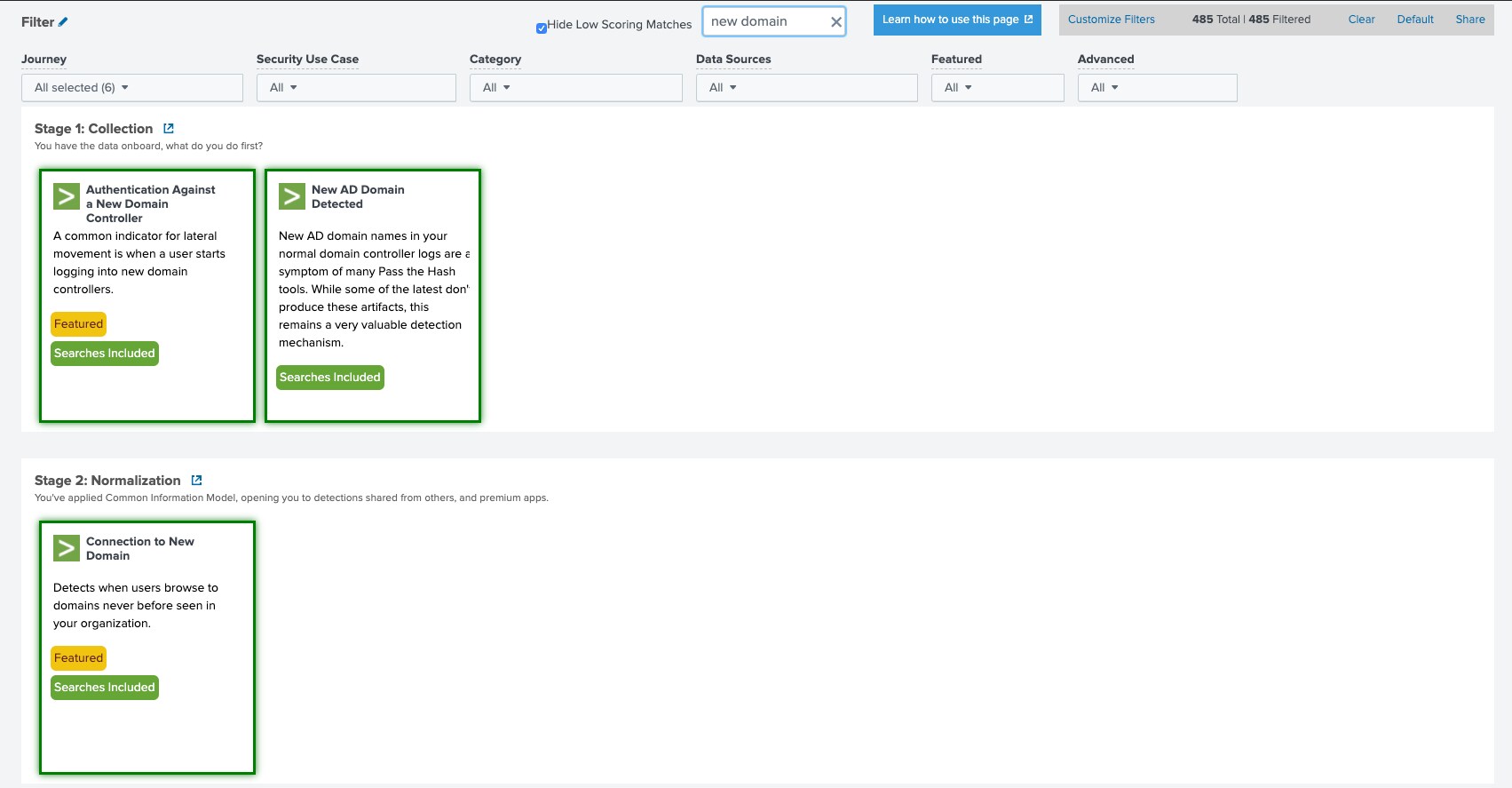
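One of the checks above, spotting a checkout iframe served from an unknown domain, can be sketched with the Python standard library alone. The sketch assumes the monitoring script has already captured the rendered page as a string (with Selenium’s Python bindings that would be something like `driver.page_source`, since the malicious iframe was injected by JavaScript and would not appear in the raw HTML). The class and function names and the allowlist are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class IframeCollector(HTMLParser):
    """Collect the src attribute of every <iframe> in a page."""

    def __init__(self):
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        if tag == "iframe":
            src = dict(attrs).get("src")
            if src:
                self.sources.append(src)


def unexpected_iframe_hosts(html, allowed_hosts):
    """Return the hosts of iframes in `html` that are not in `allowed_hosts`."""
    collector = IframeCollector()
    collector.feed(html)
    hosts = {urlparse(src).hostname for src in collector.sources}
    # Relative URLs have no hostname and are treated as same-site.
    return sorted(h for h in hosts if h and h not in allowed_hosts)
```

Logging the returned list from each scripted checkout run gives Splunk a simple field to alert on: any non-empty result is unexpected.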

These are not new detection solutions; they have been covered previously in blog posts on website monitoring and on detecting new domains.

Based on the “detecting new domains” blog post referred to above, this detection was added to the freely available Splunk Security Essentials app. You can find it in the app by searching for the term “new domain”.

Some of you may have noticed that some of the techniques and references I have linked to are a few years old. This should underscore the point that attacks don’t need to be new or chic in order to be effective, and that tried and true monitoring controls are still very effective.

The different detection techniques shown above are not all related to security. IT Ops, Marketing, and Fraud Ops teams all regularly use Splunk. If Splunk had been in use by just one of these teams, this breach could have been caught much sooner than it was. Imagine if all teams were using Splunk Enterprise and collaborating with shared data.

Thanks to James Brodsky for help in brainstorming these detection scenarios.

1. https://www.digitalcommerce360.com/2020/04/01/us-ecommerce-sales-rise-25-since-beginning-of-march/

2. https://blog.malwarebytes.com/cybercrime/2020/04/online-credit-card-skimming-increases-by-26-in-march/

----------------------------------------------------

Thanks!

Andrew Morris
