In my last blog we focussed on some of the problems with Artificial Intelligence (AI) and public trust that can be compounded by organisational issues such as dark data. This time round we’re going to look at a couple of examples that demonstrate how AI can be used as a force for good.

Over the past few months we have been working with the World Economic Forum (WEF) to test out some of the guidance on AI that we have been drafting with them. There have been a lot of lively debates as the use of AI is clearly divisive, especially when it comes to image processing.

If we look at the UK, there has been controversy recently over police using facial recognition techniques on CCTV footage to support the fight against crime. This has prompted the UK’s Information Commissioner, Elizabeth Denham, to open an investigation into whether image recognition is being used appropriately in this case.

There are even more sinister examples of where image processing can be easily misled, such as this example of tricking an algorithm into thinking a row of rifles was actually a helicopter. With examples like this it’s not difficult to imagine a dystopian world such as the one described in this article about deepfakes.

These are cautionary tales for applying deep learning, but thankfully at Splunk we don’t focus on image processing – we’re all about machine data!

When it comes to machine data, there is a wealth of use cases where applying machine learning has definitely had a positive impact on people’s lives.

If you speak to a well-travelled security or fraud analyst about how they effectively detect threats, something that will often come up in conversation is the term ‘outlier’. There are numerous ways to detect anomalies in data sets, but I’d suggest you start with this technical walkthrough on statistical outliers before moving on to more advanced techniques (described as flavours of ice cream of course!).
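To make the idea of a statistical outlier concrete, here is a minimal Python sketch. It is not the method from the walkthrough mentioned above; it assumes a generic robust approach (the modified z-score based on the median absolute deviation), and the function name, threshold and sample numbers are all made up for illustration.

```python
from statistics import median


def mad_outliers(values, threshold=3.5):
    """Return the values whose modified z-score exceeds the threshold.

    Assumption: 3.5 is a commonly quoted cut-off, not a value from the blog.
    """
    med = median(values)
    abs_dev = [abs(v - med) for v in values]
    mad = median(abs_dev)  # median absolute deviation
    if mad == 0:
        return []  # no spread in the data, so nothing can be scored
    # 0.6745 rescales the MAD so the score is comparable to a standard z-score
    return [v for v, d in zip(values, abs_dev) if 0.6745 * d / mad > threshold]


# Hypothetical daily prescription counts for a single provider
daily_counts = [12, 15, 11, 14, 13, 12, 16, 14, 13, 95]
print(mad_outliers(daily_counts))
```

Using the median rather than the mean keeps a single extreme value from hiding itself by inflating the spread, which is why this flavour tends to behave well on small samples.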

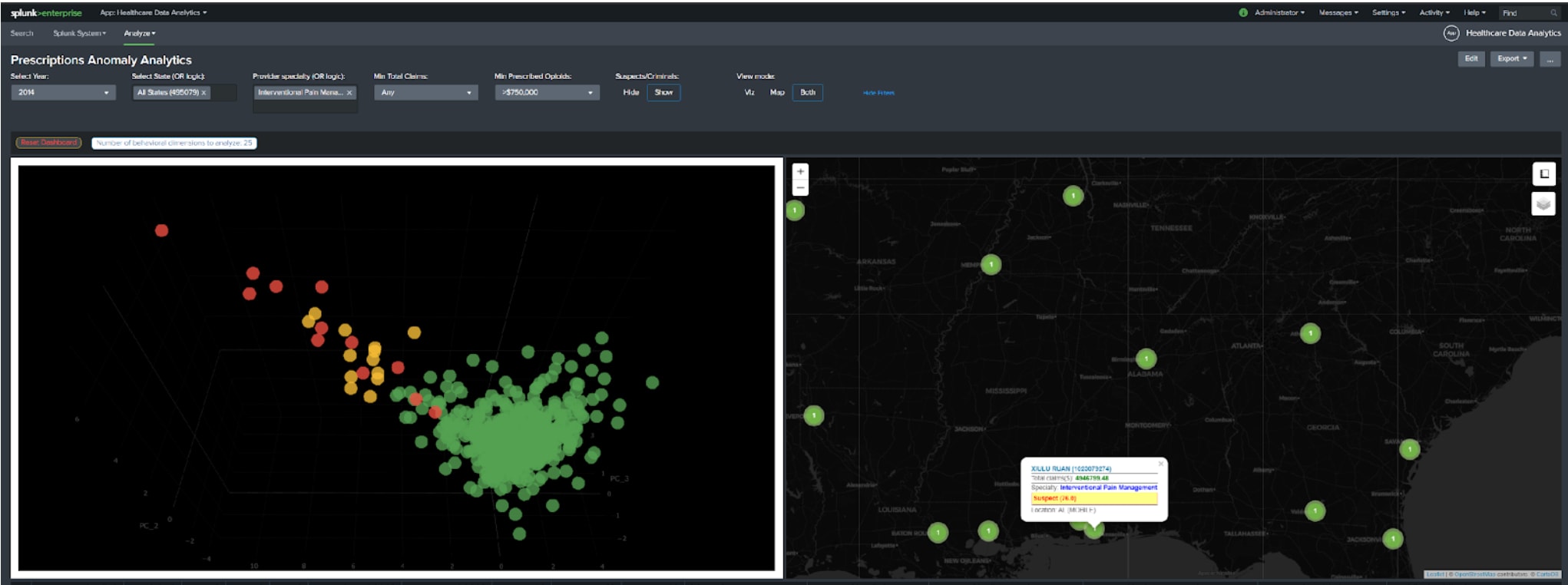

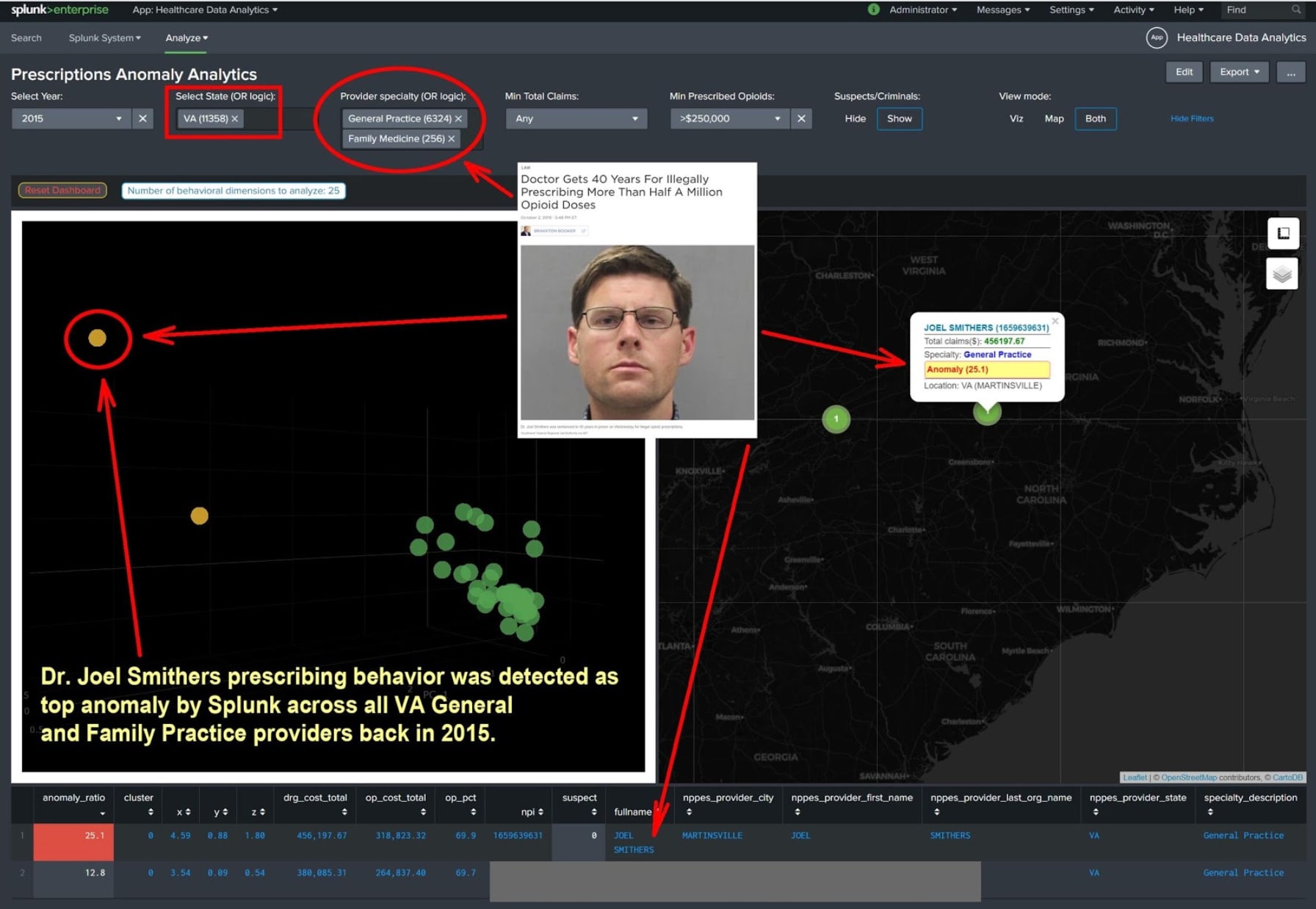

*Clustering technique used to identify fraudulent prescriptions on data from data.cms.gov. Red dots indicate providers that are already in prison or have been investigated in some way for suspicious activity by the DEA, Department of Justice or law enforcement.

The good folks at New York Presbyterian hospital realised that many of the techniques commonly used for outlier detection in IT security could also be used to detect outliers in the handling of controlled substances.

The approach to detecting outliers at New York Presbyterian closely followed the chocolate ice cream technique in this anomaly detection blog. We can’t show you their results (that wouldn’t be ethical, would it?), so to demonstrate how it works there are a few graphics here, based on open source data that we have run through Splunk, where the same technique has been used to:

Many thanks to Gleb Esman for helping provide the details for these examples.

At Splunk we are always ready to support customers who are interested in using anomaly detection techniques or who want to use Splunk to detect fraud. A great place to start is the Splunk Security Essentials for Fraud Detection where some of the techniques in this blog are presented in more detail.

*Anomaly detection analysis of data published by data.cms.gov that contains aggregate details of prescriptions billed to Medicare by providers.

Machine learning isn’t just good at helping fight criminal activity; it can also be used to deliver other positive outcomes.

We have hundreds of universities across the globe using Splunk to monitor their IT from a security and operational perspective. At one of them, the University of Nevada, Las Vegas (UNLV), a psychology professor called Matt Bernacki realised that all of the data they were collecting from systems across campus also gave them good insight into student engagement. He spearheaded a project that built a model to predict whether students were likely to pass or fail a course, helping lecturers and other academic staff make timely interventions to support students. In the first semester of using this system, UNLV identified over 100 failing students who, with interventions, turned things around and went on to get top grades.
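As a rough illustration of what a pass/fail prediction model can look like, here is a small Python sketch. The blog does not say which algorithm or features UNLV used, so everything here is an assumption: a plain logistic regression trained by gradient descent, two invented engagement features (a scaled login rate and the fraction of assignments submitted), and a handful of made-up students.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(rows, labels, lr=0.1, epochs=2000):
    """Fit weights and a bias with simple stochastic gradient descent."""
    weights = [0.0] * len(rows[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(rows, labels):
            pred = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))
            err = pred - y  # gradient of the log loss with respect to the score
            weights = [w - lr * err * xi for w, xi in zip(weights, x)]
            bias -= lr * err
    return weights, bias


def pass_probability(weights, bias, x):
    """Estimated probability that a student with features x passes."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


# Hypothetical features: (scaled login rate, fraction of assignments submitted)
students = [(0.9, 1.0), (0.8, 0.9), (0.7, 0.8), (0.2, 0.3), (0.1, 0.2), (0.3, 0.1)]
passed = [1, 1, 1, 0, 0, 0]  # 1 = passed, 0 = failed (made-up labels)

weights, bias = train_logistic(students, passed)
engaged = pass_probability(weights, bias, (0.85, 0.95))
disengaged = pass_probability(weights, bias, (0.15, 0.2))
print(f"engaged: {engaged:.2f}, disengaged: {disengaged:.2f}")
```

A model like this only produces a probability; deciding which students to approach, and how, stays with the academic staff.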

*Analysis of Open University data to demonstrate how predictions can be made on student outcomes based on their digital footprint from university IT systems.

As well as working with a number of universities in the US and UK to help build out similar use cases, we’ve also just launched a Student Success Toolkit to apply a cookie-cutter approach to building out these kinds of predictive capabilities.

Note that in both of these use cases, intervention and analysis by a person are still required in order to take an action. If you view AI as augmented intelligence, it is there to augment a person’s decision-making process rather than replace it – people are much better at putting information into context than machines are (well, at the moment anyway!).

As well as having someone in the loop, a secondary consideration when you’re looking to apply these types of techniques is to make sure that you’d be comfortable with the details being published. If you’re not, it might be that the way you are using them doesn’t meet everyone’s standards for ethics: ultimately you need to maintain public trust while trying to deliver positive outcomes.

Finally, to follow up on the clue about product features I mentioned last time, we have now launched the Deep Learning Toolkit for Splunk. Amazing work by Philipp Drieger getting this together - it’s going to be an awesome way of delivering even more advanced use cases in Splunk.

Until next time,

Greg
