The Words of the Birds - Leveraging AI to Detect Songbirds

When was the last time you had the chance to listen to one of the most beautiful concerts nature can play for you? From simple chirps and tweets to complex songs woven into a sophisticated soundscape, you may wish you could decode and understand the birds’ daily conversation. “Hey, good morning, how are you today?” is what you might hear in the early hours, sometimes so loud that the chirping wakes you up. Alas, we are far from really understanding the stories birds tell each other, but what if you had an AI system that could identify the birds around you by their sounds?

Start the AI and Catch a Bird

After a bit of research, I discovered BirdNET, an amazing project by the Cornell Lab of Ornithology and Chemnitz University of Technology (TU Chemnitz). It recognizes birds in sound recordings with the help of a neural network trained to classify 984 bird species. Luckily, the researchers published BirdNET on GitHub, so you can easily get this recognition system up and running. It should take only a few minutes and three simple steps:

- Clone the repo

- Build a Docker image

- Run BirdNET on a recorded sound file and save the results
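The third step produces a tab-separated results file. The Python sketch below shows what reading such a file could look like. It is a minimal sketch under assumptions: the column headers (`Begin Time (s)`, `End Time (s)`, `Species Code`, `Common Name`, `Confidence`) are inferred from the Splunk field names used later in this post rather than taken from BirdNET’s documentation, and `parse_birdnet_tsv`, `Detection`, and the sample rows are hypothetical.

```python
import csv
import io
from dataclasses import dataclass

# Assumed column headers, inferred from the Splunk field names in this post.
BEGIN, END = "Begin Time (s)", "End Time (s)"
CODE, NAME, CONF = "Species Code", "Common Name", "Confidence"


@dataclass
class Detection:
    begin_s: float  # offset from the start of the recording, in seconds
    end_s: float
    code: str
    name: str
    confidence: float  # model confidence between 0 and 1


def parse_birdnet_tsv(text: str) -> list[Detection]:
    """Parse a tab-separated result table into Detection records (hypothetical helper)."""
    reader = csv.DictReader(io.StringIO(text), delimiter="\t")
    return [
        Detection(
            begin_s=float(row[BEGIN]),
            end_s=float(row[END]),
            code=row[CODE],
            name=row[NAME],
            confidence=float(row[CONF]),
        )
        for row in reader
    ]


# Invented sample rows, for illustration only.
sample = (
    "Selection\tBegin Time (s)\tEnd Time (s)\tSpecies Code\tCommon Name\tConfidence\n"
    "1\t0.0\t3.0\teurbla\tEurasian Blackbird\t0.93\n"
    "2\t3.0\t6.0\tcomnig1\tCommon Nightingale\t0.85\n"
)

for d in parse_birdnet_tsv(sample):
    print(f"{d.begin_s:5.1f}s  {d.name:<20} {d.confidence:.2f}")
```

The begin offset matters later: Splunk adds it to the file’s timestamp to place each detection at its actual time of day.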

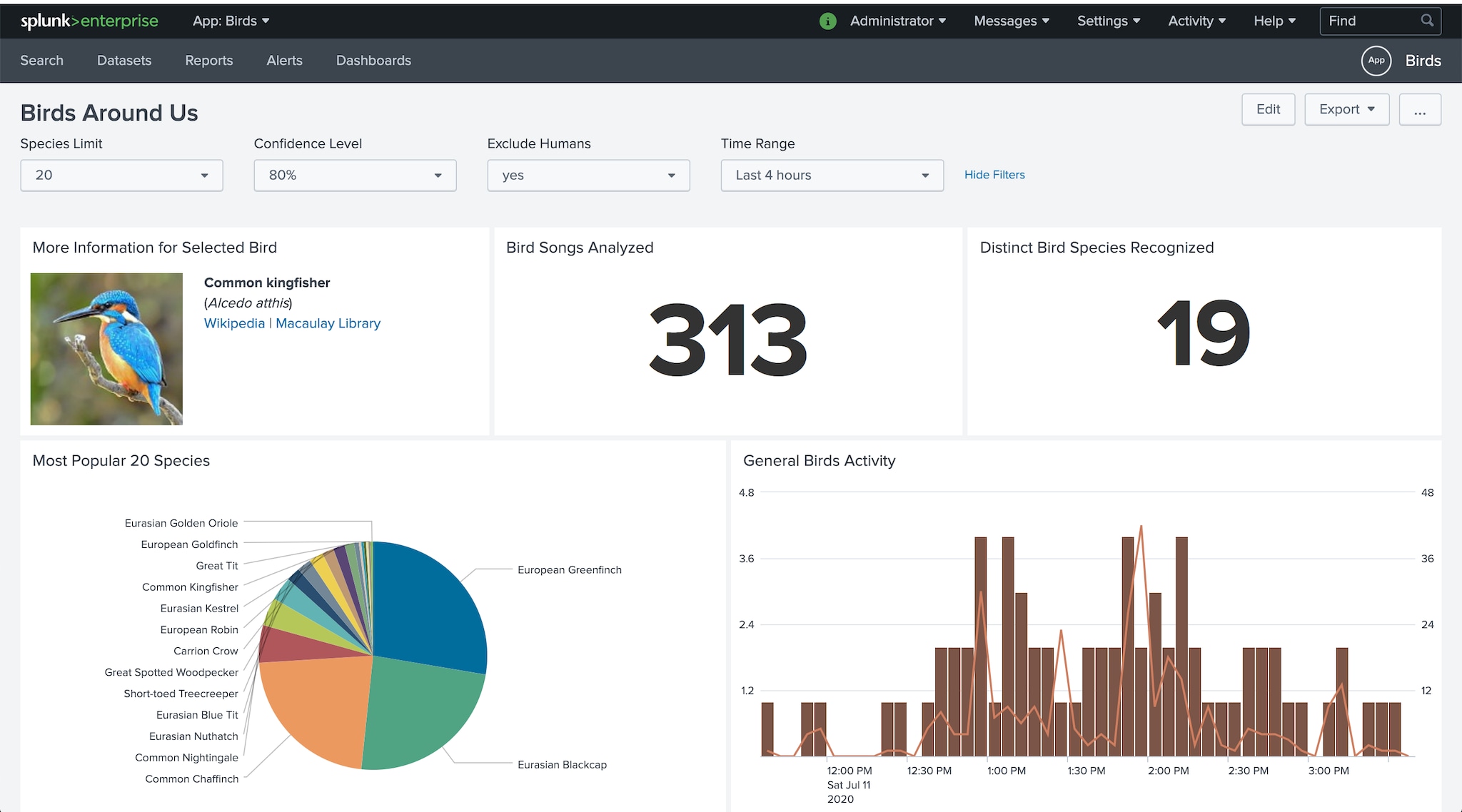

But wait, how do you actually analyze the results easily, both in real time and over a longer period? This is where Splunk’s Data-to-Everything Platform comes in, letting you find answers to any question and gain insights very quickly. Again, just three simple steps:

- Define a data input in Splunk and point to the TSV files generated by BirdNET

- Write a few searches to populate a dashboard like the one above

- Define a drilldown that shows a thumbnail picture and hyperlinks with more information about a bird species (this is the fun part!)
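As an illustration of the drilldown idea, here is a hypothetical Python helper that turns a clicked species name into a “more information” link. The post does not say which site its links point to; Wikipedia article URLs are assumed here, and `info_url` and `drilldown_row` are made-up names. In Splunk itself this logic would live in the dashboard’s drilldown definition rather than in Python, and the thumbnail part is left out.

```python
from urllib.parse import quote

# Assumption: links point to Wikipedia; the post does not name a site.
WIKI_BASE = "https://en.wikipedia.org/wiki/"


def info_url(common_name: str) -> str:
    """Build a hypothetical 'more information' link for a species name."""
    # Wikipedia titles use underscores instead of spaces; quote() escapes
    # any remaining unsafe characters such as apostrophes.
    return WIKI_BASE + quote(common_name.strip().replace(" ", "_"))


def drilldown_row(common_name: str) -> dict[str, str]:
    """Values a drilldown panel might show for one clicked species."""
    return {"name": common_name, "link": info_url(common_name)}


print(drilldown_row("Eurasian Blackbird"))
```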

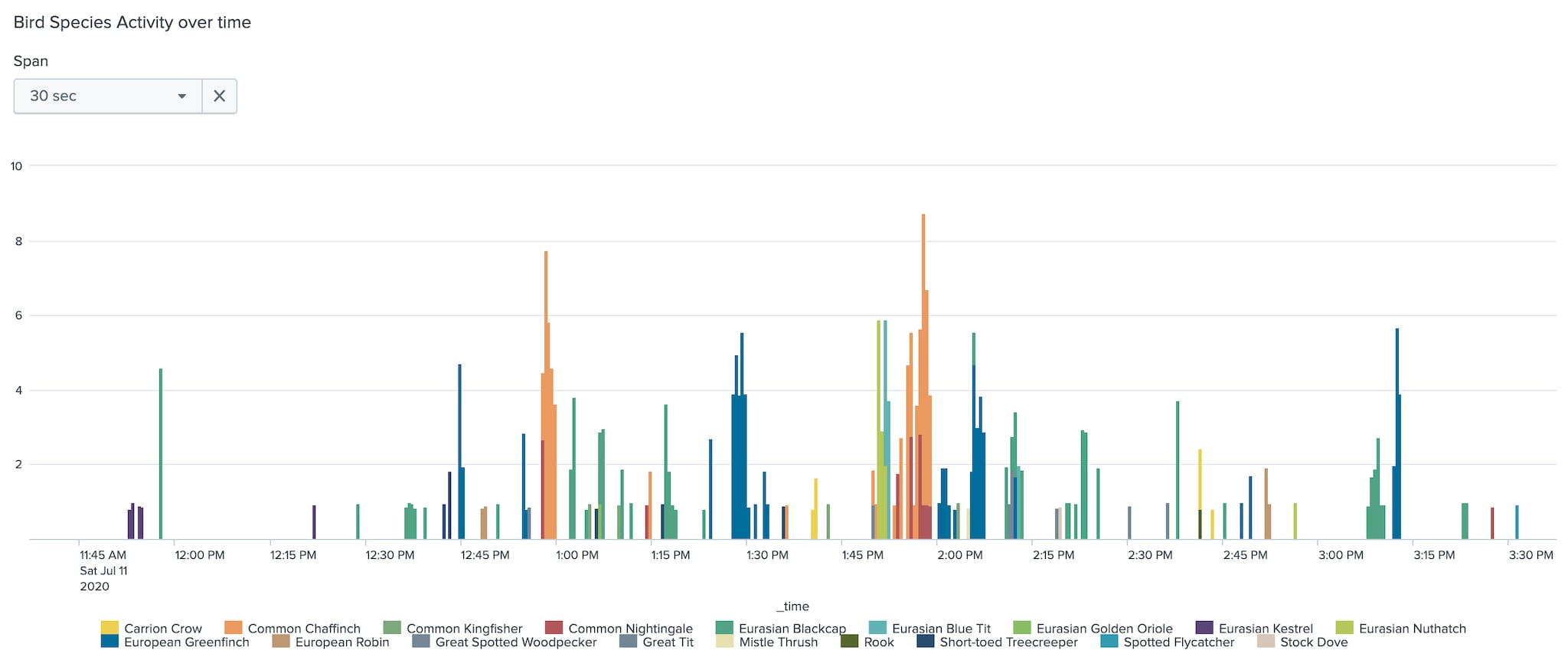

Now let’s answer a typical question first: “Which birds sing when and for how long?” The following search provides you with a simple time chart that shows which birds are the most active singers:

```
index="birds" sourcetype="birds-tsv" Confidence>0.8 NOT "Common Name"="Human"
| rename "Begin Time _s" as time_start, "Common Name" as name, Confidence as confidence
| eval _time=('_time' + time_start)
| timechart limit=20 useother=f span=30s sum(confidence) by name
```
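To make the search’s logic explicit, the following Python sketch approximates what it computes: drop low-confidence and “Human” detections, shift each detection to its absolute time (file timestamp plus begin offset), bucket into 30-second spans, and sum the confidence per species. It is only an approximation, assuming events arrive as `(file_start_epoch, begin_s, name, confidence)` tuples; `activity_timechart` is a hypothetical name, and Splunk’s exact ranking for `limit` and its bucket alignment may differ.

```python
from collections import defaultdict


def activity_timechart(events, min_conf=0.8, span_s=30, limit=20):
    """Approximate the timechart search above.

    events: iterable of (file_start_epoch, begin_s, name, confidence).
    Returns {bucket_start: {name: summed_confidence}}, keeping only the
    `limit` species with the highest total confidence (like useother=f,
    the rest are dropped rather than grouped as "OTHER").
    """
    buckets = defaultdict(lambda: defaultdict(float))
    totals = defaultdict(float)
    for file_start, begin_s, name, conf in events:
        # Confidence>0.8 NOT "Common Name"="Human"
        if conf <= min_conf or name == "Human":
            continue
        t = file_start + begin_s  # eval _time = _time + time_start
        bucket = int(t // span_s) * span_s  # span=30s
        buckets[bucket][name] += conf
        totals[name] += conf
    top = set(sorted(totals, key=totals.get, reverse=True)[:limit])
    return {
        start: {n: c for n, c in series.items() if n in top}
        for start, series in sorted(buckets.items())
    }


events = [
    (0, 0.0, "Eurasian Blackbird", 0.90),
    (0, 12.0, "Eurasian Blackbird", 0.95),
    (0, 31.0, "Great Tit", 0.85),
    (0, 40.0, "Human", 0.99),  # filtered out by name
    (0, 45.0, "Great Tit", 0.50),  # filtered out by confidence
]
print(activity_timechart(events))
```

Summing confidence rather than counting detections lets confident detections weigh more than borderline ones, which is one way to read “most active singers.”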

Let’s visualize it as a time chart and find out which birds sing on a summer afternoon:

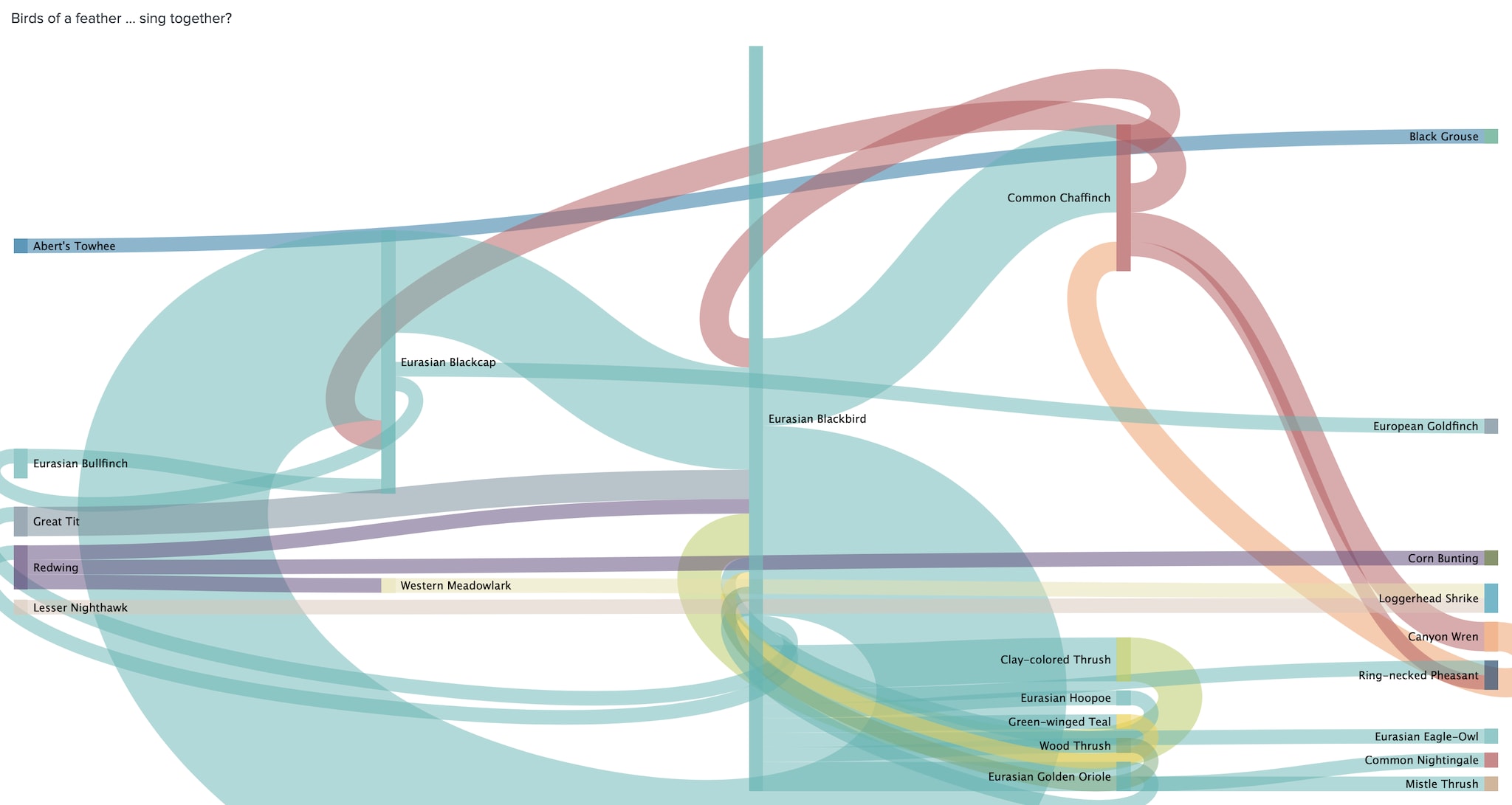

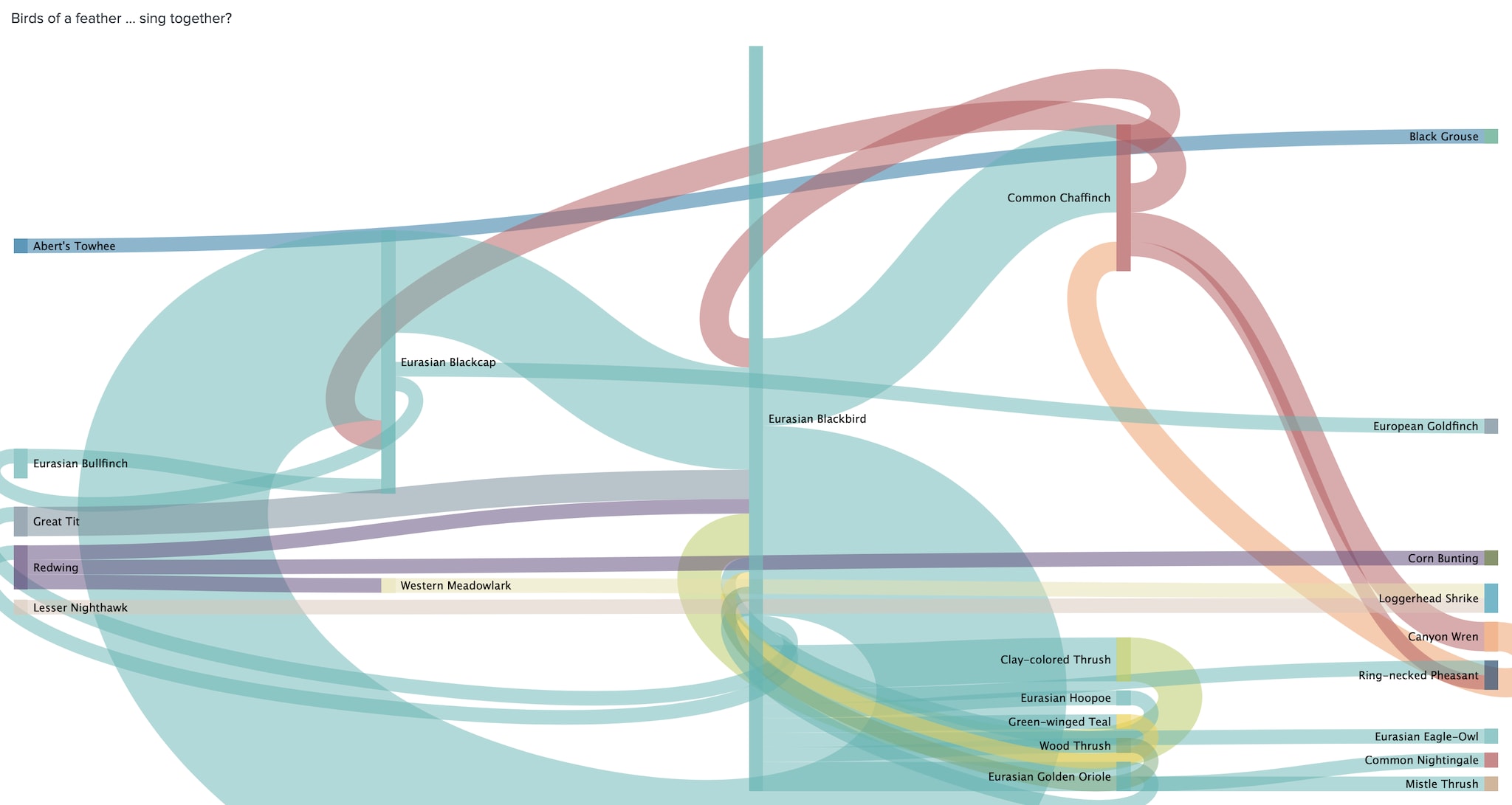

Birds of a Feather… Sing Together?

Of course, you can also run more sophisticated analyses. You surely know the phrase “birds of a feather flock together,” which raises another question: “Which birds sing together?” Here is a search that can answer it:

```
index="birds" sourcetype="birds-tsv"
| rename "Begin Time _s" as time_start, "End Time _s" as time_end, "Common Name" as name, "Confidence" as confidence, "Species Code" as code
| eval _time=_time+time_start
| eval duration=time_end-time_start
| search confidence>0.9 NOT name="Human"
| sort 0 _time
| streamstats dc(name) as dc_names first(name) as src last(name) as dest time_window=10
| table _time src dest dc_names
| where dc_names==2
| stats count by src dest
```
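The `streamstats` step is the heart of this search. A rough Python equivalent could look like the sketch below, assuming each event is a `(time_s, name, confidence)` tuple with the begin offset already added and a trailing 10-second window that excludes its left edge. `co_singing_pairs` is a hypothetical name, and Splunk’s exact window boundaries may differ.

```python
from collections import Counter, deque


def co_singing_pairs(events, min_conf=0.9, window_s=10.0):
    """Approximate the streamstats-based search above.

    events: iterable of (time_s, name, confidence), with the detection's
    begin offset already added to time_s. For each detection, the trailing
    window (time_s - window_s, time_s] is examined; if it holds exactly two
    distinct species, the pair (earliest name in window, current name) is
    counted, mirroring first(name), last(name), and dc(name)==2.
    """
    # search confidence>0.9 NOT name="Human" | sort 0 _time
    kept = sorted((t, n) for t, n, c in events if c > min_conf and n != "Human")
    window = deque()
    pairs = Counter()
    for t, name in kept:
        window.append((t, name))
        # Drop detections that fell out of the time window; the current
        # detection always stays, so the deque never becomes empty.
        while window[0][0] <= t - window_s:
            window.popleft()
        if len({n for _, n in window}) == 2:
            pairs[(window[0][1], name)] += 1  # stats count by src dest
    return pairs


events = [
    (0.0, "Eurasian Blackbird", 0.95),
    (3.0, "Great Tit", 0.92),
    (6.0, "Eurasian Blackbird", 0.97),
    (30.0, "Great Tit", 0.93),
    (33.0, "Human", 0.99),
]
print(co_singing_pairs(events))
```

Like the search, this also counts a pair such as (A, A) when the window reads A, B, A, because only the earliest and the latest species in the window are recorded.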

With the help of a Sankey diagram we can see which birds tend to sing together:

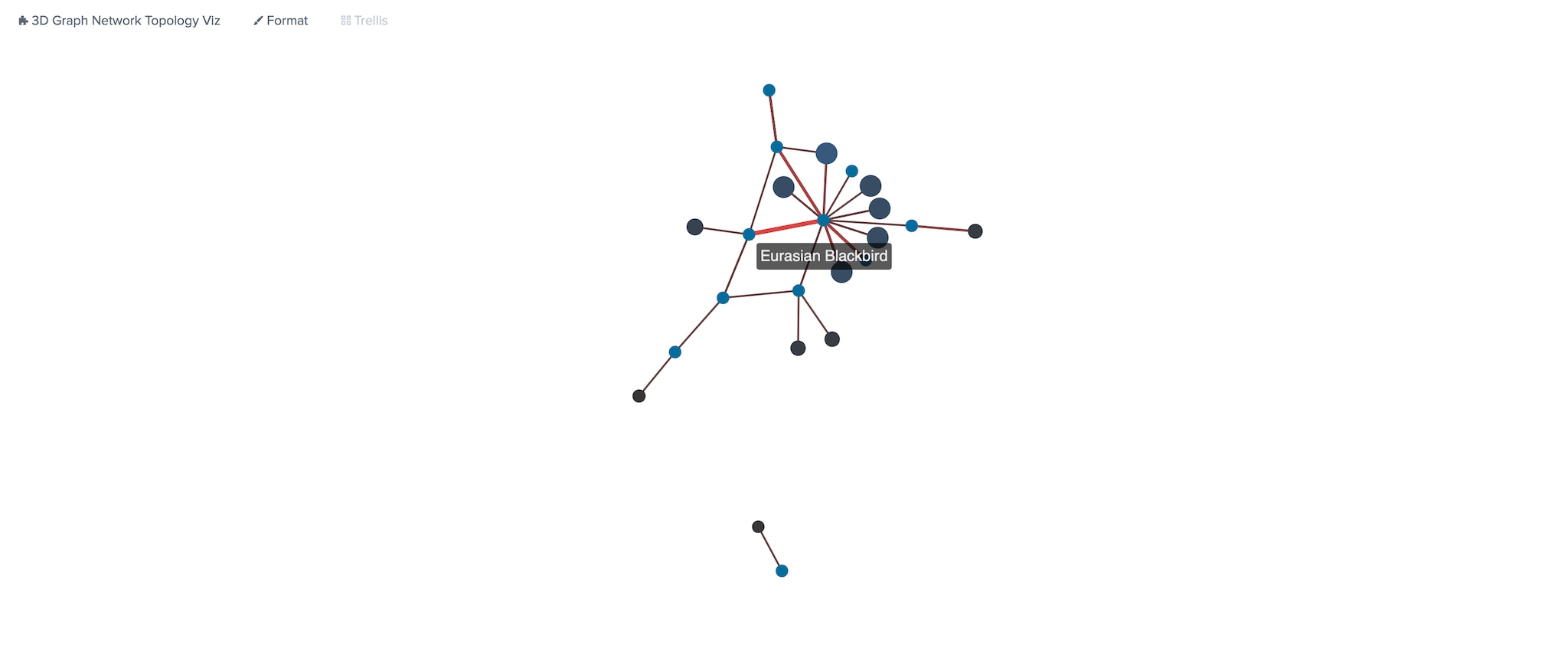

If this diagram is too complicated to read, we can get an even more abstract representation and draw a “BirdNET” using the 3D Graph Network Topology Visualization with its built-in graph analysis framework. Eagle-eyed readers may spot the little meta bird depicted in this graph.
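Conceptually, such a graph is built from the `src`, `dest`, and `count` columns of the previous search. The sketch below, with hypothetical names and made-up counts, shows one way to turn those pair counts into an undirected weighted graph in Python and look up each bird’s most frequent singing partner. It illustrates the idea only and is not how the visualization app works internally; treating “sings together” as symmetric and dropping self-pairs are assumptions.

```python
from collections import defaultdict


def build_bird_graph(pair_counts):
    """Turn {(src, dest): count} into an undirected weighted adjacency map.

    Self-pairs are skipped and A->B is merged with B->A, assuming
    "sings together" is a symmetric relation.
    """
    graph = defaultdict(lambda: defaultdict(int))
    for (src, dest), count in pair_counts.items():
        if src == dest:
            continue
        graph[src][dest] += count
        graph[dest][src] += count
    return graph


def strongest_partner(graph, bird):
    """Return the species that most often sings together with `bird`, or None."""
    partners = graph.get(bird)  # .get() does not create missing entries
    if not partners:
        return None
    return max(partners, key=partners.get)


# Made-up pair counts in the shape produced by "stats count by src dest".
counts = {
    ("Eurasian Blackbird", "Great Tit"): 3,
    ("Great Tit", "Eurasian Blackbird"): 2,
    ("Eurasian Blackbird", "Common Nightingale"): 4,
    ("Common Nightingale", "Common Nightingale"): 9,
}
g = build_bird_graph(counts)
for bird in sorted(g):
    print(bird, "->", strongest_partner(g, bird))
```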

Trust the AI or the Bird?

Now, before we wrap up, you may want to ask some critical questions about AI systems. For example, one question you might have asked yourself: can we rely on BirdNET’s predictions, and how do we know whether the results are 100% accurate? Well, we don’t. In general, it’s very hard to get an AI system to 100% accuracy; you may have noticed the confidence-level selector on the dashboard above.

However, we can take the pre-trained model, in this case built by a team of ornithology experts, and evaluate its results. During my experiment, BirdNET detected an American robin here in Central Europe, which made me wonder whether that could actually be true. I learned that I could achieve much better results by adding my location data: with it, BirdNET can apply additional “knowledge” about which bird species are native to certain regions. After fine-tuning the parameters, most of the findings matched what I expected and observed. For example, I could easily verify the Eurasian blackbirds that I know very well; I have often watched them singing in a nearby tree or chasing worms after the rain. Yet I was really surprised by the diversity of other bird species around us. For instance, I had never seen nightingales in my region before, but once I learned more about their complex and beautiful song, I was able to identify it easily and verify the findings on the dashboard.
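The effect of adding location data can be illustrated with a simple post-filter. This is not BirdNET’s mechanism (BirdNET applies its regional knowledge internally once you provide your location); it is a hypothetical Python sketch with a made-up species list that flags a detection such as the American robin as suspicious for Central Europe.

```python
# Hypothetical list of species expected in the region; BirdNET itself
# derives regional knowledge from the location you provide.
CENTRAL_EUROPE = {"Eurasian Blackbird", "Common Nightingale", "Great Tit"}


def filter_by_region(detections, regional_species):
    """Split (name, confidence) detections into (plausible, suspicious)."""
    plausible, suspicious = [], []
    for name, conf in detections:
        target = plausible if name in regional_species else suspicious
        target.append((name, conf))
    return plausible, suspicious


detections = [("Eurasian Blackbird", 0.93), ("American Robin", 0.81)]
ok, odd = filter_by_region(detections, CENTRAL_EUROPE)
print("plausible:", ok)
print("suspicious:", odd)
```

Flagging rather than silently deleting keeps the questionable detections visible, so they can still be checked by ear, as with the nightingales above.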

What’s your Bird?

If you’re wondering what AI and all these birds have to do with Splunk, it’s worth looking at how open and flexible the Data-to-Everything Platform is, which makes for a fast time to value. I set out to research the types of birds around me, and a few days later I was able to draw some conclusions. Similarly, you can swap birds for any entity you are able to collect data from, whether with the help of an AI system or through the classic collection of logs, metrics, and events from any system. My colleague Greg recently wrote about collaborations with the World Economic Forum, in which he discusses more possibilities to unlock the power of AI.

What worked for sound analysis in the bird example can work the same way for image recognition or any other AI system: you can quickly analyze what’s going on over time and act on the results. Of course, you can also combine such systems with Splunk’s Machine Learning Toolkit to apply a second layer of intelligence on top of the data. Last but not least, you could even build your very own domain-specific AI system with the Deep Learning Toolkit for Splunk. Now over to you: what’s your next AI adventure?

Happy Splunking,

Philipp

Many thanks to Mina, Jessica, and Emma for their continuous support and help, not only with this post but also with my other articles on this blog!

