Upping the Auditing Game for Correlation Searches Within Enterprise Security — Part 1: The Basics

One question I get asked frequently is “how can I get deeper insight and audit correlation searches running inside my environment?”

The first step in understanding our correlation searches is creating a baseline of what is expected and identifying what is currently enabled and running today.

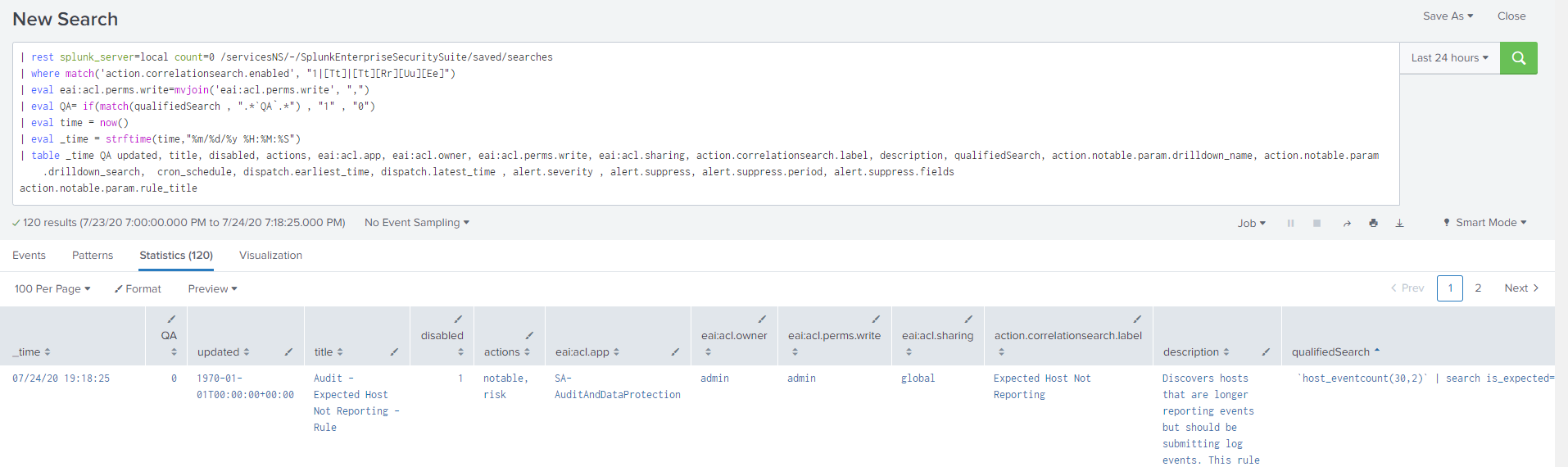

Content Management inside Splunk Enterprise Security is a quick way to filter on what is enabled (it’s built into the UI and works out of the box). A second option, and perhaps a more fun way to handle this, is to use the “| rest” search command to pull the search configurations directly into the Splunk search results. With this method, we have the option of saving the results to a summary index and doing additional analytics on them.

One of the best parts of Splunk is that we can leverage any data, including internal data, to create deeper insights. Grabbing and storing snapshots in time lets us manipulate and filter the data about our correlation searches and gain more valuable information on what's running inside the environment. Let’s start with an example for option 2. Go to your Splunk search bar and run the following search to grab key information about correlation searches. Note that the search ends with “| collect index=correlationlogs”, so an index named correlationlogs must already exist before you run it.

| rest splunk_server=local count=0 /servicesNS/-/SplunkEnterpriseSecuritySuite/saved/searches

| where match('action.correlationsearch.enabled', "1|[Tt]|[Tt][Rr][Uu][Ee]")

| eval eai:acl.perms.write=mvjoin('eai:acl.perms.write', ",")

| eval QA= if(match(qualifiedSearch , ".*`QA`.*") , "1" , "0")

| eval _time = now()

| table _time, QA, updated, title, disabled, actions, eai:acl.app, eai:acl.owner, eai:acl.perms.write, eai:acl.sharing, action.correlationsearch.label, description, qualifiedSearch, action.notable.param.drilldown_name, action.notable.param.drilldown_search, cron_schedule, dispatch.earliest_time, dispatch.latest_time, alert.severity, alert.suppress, alert.suppress.period, alert.suppress.fields, action.notable.param.rule_title

| collect index=correlationlogs

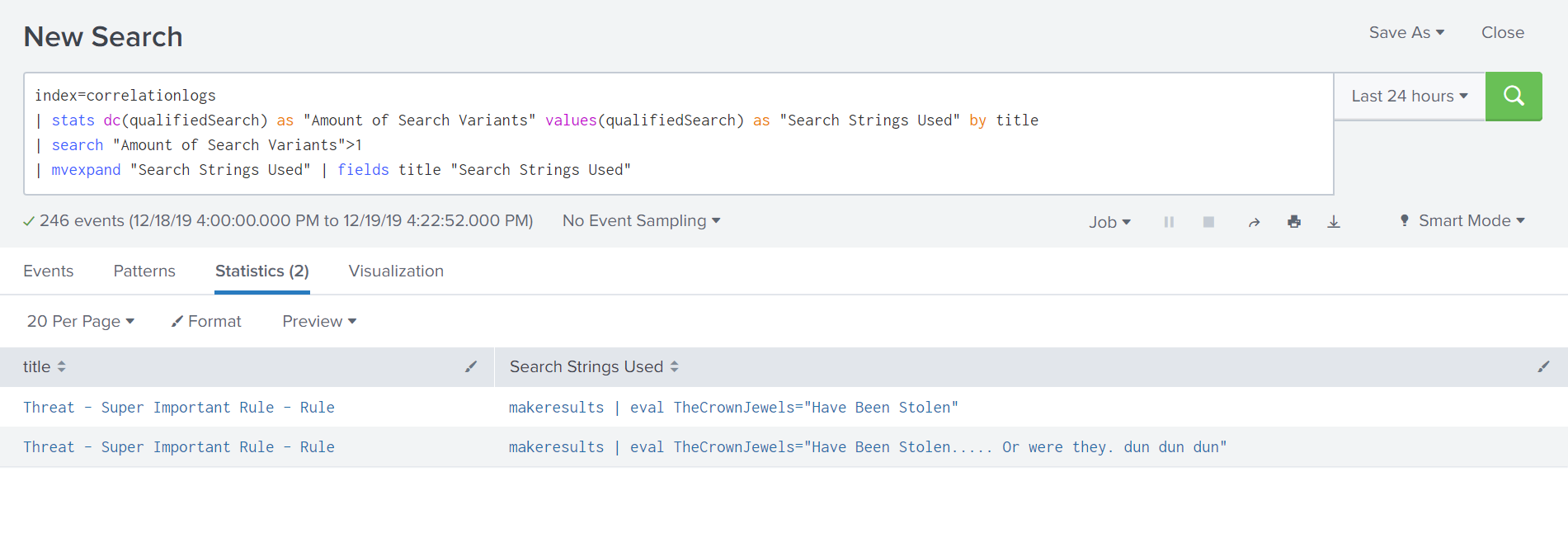

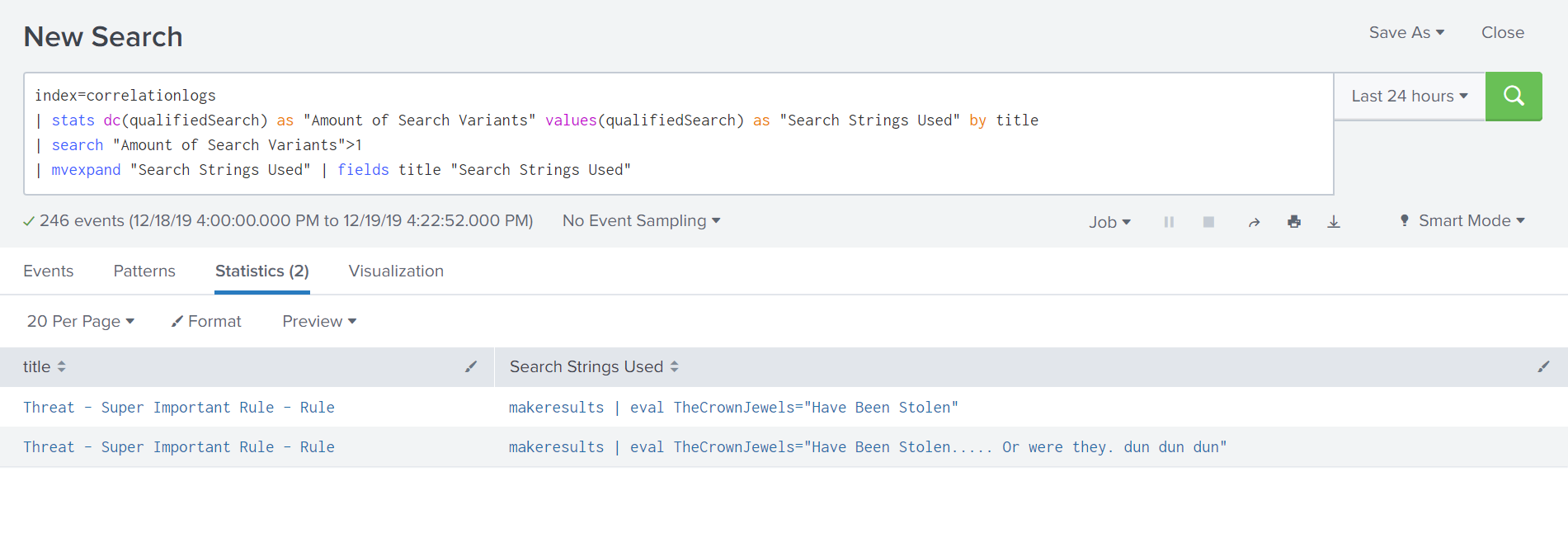

By running this search every hour (or whatever interval makes sense for you), we get a snapshot in time of which rules were enabled and what SPL was being used. Going a step further and adding the “| collect” search command at the end of the search pushes the data to its own index, where we can start doing additional analytics, like “was there a change in the number of enabled searches?”, “was there a change to the SPL being used?”, and “what changes in adaptive response actions were made?”. Here’s an example of operating on that summarized data, where we search the new index for any correlation searches with more than one search variant seen in the last 24 hours:

index=correlationlogs earliest=-24h

| stats dc(qualifiedSearch) as "Amount of Search Variants" values(qualifiedSearch) as "Search Strings Used" by title

| search "Amount of Search Variants">1

| mvexpand "Search Strings Used" | fields title "Search Strings Used"
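The same index can also help answer whether the number of enabled searches changed over time. The search below is a minimal sketch, assuming hourly snapshots in the correlationlogs index and that the disabled field is extracted at search time with a value of 0 for enabled searches; the names enabled_searches and change are illustrative, not part of the original search.

```spl
index=correlationlogs earliest=-7d disabled=0
| timechart span=1h dc(title) as enabled_searches
| delta enabled_searches as change
| where change != 0
```

Each row that remains is an hour in which the count of enabled correlation searches differed from the previous hour. If a scheduled snapshot is skipped, that hour will show a count of zero and appear as a change, so the span is best kept in line with the snapshot interval.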

Since our initial “| rest” search collects a lot of additional information, we can easily swap out the “qualifiedSearch” field in the search-variant example for any of the other data points, like cron schedule, severity, or adaptive response actions, to look for changes to our environment. Simply schedule the “| rest” search to run on an hourly or daily basis, and have the audit searches run afterward to look for changes as they come up.
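As a rough sketch of that swap, the search below looks for correlation searches whose cron schedule changed in the last 24 hours. It assumes the snapshots land in the correlationlogs index as described and that cron_schedule is extracted as a field at search time; the output names schedule_variants and schedules_seen are illustrative.

```spl
index=correlationlogs earliest=-24h
| stats dc(cron_schedule) as schedule_variants values(cron_schedule) as schedules_seen by title
| where schedule_variants > 1
| mvexpand schedules_seen
| fields title schedules_seen
```

The same pattern should apply to the other collected fields by replacing cron_schedule in the stats clause.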

Stay tuned for part 2 where we will start adding audit logs and additional visualizations.

----------------------------------------------------

Thanks!

Chris Shobert
