Smart Ticket Insights App for Splunk

Some of you may have attended the recent webinar on how to simplify ticket remediation with ML-Powered Analysis. We’re thrilled to announce that we have packaged the new app shown in that demo – the Smart Ticket Insights App for Splunk – and it is now live on Splunkbase!

This app is built on top of the Splunk Machine Learning Toolkit (MLTK) and provides a guided workflow to help you gain insight into ticket data using machine learning. It is the first in our new “Smart Workflows” series of domain-specific workflows. Smart Workflows are machine learning (ML) applications built with MLTK that let users surface insights for common challenges unique to their vertical without needing to know how to build a model from scratch. A Smart Education Insights app will also be available for download from Splunkbase soon. Stay tuned for future updates!

Identifying Frequently Occurring Types of Tickets

As we discussed in the webinar, IT Operations teams often face a huge variety of support tickets related to all aspects of the business.

Being able to rapidly triage and respond to this variety of requests can be a big challenge, and the Smart Ticket Insights app for Splunk is designed to help identify patterns in ticket data so that operations teams can:

- Quickly identify similar types of tickets; and

- Remediate those tickets as rapidly as possible.

Once identified, tickets can be processed by further actions such as a playbook in Phantom to automate the remediation steps.

How to Use the App

Once the app and its dependencies have been installed, it’s as simple as inputting ticket data – such as ticket data collected from ServiceNow using the Splunk Add-on for ServiceNow or from Jira using the Add-on for JIRA – selecting the appropriate fields from the dropdowns, and pressing “go.”

The app consists of three sections, and this blog walks through how to use each of them.

Data Input

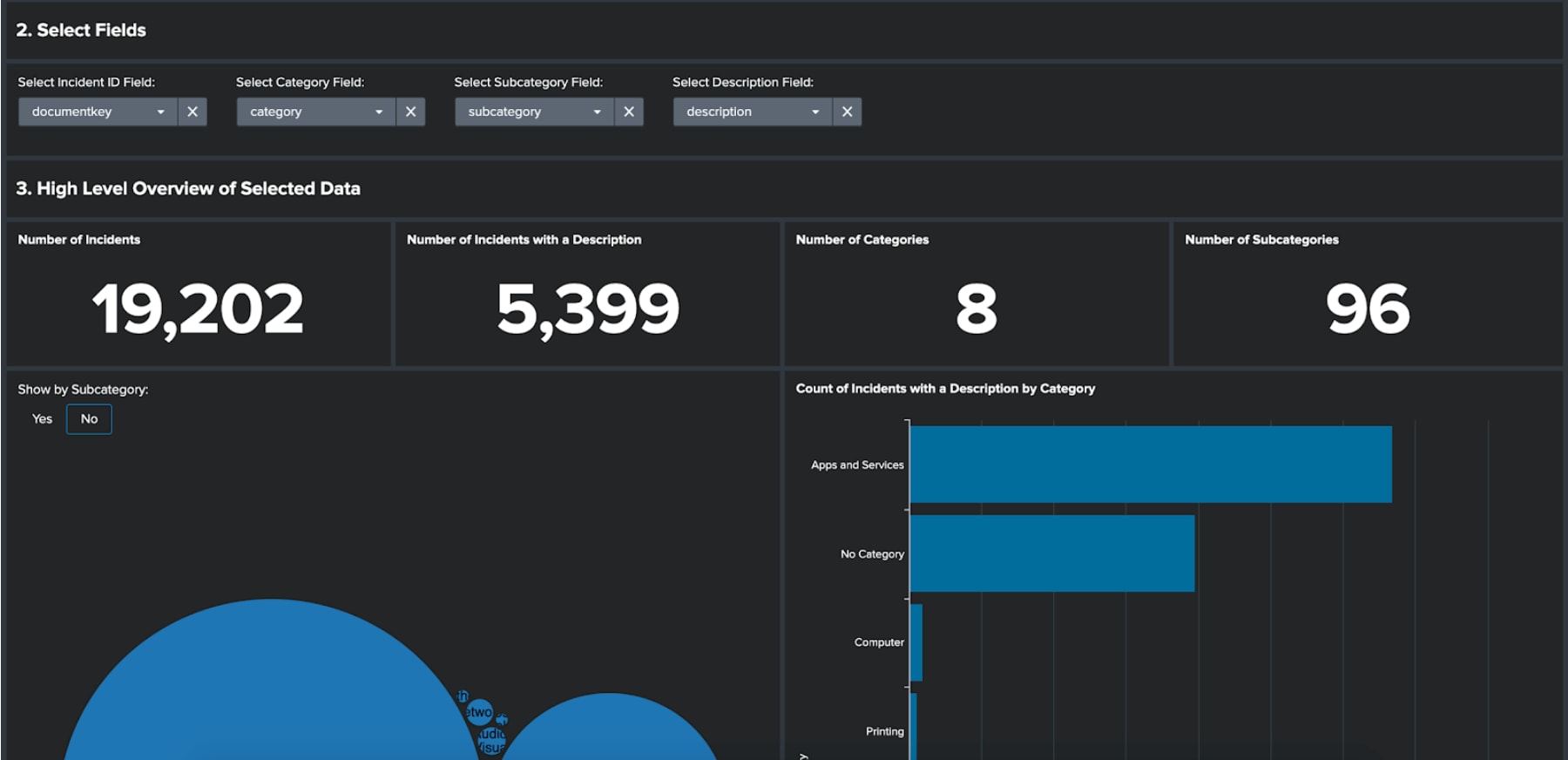

On this dashboard you first need to input a query that returns some ticket data. It is important that the data contains four fields: ID, category, subcategory and description. The ID should relate to the unique incident or ticket identifier. Once the query has run, you will be asked to select those four field types from a set of dropdowns.

Once the fields have been selected, a series of dashboard panels will provide high-level insight about your ticket data, such as the number of incidents and how many of those incidents include a description.

The key chart that gets presented is the ‘Count of Incidents with a Description by Category.’ This chart is important as it displays for each category how many incidents have a description. In the following sections of the app we will be analysing the descriptions for each category in turn to find insight about the tickets. This means that if there is a low number of descriptions for a given category, we will be unlikely to identify any insight.

Once you have studied this chart, select the cut-off point for mining category descriptions, input this into the dropdown below and then click the button to identify frequently occurring types of tickets to move to the next section.
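To make the cut-off idea concrete, here is a minimal Python sketch of the same calculation done outside the app. The ticket records, the field names and the "at least `cutoff`" rule are all assumptions for illustration; the app does this for you from the dashboard.

```python
from collections import Counter

# Hypothetical ticket records; the keys mirror the four fields the app asks for.
tickets = [
    {"id": "INC001", "category": "Hardware", "subcategory": "Laptop", "description": "Laptop will not boot"},
    {"id": "INC002", "category": "Hardware", "subcategory": "Monitor", "description": ""},
    {"id": "INC003", "category": "Software", "subcategory": "Email", "description": "Cannot send email"},
    {"id": "INC004", "category": "Software", "subcategory": "Email", "description": "Outlook crashes on start"},
    {"id": "INC005", "category": "HR", "subcategory": "Onboarding", "description": None},
]


def described_counts(records):
    """Count, per category, the tickets that have a non-empty description."""
    counts = Counter()
    for record in records:
        description = record.get("description") or ""
        if description.strip():
            counts[record["category"]] += 1
    return counts


def categories_above_cutoff(records, cutoff):
    """Return the categories with at least `cutoff` described tickets, sorted by name."""
    counts = described_counts(records)
    return sorted(category for category, n in counts.items() if n >= cutoff)


counts = described_counts(tickets)
selected = categories_above_cutoff(tickets, cutoff=2)
print(dict(counts))
print(selected)
```

Categories that fall below the cut-off are simply left out of the later analysis, which is why it pays to check the chart before choosing a value.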

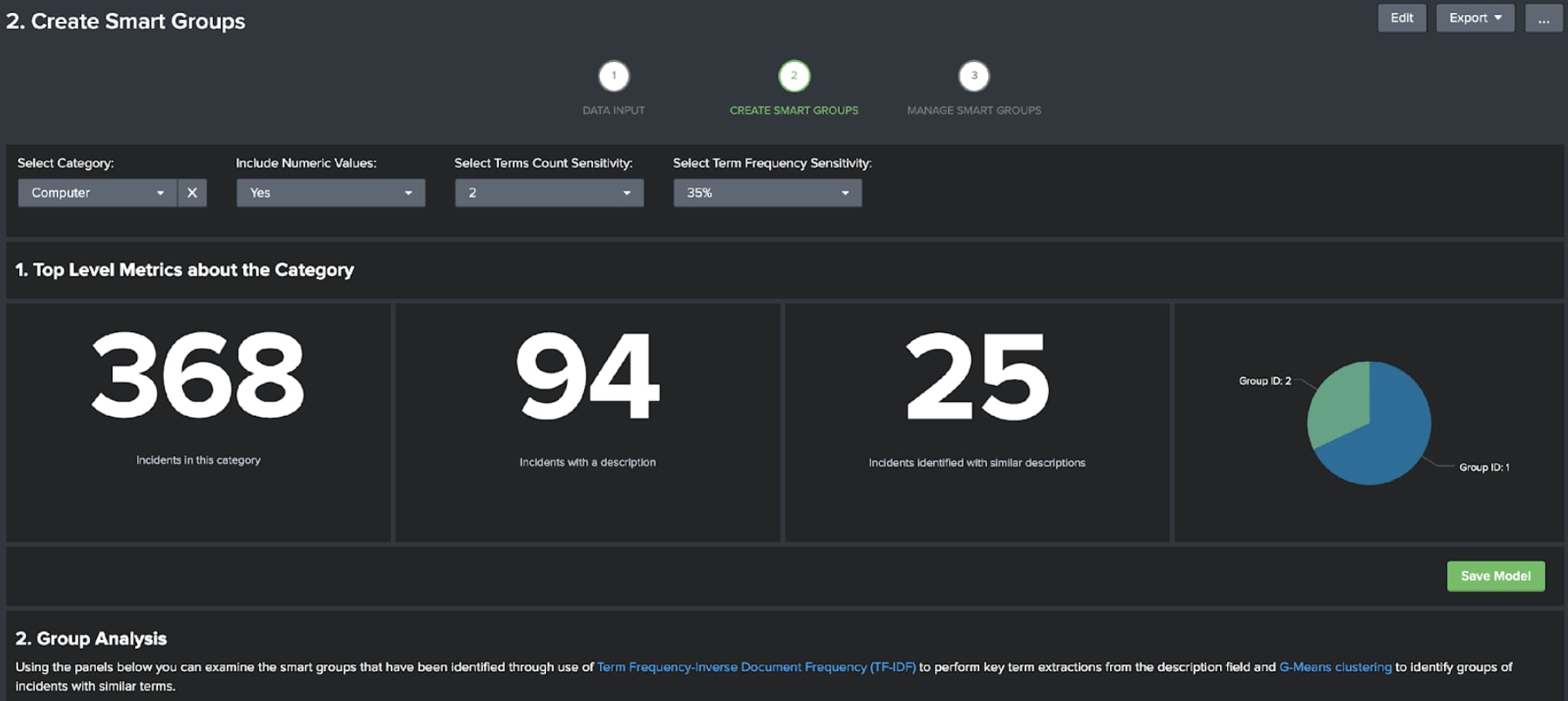

Create Smart Groups

This section of the app is designed to help train the models that are used to identify frequently occurring types of tickets. To train the models, all you need to do is select the category you want to analyse and confirm the model parameters, such as whether or not to include numeric values, how many terms need to be present in a cluster and the term sensitivity (a setting of 35% means a term will be modelled only if it occurs in 35% or less of the descriptions).
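As a rough illustration of what the term sensitivity setting does, the sketch below keeps only the terms that appear in at most 35% of a set of descriptions. The tokenizer, the function names and the sample descriptions are assumptions made for this example, not the app's internals, and it uses single words as terms to keep things short.

```python
import re
from collections import Counter


def tokenize(text, include_numeric=True):
    """Lower-case a description and split it into word tokens."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    if not include_numeric:
        # Drop tokens made up only of digits, such as user or asset numbers.
        tokens = [token for token in tokens if not token.isdigit()]
    return tokens


def terms_within_sensitivity(descriptions, sensitivity=0.35, include_numeric=True):
    """Keep terms that occur in at most `sensitivity` (a fraction) of the descriptions."""
    doc_freq = Counter()
    for text in descriptions:
        # A set counts each term once per description.
        doc_freq.update(set(tokenize(text, include_numeric)))
    limit = sensitivity * len(descriptions)
    return sorted(term for term, n in doc_freq.items() if n <= limit)


descriptions = [
    "Password reset for user 1042",
    "Password reset request",
    "Laptop screen flickering",
    "Password expired for new hire",
]

kept = terms_within_sensitivity(descriptions)
kept_no_numbers = terms_within_sensitivity(descriptions, include_numeric=False)
print(kept)
```

Here "password" appears in three of the four descriptions, so it is treated as too common to help tell groups apart, while rarer terms such as "laptop" are kept.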

Once you have made your selections, the models will be trained. Training consists of three main techniques:

- Term Frequency-Inverse Document Frequency (TFIDF), which is used to extract key terms in the descriptions by analysing all of the terms in all of the descriptions. In this app terms are sequences of 1-3 words.

- Principal Component Analysis (PCA), which is used to make a numerical representation of a large number of numerical fields – essentially reducing huge numbers of variables down to a smaller selection that contain the key features. As TFIDF can often generate large numbers of fields, PCA is used to reduce the risk of subsequent modelling being biased toward a small selection of the TFIDF generated fields.

- G-Means Clustering, which is used to identify groups of similar data points. This algorithm is similar to K-Means, but crucially you do not need to tell it how many clusters to find in advance: it calculates that for you, making it ideal for exploratory analysis.
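For readers who want a feel for the first of these techniques, here is a minimal pure-Python sketch of TF-IDF over terms of 1-3 words. It uses a plain textbook weighting (term frequency multiplied by the log of the inverse document frequency), which is an assumption on my part: the MLTK implementation the app relies on may normalise or smooth differently. The function names and sample descriptions are hypothetical, and the PCA and G-Means steps are not shown.

```python
import math
import re
from collections import Counter


def ngrams(text, max_n=3):
    """Return every sequence of 1 to `max_n` consecutive words in `text`."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return [
        " ".join(words[i:i + n])
        for n in range(1, max_n + 1)
        for i in range(len(words) - n + 1)
    ]


def tfidf(descriptions, max_n=3):
    """Compute a TF-IDF weight for each term in each description."""
    term_counts = [Counter(ngrams(text, max_n)) for text in descriptions]
    doc_freq = Counter()
    for counts in term_counts:
        # Each description contributes at most once to a term's document frequency.
        doc_freq.update(counts.keys())
    n_docs = len(descriptions)
    weights = []
    for counts in term_counts:
        total = sum(counts.values())
        weights.append({
            term: (count / total) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return weights


descriptions = [
    "new hire laptop request",
    "new hire account setup",
    "printer out of toner",
]

weights = tfidf(descriptions)
# Show the three highest-weighted terms of the first description.
print(sorted(weights[0].items(), key=lambda item: item[1], reverse=True)[:3])
```

Terms shared across descriptions, such as "new hire", get a lower weight than terms unique to one description, such as "laptop". Each description becomes a wide, sparse row of weights, which is the kind of input the PCA and clustering steps then work on.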

Once the models have been trained you can analyse the groups that have been identified, and provided you are satisfied that they are identifying similar types of tickets, you can save the models by clicking on the save model button.

Don’t worry if they aren’t perfect at this point – there are a few options for editing the groups in the next section of the app.

Manage Smart Groups

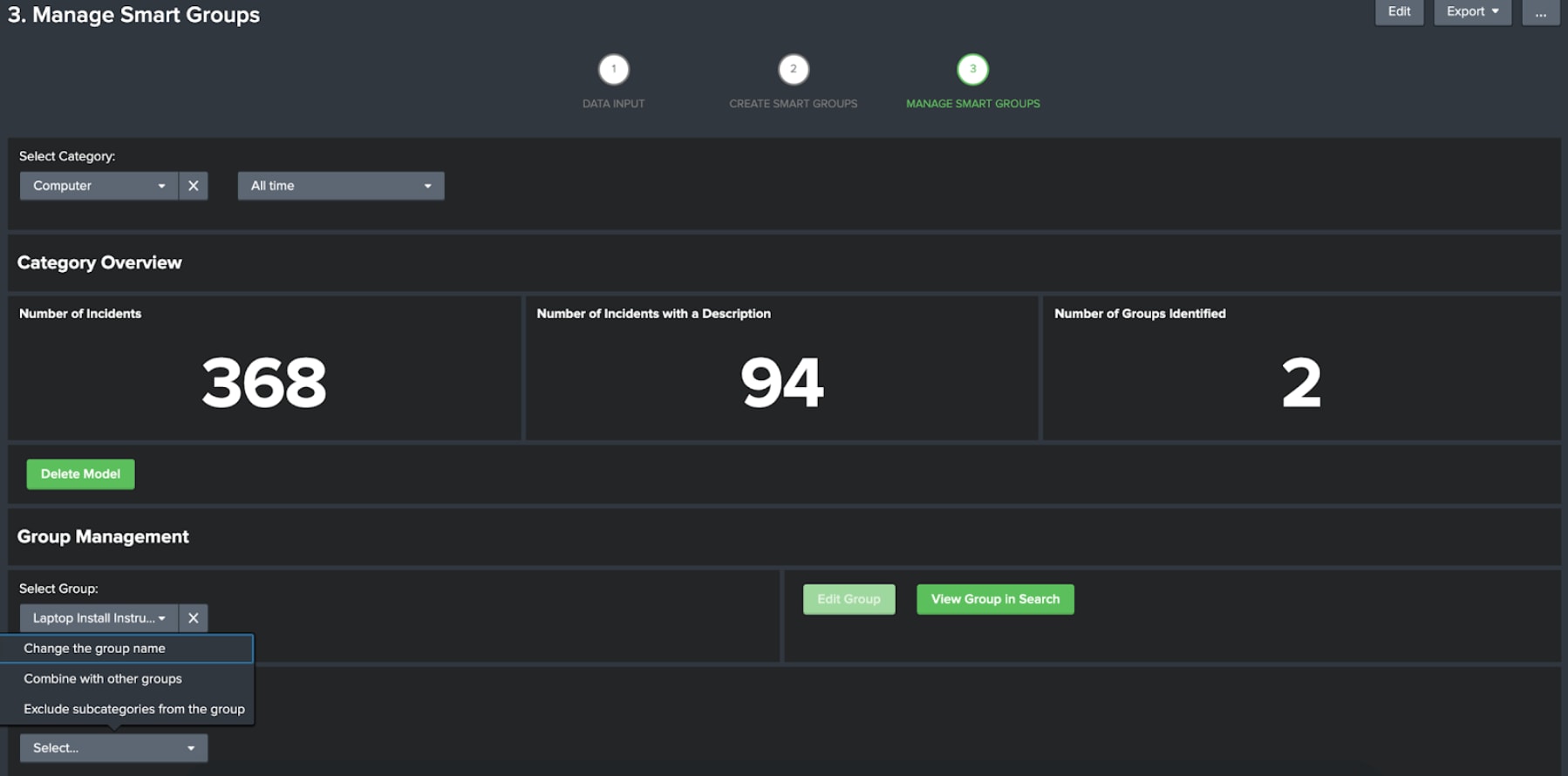

Once you have saved your models you can manage them from the manage smart groups dashboard.

On this dashboard you can select the category you want to manage. For each group you select you can edit the group and also open the group in search. There are three editing options:

- Change the group name. Because each group is given an ID rather than a name, this option lets you give the group a meaningful name, such as “new hire process” if all the tickets relate to hiring new joiners.

- Combine the group with others. Although the combination of TFIDF and G-Means can find unique groups, there are also cases where it finds multiple similar groups – using this option allows you to condense the groups if you see any similarity.

- Exclude subcategories from the group. Unsupervised clustering isn’t always perfect, and there may be cases where there are obviously incorrect entries in a group that you might be able to handle with subcategory filtering. This option allows you to omit subcategories from the group.
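One way to picture these three editing options is as operations on a simple group record. The sketch below is purely illustrative: the `SmartGroup` class, its fields and the helper functions are hypothetical and do not reflect how the app stores its models.

```python
from dataclasses import dataclass, field


@dataclass
class SmartGroup:
    """Hypothetical stand-in for one saved group of tickets."""
    name: str
    ticket_ids: set = field(default_factory=set)
    excluded_subcategories: set = field(default_factory=set)


def rename(group, new_name):
    """Give a group a meaningful name in place of its ID."""
    group.name = new_name
    return group


def combine(first, second, name):
    """Merge two similar groups into one new group."""
    return SmartGroup(
        name=name,
        ticket_ids=first.ticket_ids | second.ticket_ids,
        excluded_subcategories=first.excluded_subcategories | second.excluded_subcategories,
    )


def members(group, subcategory_by_ticket):
    """List the group's tickets, skipping any in an excluded subcategory."""
    return sorted(
        ticket_id
        for ticket_id in group.ticket_ids
        if subcategory_by_ticket.get(ticket_id) not in group.excluded_subcategories
    )


group_a = rename(SmartGroup("0", {"INC001", "INC002"}), "new hire process")
group_b = SmartGroup("3", {"INC003"})
merged = combine(group_a, group_b, "new hire process")
merged.excluded_subcategories.add("Printer")

subcategory_by_ticket = {"INC001": "Onboarding", "INC002": "Printer", "INC003": "Onboarding"}
print(members(merged, subcategory_by_ticket))
```

In this toy example the printer ticket that slipped into the hiring group is filtered out by its subcategory rather than by retraining the model.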

You can also choose to delete the models if you wish.

The open in search button will provide you with a search to identify the group you have selected. This search can be set to run on a schedule and actions can be triggered if results are found – just like any other Splunk search. This means that if you come across this type of ticket you can trigger remediation actions from Splunk, such as running a Phantom playbook to gain the necessary HR and line management approvals to hire a new joiner.

Where Next?

This is the first in a series of Smart Workflow apps we are aiming to produce on top of the Machine Learning Toolkit to help users gain insight into their data using machine learning without needing to be a data scientist. Stay tuned for more by attending .conf20, visiting our website and checking out future blogs for further announcements about these verticalized Smart Workflows!
