$SPLUNK_HOME is where the heart is

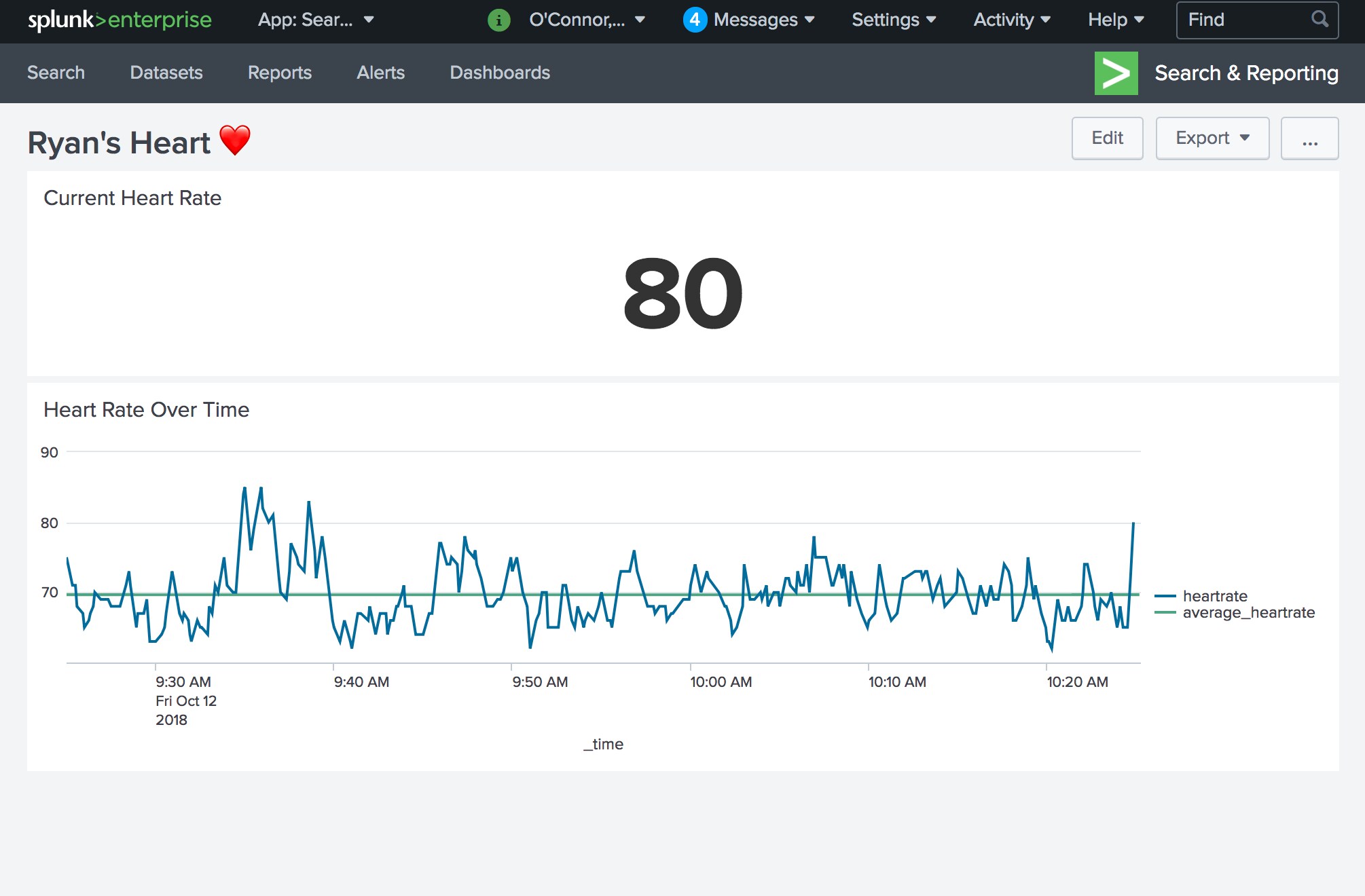

Wearables are one of the trendiest types of IoT devices on the market today. They’re lightweight and portable, and they give you insights about your own body. Getting up-to-the-second information about our bodies into an analytics platform is an exciting concept to me, especially when the way I capture that data is brand new to me. In this case, that meant using Node.js to repurpose an existing website and build a dashboard about my heart like the one below.

Some background: recently I’ve been helping a fellow Splunker, Tony, with a project. He’ll be running the Marine Corps Marathon with the group Ainsley’s Angels, and one of the metrics he wanted to collect during the race was heart rate data.

I offered him the Fitbit Add-on for Splunk, but unfortunately it wasn’t quite real-time enough for this project. It’s a terrific add-on, and it works with some really great wearable devices that provide a robust set of data points. The limitation we encountered was that the Fitbit device itself didn’t seem to synchronize data with a phone as often as we required. That makes sense, because frequent syncing could be a real battery hog on both the phone and the Fitbit over a long period of time. Fitbit users don’t typically need up-to-the-second information, since they want these devices to last for days rather than hours. We required more frequent data over a shorter period of time: some number of hours (I won’t give an exact figure here so that Tony doesn’t feel pressured into completing his race in a certain time).

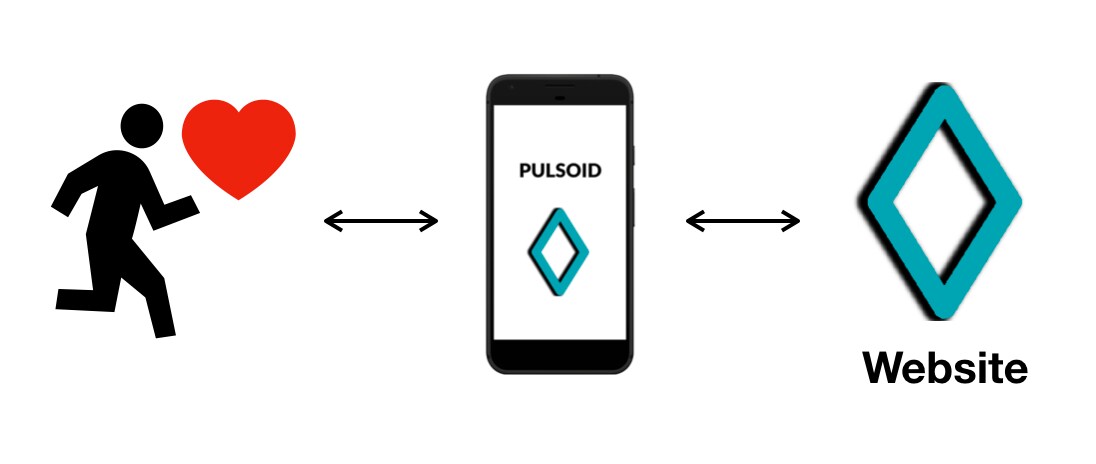

Tony and I went back to the drawing board. We wanted to find a way to get up-to-the-second heart rate data. Oddly enough, we were pointed to a website called Pulsoid, which aims to deliver up-to-the-second heart rate data for live streams on platforms such as Twitch or YouTube Gaming.

One of the first nice features of Pulsoid is that it has an app you can download from your phone’s app store. The phone app pairs with a heart rate monitor, such as the Polar H10. That app/wearable combination constantly sends data to Pulsoid.

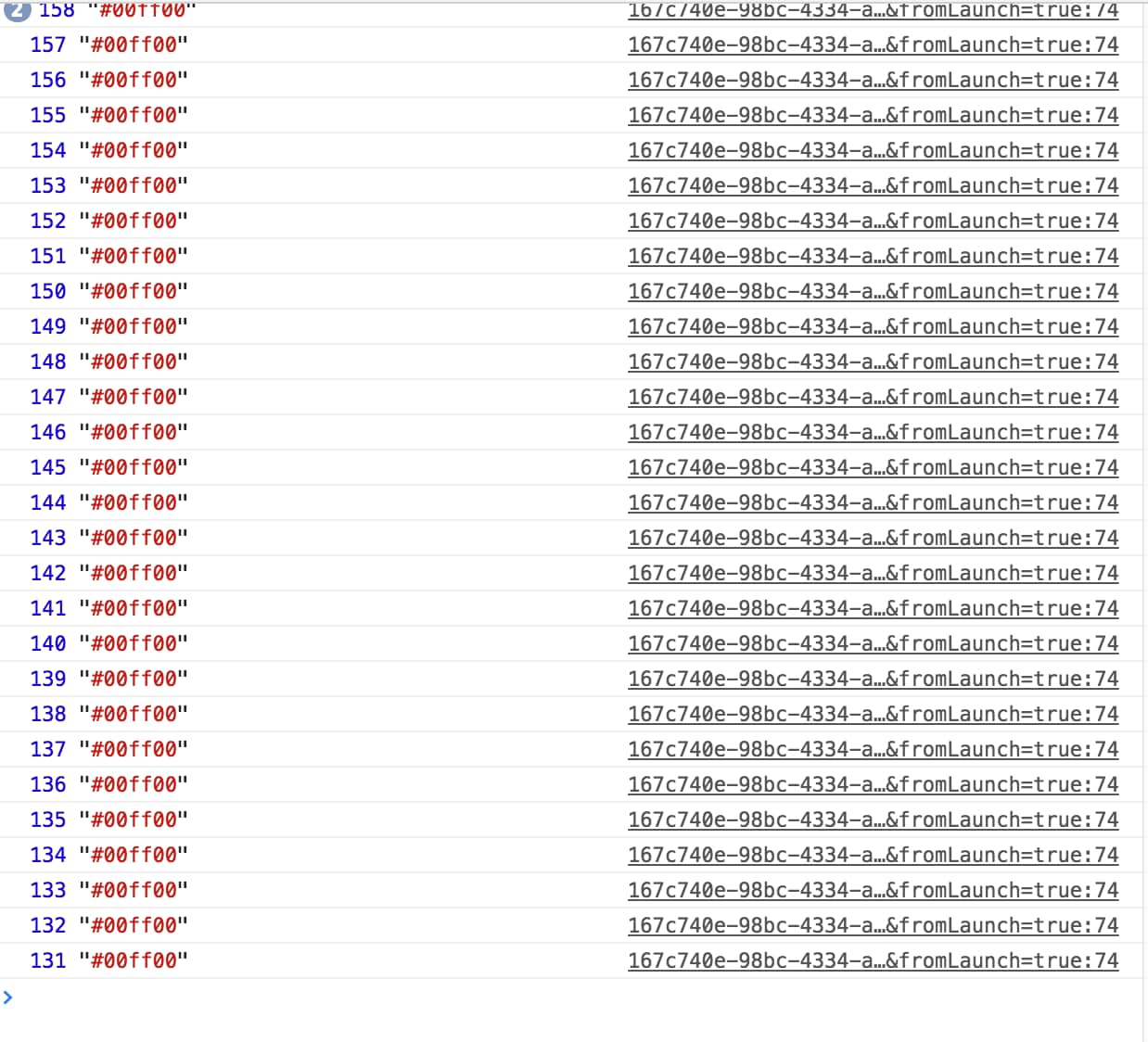

The end result is a web-based widget that you can overlay onto a livestream, and the data comes in extremely frequently. Opening the JavaScript console in Google Chrome shows that new data points arrive about once per second, or even more frequently.

Once Tony and I had a way to collect data from our bodies, we still needed a way to get that data into Splunk. Here is where Node.js and Google Chrome come in.

With Node.js, Google Chrome, the Splunk HTTP Event Collector, and a couple of Node packages, we were able to query a Pulsoid widget URL and collect up-to-the-second data points about our heart rates. The really nice part about collecting the data this way is that we can render JavaScript. We wanted to do this more simply (using either cURL or wget), but unfortunately those tools won’t render JavaScript, and we needed to scrape data off of the page after its JavaScript had run. So even though we were running server versions of operating systems that don’t have a fancy GUI (Amazon Linux 2 and Ubuntu 16.04), we could still install Google Chrome, use it in headless mode to render the page, and then scrape the data off of it.
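To make the headless-rendering idea a little more concrete, here is a minimal sketch of how such a scrape might look. It assumes the `puppeteer` package, which may not be what the repository actually uses, and both the `selector` argument and the `parseHeartRate` helper are hypothetical names for illustration. You would need to inspect the widget's DOM to find the real element that holds the number.

```javascript
// Hypothetical helper: turn scraped widget text such as "72" or "72 bpm"
// into a number, or null when no digits are present.
function parseHeartRate(text) {
  const match = /\d+/.exec(String(text));
  return match ? Number(match[0]) : null;
}

// Assumption: the puppeteer package is installed (npm install puppeteer).
// widgetUrl is your Pulsoid widget URL; selector is a placeholder for the
// element that displays the heart rate.
async function scrapeHeartRate(widgetUrl, selector) {
  // Loaded lazily so parseHeartRate can be used without puppeteer installed.
  const puppeteer = require('puppeteer');
  const browser = await puppeteer.launch({ headless: true });
  try {
    const page = await browser.newPage();
    await page.goto(widgetUrl, { waitUntil: 'load' });
    // Wait until the page's JavaScript has rendered the element.
    await page.waitForSelector(selector);
    const text = await page.$eval(selector, (el) => el.textContent);
    return parseHeartRate(text);
  } finally {
    await browser.close();
  }
}
```

Waiting for the selector is the important step here: it is what cURL or wget cannot do, because the element only exists after the page's scripts have run.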

I even went as far as turning this into a GitHub repository, complete with instructions for setting the entire thing up on either tested operating system. I’d rather not re-type all of the instructions here, for fear that they will go stale as I make updates and improvements. Suffice it to say that, at the time of writing, you need only a few things to get this data flowing into your Splunk instance:

- A working installation of Splunk on a supported OS, with the HTTP Event Collector turned on and listening
- Node.js installed
- This GitHub repository: https://github.com/ryanwoconnor/HeartRate_Pulsoid
- Google Chrome
- A Pulsoid account
- A heart rate monitor and a phone with the Pulsoid app installed

So what does this end up looking like? When all is said and done, we can run the command:

node scrape.js

This will result in our heart rate data being sent to Splunk via the HTTP Event Collector at any interval we choose. As a result, our Splunk installations are the location for all of our heart rate data and $SPLUNK_HOME is where the heart is.
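For readers curious about the Splunk side, the sketch below shows one way a reading could be posted to the HTTP Event Collector using only Node's built-in `https` module. It is not taken from the repository: the function names, the `pulsoid:heartrate` sourcetype, and the `heart_rate` field are assumptions for illustration, and the host and token are placeholders you would supply yourself.

```javascript
const https = require('https');

// Build the JSON body the HEC event endpoint expects: an "event" field
// plus optional metadata such as "time" and "sourcetype".
function buildHecPayload(heartRate, timestampMs) {
  return JSON.stringify({
    time: timestampMs / 1000,          // HEC takes epoch time in seconds
    sourcetype: 'pulsoid:heartrate',   // hypothetical sourcetype name
    event: { heart_rate: heartRate },  // hypothetical field name
  });
}

// POST one event to the collector and resolve with the HTTP status code.
// host and token are placeholders; 8088 is the default HEC port.
function sendToHec({ host, port = 8088, token }, body) {
  return new Promise((resolve, reject) => {
    const req = https.request(
      {
        host,
        port,
        path: '/services/collector/event',
        method: 'POST',
        headers: {
          Authorization: `Splunk ${token}`,
          'Content-Type': 'application/json',
          'Content-Length': Buffer.byteLength(body),
        },
      },
      (res) => {
        res.resume(); // discard the response body
        res.on('end', () => resolve(res.statusCode));
      }
    );
    req.on('error', reject);
    req.end(body);
  });
}
```

Calling `sendToHec` from a `setInterval` callback is one simple way to send readings at whatever interval you choose. Note that a collector using a self-signed certificate will fail certificate validation unless Node is configured to trust it.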

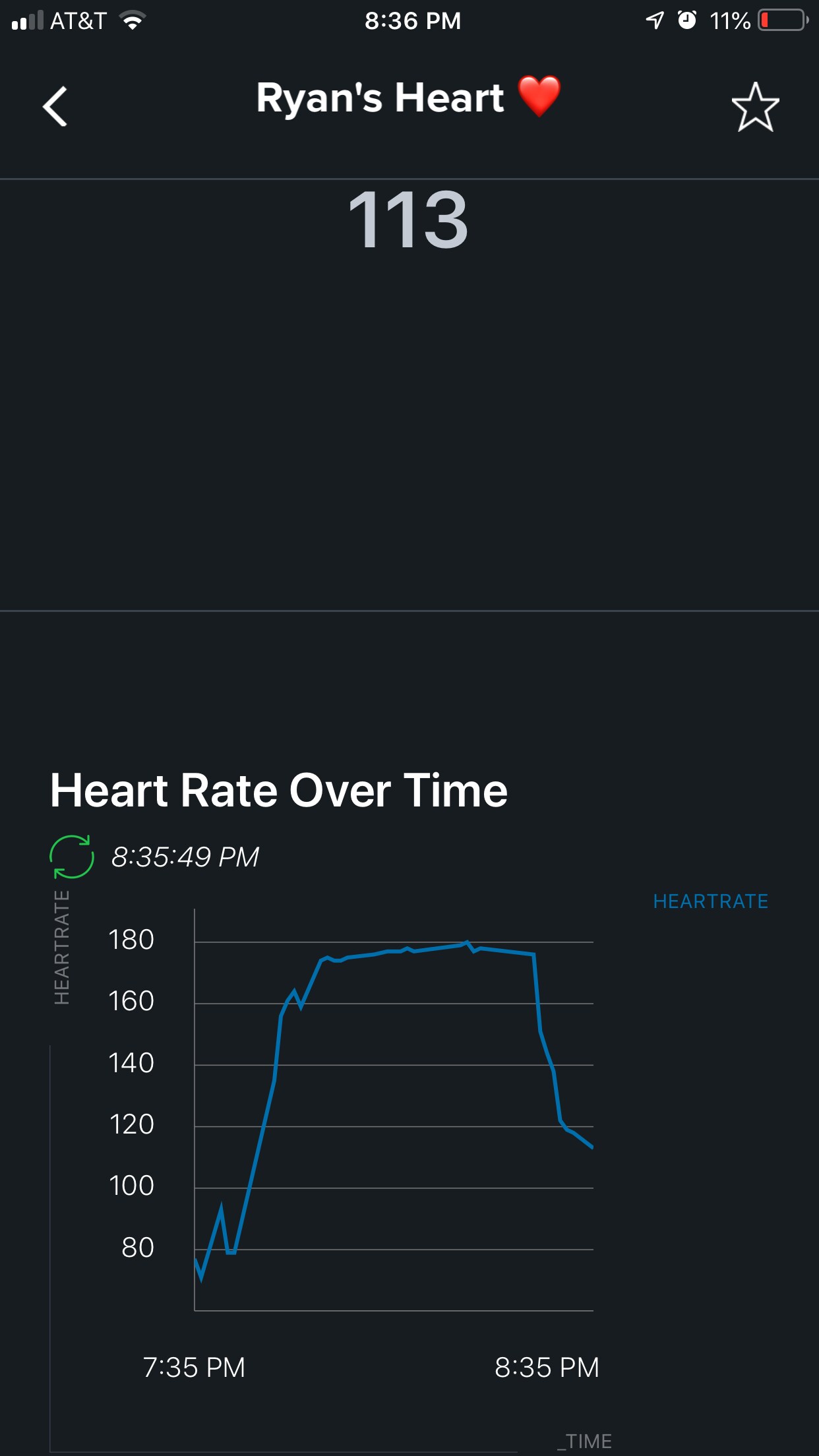

As an added bonus, by pairing a Splunk instance with the all-new Splunk Mobile App that was announced recently at .conf 2018, I even have a portable heart rate monitor that I can use to watch my workouts in action.

----------------------------------------------------

Thanks!

Ryan O'Connor
