
I’m really enjoying playing with all the new Developer hooks in Splunk 6.3 such as the HTTP Event Collector and the Modular Alerts framework. My mind is veritably fizzing with ideas for new and innovative ways to get data into Splunk and build compelling new Apps.

When 6.3 was released at our recent Splunk Conference, I also released a new Modular Alert for sending SMS alerts using Twilio, which is very useful in its own right but also a really nice, simple example for developers to reference when creating their own Modular Alerts.

But getting under the hood of the Modular Alerts framework also got me thinking about other ways to use Modular Alerts for use cases beyond just alerting. One of these is the scheduled export of indexed data.

This is not a new use case to me. Over the years I have worked with many developers who need to periodically dump data indexed in Splunk and export it to some third-party location, all in an automated manner. The solution has always required a custom program or script that is fired by your own scheduler (cron), integrates with Splunk via the REST API to perform the data export, then fires off the returned data to whatever destination (file, FTP, another datastore, etc.), or something along those lines anyway.

Now whilst this works, it is not ideal in my mind:

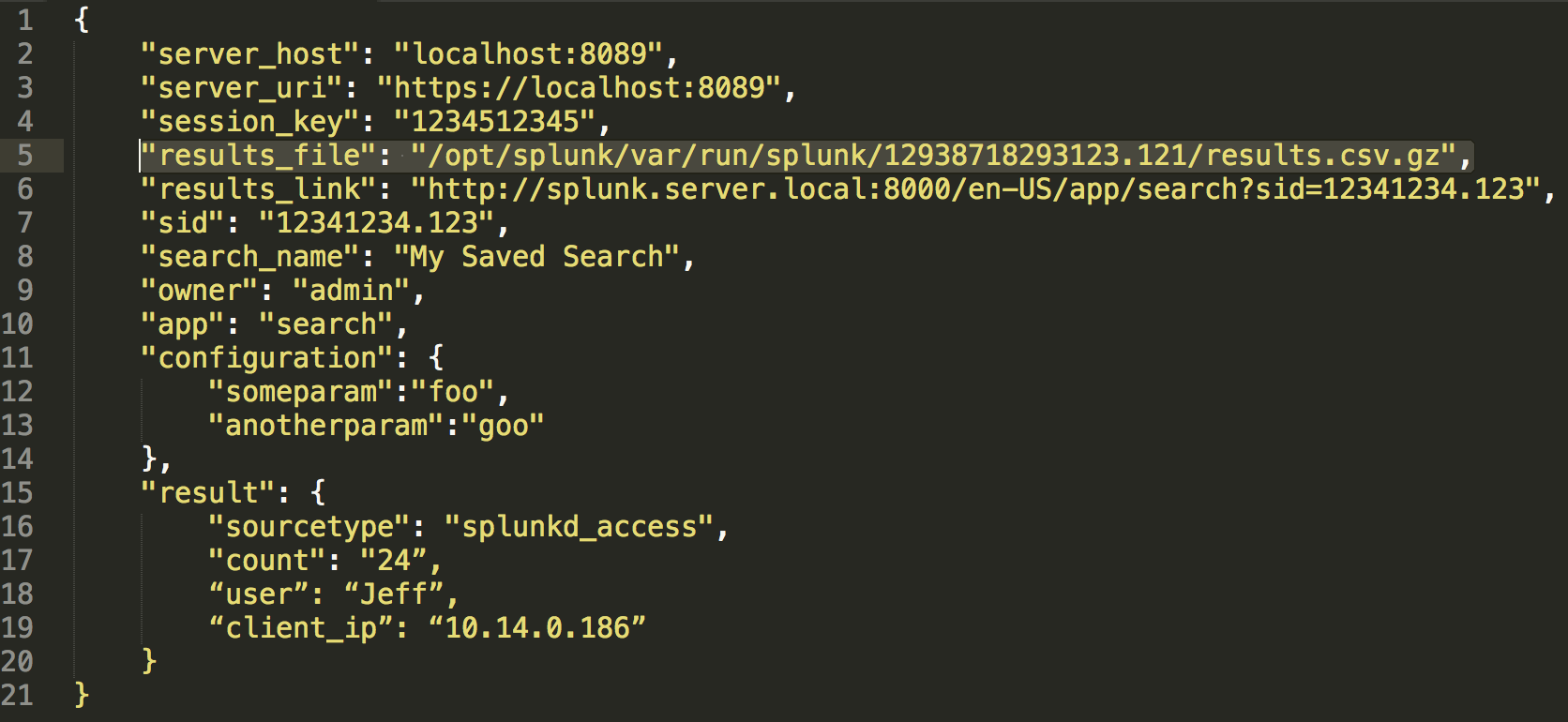

When you implement a Modular Alert script, a JSON or XML payload gets passed to your core script at invocation time. This payload contains several fields, such as variables that the user has set up (in the configuration property) and various other fields pertaining to the runtime execution of the fired Alert.

But the field that I’m interested in is the results_file field.
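As a rough illustration, reading that payload might look something like the sketch below. It assumes the JSON form of the payload arrives on standard input and that Splunk invokes the script with an `--execute` argument; the helper name `parse_payload` is my own and not part of any Splunk API.

```python
import json
import sys


def parse_payload(raw):
    """Return (results_file, configuration) from a JSON alert payload string.

    parse_payload is a hypothetical helper for illustration only.
    """
    payload = json.loads(raw)
    # Path to the file holding the search results for this firing of the alert
    results_file = payload.get("results_file")
    # User-supplied settings for the alert action (empty dict if absent)
    configuration = payload.get("configuration", {})
    return results_file, configuration


if __name__ == "__main__":
    # Assumption: the script is run with --execute and the payload is on stdin
    if len(sys.argv) > 1 and sys.argv[1] == "--execute":
        results_file, configuration = parse_payload(sys.stdin.read())
        sys.stderr.write("results_file=%s\n" % results_file)
```

Everything else in the payload is ignored here; a real script would probably also want to validate that `results_file` is present before continuing.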

This field gives you access to the search results from the scheduled firing of the Alert. So all you have to do in your script is access this file and then implement the logic to export its contents to whatever your data destination is. Couldn’t be simpler!

To get you started I have created a very simple example implementation and published it to Splunkbase. This example simply takes the results file and sends the data to some configurable location on the filesystem.
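To give a flavour of what such an export can look like, here is a minimal sketch rather than the code from the published example. It assumes the results file is a gzip-compressed CSV and that the destination directory comes from a user-configured setting; `export_results`, `dest_dir` and `dest_name` are hypothetical names chosen for illustration.

```python
import gzip
import os
import shutil


def export_results(results_file, dest_dir, dest_name="export.csv"):
    """Decompress the results file into dest_dir and return the new path.

    Assumption: results_file is gzip-compressed CSV. The function and
    parameter names are illustrative, not part of any Splunk API.
    """
    # Create the destination directory if it does not exist yet
    if not os.path.isdir(dest_dir):
        os.makedirs(dest_dir)
    dest_path = os.path.join(dest_dir, dest_name)
    # Stream the decompressed bytes so large result sets are not held in memory
    with gzip.open(results_file, "rb") as src:
        with open(dest_path, "wb") as dst:
            shutil.copyfileobj(src, dst)
    return dest_path
```

Swapping the body of `export_results` for an FTP upload or a write to another datastore would follow the same shape: open the results file, then push its contents wherever they need to go.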

Hopefully this can seed your creativity and get you thinking about other use cases for scheduled export of data such as:

Now go forth and create some compelling new certified Apps/Add-ons for Splunkbase!

----------------------------------------------------

Thanks!

Damien Dallimore
