
Quality, Quality, Quality

Three worldwide recalls by Toyota in late 2009 and early 2010, triggered by quality-related product defects, cost the company billions of dollars and reduced its sales.

“Toyota has, for the past few years, been expanding its business rapidly. Quite frankly, I fear the pace at which we have grown may have been too quick. I would like to point out here that Toyota’s priority has traditionally been the following: First; Safety, Second; Quality, and Third; Volume. These priorities became confused, and we were not able to stop, think, and make improvements as much as we were able to before, and our basic stance to listen to customers’ voices to make better products has weakened somewhat. We pursued growth over the speed at which we were able to develop our people and our organization …” [1]

Splunk is working with customers in the manufacturing industry to apply its large-scale data retrieval, analysis, and operational intelligence capabilities to detect quality issues early, before they affect the bottom line.

Legacy Systems are Here to Stay

By far, most Automated Test Equipment (ATE) manufacturing data comes from stations that use legacy Microsoft OLE and COM/DCOM technologies. Furthermore, the OPC Foundation has long maintained OPC, the industry standard that builds on OLE (Object Linking and Embedding) and COM/DCOM for PC communication in an ATE network. Splunk, on the other hand, is committed to a cross-platform, big data environment. The question is how to use Splunk's cross-platform paradigm to collect data from such legacy systems with off-the-shelf protocols.

Architecture

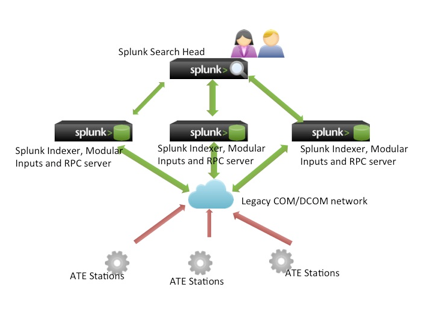

Splunk's solution adapts the Java-to-DCOM Bridge concept to acquire the manufacturing data generated in a COM/DCOM ATE network. The figure above shows the Splunk system connected to an ATE network.

Search Head

The Splunk Search Head provides a centralized UI for managing data acquisition across the network. You can create configurable input items and use the Web UI to add, edit, enable, and disable inputs. These are powerful facilities for managing the life cycle of the ATE stations’ data inputs and for measuring station control and setup parameters.

Modular Inputs

Modular Inputs on the indexer extend the Splunk framework by supporting custom inputs and distributed computation capabilities [3]. (See also the additional information about Modular Inputs Tools [4].)
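At its core, a modular input [3] is an executable that Splunk calls in three ways: with `--scheme` to describe its parameters as XML, with `--validate-arguments` to check a new configuration passed as XML on stdin, and with no arguments to run, receiving its configuration as XML on stdin and writing events to stdout. The following minimal sketch shows that contract in standard-library Python for an ATE data input. The scheme title, its `station_host` and `item_ids` parameters, and the `main(argv, stdin_text, write)` signature are hypothetical illustrations, not the input described in this post. The Splunk SDK for Python also ships a `splunklib.modularinput` package that wraps this protocol.

```python
import xml.etree.ElementTree as ET
from xml.sax.saxutils import escape

# Hypothetical input: the title and the "station_host" and "item_ids"
# parameters are illustrative, not a shipped Splunk input.
SCHEME = """<scheme>
    <title>ATE station (sketch)</title>
    <description>Illustrative OPC data acquisition input.</description>
    <use_external_validation>true</use_external_validation>
    <streaming_mode>simple</streaming_mode>
    <endpoint>
        <args>
            <arg name="station_host"><title>Station host</title></arg>
            <arg name="item_ids"><title>Comma-separated OPC item IDs</title></arg>
        </args>
    </endpoint>
</scheme>
"""

REQUIRED = ("station_host", "item_ids")


def parse_input_config(xml_text):
    """Map each configured stanza name to its {param: value} dict."""
    root = ET.fromstring(xml_text)
    return {
        stanza.get("name"): {p.get("name"): (p.text or "") for p in stanza.iter("param")}
        for stanza in root.iter("stanza")
    }


def validate(xml_text):
    """Raise ValueError if a required parameter is missing or empty."""
    root = ET.fromstring(xml_text)
    params = {p.get("name"): (p.text or "").strip() for p in root.iter("param")}
    missing = [name for name in REQUIRED if not params.get(name)]
    if missing:
        raise ValueError("missing parameter(s): " + ", ".join(missing))


def main(argv, stdin_text, write):
    """Dispatch on the arguments Splunk passes to a modular input script."""
    if len(argv) > 1 and argv[1] == "--scheme":
        write(SCHEME)
        return 0
    if len(argv) > 1 and argv[1] == "--validate-arguments":
        try:
            validate(stdin_text)
        except ValueError as err:
            # On failure Splunk expects an <error> document and a non-zero exit.
            write("<error><message>%s</message></error>\n" % escape(str(err)))
            return 1
        return 0
    for name, params in parse_input_config(stdin_text).items():
        # Simple streaming mode: each line written becomes event text.
        # A real input would poll the OPC station here in a loop.
        write("input=%s station_host=%s\n" % (name, params.get("station_host", "")))
    return 0
```

Saved as a script, the file would also import `sys` and end with `sys.exit(main(sys.argv, sys.stdin.read(), sys.stdout.write))`; the `write` callback only exists to keep the sketch easy to test.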

Database Inputs

Splunk DB Connect [5] is also worth mentioning as an alternative for importing manufacturing data into Splunk from traditional databases.
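DB Connect can import a table incrementally by tracking a “rising column”, a value such as an auto-increment ID that only grows, and fetching only the rows past the last checkpoint. The sketch below illustrates that idea with Python's built-in `sqlite3` module. It is not DB Connect's implementation, and the `test_results` table, its columns, and the function names are hypothetical.

```python
import sqlite3


def fetch_new_rows(conn, checkpoint):
    """Return rows whose id is greater than checkpoint, and the new checkpoint."""
    rows = conn.execute(
        "SELECT id, station, result FROM test_results WHERE id > ? ORDER BY id",
        (checkpoint,),
    ).fetchall()
    # The checkpoint only advances when new rows arrive, so nothing is re-read.
    new_checkpoint = rows[-1][0] if rows else checkpoint
    return rows, new_checkpoint


def demo():
    """Run two incremental fetches against an in-memory table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE test_results (id INTEGER PRIMARY KEY, station TEXT, result TEXT)"
    )
    conn.executemany(
        "INSERT INTO test_results (station, result) VALUES (?, ?)",
        [("ATE-01", "PASS"), ("ATE-02", "FAIL")],
    )
    first, checkpoint = fetch_new_rows(conn, 0)
    conn.execute("INSERT INTO test_results (station, result) VALUES ('ATE-01', 'PASS')")
    second, checkpoint = fetch_new_rows(conn, checkpoint)
    return first, second, checkpoint
```

Persisting the checkpoint between runs (for example in a file) is what lets a scheduled import resume where it stopped.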

Cross-Language Service Framework

A “Cross-Language Service Framework” lets Python functions remotely call corresponding Java functions through a Java-to-DCOM Bridge. For data acquisition, a high-performance Splunk RPC server built on a fast Java NIO framework, such as Netty, streams binary-formatted data to Splunk. A custom command defined in the commands.conf file improves querying of the data acquired through the bridge.
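The wire format the RPC server streams is not specified here. As a hedged illustration of one common choice, the sketch below frames each reading as a 4-byte big-endian length prefix followed by a payload; on the Java side, Netty's `LengthFieldBasedFrameDecoder` is one existing class that splits this kind of stream. The payload layout (item ID, millisecond timestamp, double value, 16-bit quality) and the function names are assumptions made for this example.

```python
import struct

# Hypothetical wire format, chosen for illustration only:
#   frame   = uint32 payload length (big-endian) + payload
#   payload = uint16 item-ID length + UTF-8 item ID
#             + int64 timestamp (ms) + float64 value + uint16 quality
_LENGTH = struct.Struct(">I")
_NAME_LEN = struct.Struct(">H")
_BODY = struct.Struct(">qdH")


def encode_reading(item_id, ts_ms, value, quality):
    """Serialize one reading as a length-prefixed frame."""
    name = item_id.encode("utf-8")
    payload = _NAME_LEN.pack(len(name)) + name + _BODY.pack(ts_ms, value, quality)
    return _LENGTH.pack(len(payload)) + payload


def decode_frames(buffer):
    """Decode every complete frame in buffer; return (readings, leftover bytes)."""
    readings = []
    offset = 0
    while len(buffer) - offset >= _LENGTH.size:
        (length,) = _LENGTH.unpack_from(buffer, offset)
        start = offset + _LENGTH.size
        if len(buffer) - start < length:
            break  # partial frame: keep the bytes until more data arrives
        (name_len,) = _NAME_LEN.unpack_from(buffer, start)
        name_end = start + _NAME_LEN.size + name_len
        item_id = buffer[start + _NAME_LEN.size:name_end].decode("utf-8")
        ts_ms, value, quality = _BODY.unpack_from(buffer, name_end)
        readings.append((item_id, ts_ms, value, quality))
        offset = start + length
    return readings, buffer[offset:]
```

Because `decode_frames` returns the unconsumed bytes, a reader can prepend them to the next network chunk and call it again, which handles frames split across reads.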

Java-to-DCOM Bridge Java Service

The Java-to-DCOM Bridge service is based on the open-source Utgard library [6], a vendor-independent, 100% pure Java OPC client API for connecting to OPC servers. The bridge service provides a pluggable framework for developing customized data acquisition plug-ins. Utgard is licensed under the LGPL.
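The bridge's plug-in API is not described here, and the real service is written in Java. Purely as a language-neutral illustration, the following Python sketch shows the usual shape of a pluggable acquisition framework: a small abstract contract, a registry keyed by protocol name, and a factory. `AcquisitionPlugin`, `register`, `create`, and the `simulated` plug-in are all hypothetical names.

```python
from abc import ABC, abstractmethod


# Hypothetical plug-in contract; the real bridge service defines its own Java API.
class AcquisitionPlugin(ABC):
    @abstractmethod
    def read(self, item_ids):
        """Return a dict mapping each item ID to its current value."""


_REGISTRY = {}


def register(protocol):
    """Class decorator that makes a plug-in available under a protocol name."""
    def decorator(cls):
        if not issubclass(cls, AcquisitionPlugin):
            raise TypeError("%s must subclass AcquisitionPlugin" % cls.__name__)
        _REGISTRY[protocol] = cls
        return cls
    return decorator


def create(protocol, **options):
    """Instantiate the plug-in registered for protocol with the given options."""
    try:
        plugin_cls = _REGISTRY[protocol]
    except KeyError:
        raise ValueError("no plug-in registered for %r" % protocol) from None
    return plugin_cls(**options)


@register("simulated")
class SimulatedPlugin(AcquisitionPlugin):
    """Stand-in plug-in that returns predictable values, useful for testing."""

    def __init__(self, base=0.0):
        self.base = base

    def read(self, item_ids):
        return {item: self.base + i for i, item in enumerate(item_ids)}
```

A real OPC Classic DA or OPC UA plug-in would implement the same `read` contract, so the rest of the pipeline would not change when a new protocol is added.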

Splunk uses this framework to support OPC Classic DA (over DCOM) and OPC UA DA when connecting to OPC-compliant servers and stations.
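In OPC Classic DA, each value arrives with a 16-bit quality word whose low byte is laid out as `QQSSSSLL`: two quality bits (bad, uncertain, or good), four substatus bits, and two limit bits. Decoding that word before indexing makes quality searchable in Splunk. The sketch below assumes readings have already been pulled through the bridge; the key=value event layout and the function names are hypothetical, and OPC UA uses a different 32-bit StatusCode that this helper does not handle.

```python
from datetime import datetime, timezone

# Quality bits (mask 0xC0) and limit bits (mask 0x03) per the OPC DA quality layout.
_QUALITY = {0x00: "bad", 0x40: "uncertain", 0xC0: "good"}
_LIMIT = {0: "none", 1: "low", 2: "high", 3: "constant"}


def decode_quality(word):
    """Split an OPC DA quality word into quality, substatus, and limit fields."""
    return {
        "quality": _QUALITY.get(word & 0xC0, "reserved"),  # 0x80 is not used by OPC DA
        "substatus": (word >> 2) & 0x0F,
        "limit": _LIMIT[word & 0x03],
    }


def to_event(item_id, value, quality_word, timestamp):
    """Format one reading as a single key=value line (timestamp must be tz-aware)."""
    q = decode_quality(quality_word)
    return '%s item_id="%s" value=%s quality=%s substatus=%d limit=%s' % (
        timestamp.astimezone(timezone.utc).isoformat(),
        item_id,
        value,
        q["quality"],
        q["substatus"],
        q["limit"],
    )


example = to_event("Line1.Temp", 21.5, 0xC0, datetime(2013, 4, 16, 12, 0, tzinfo=timezone.utc))
# example == '2013-04-16T12:00:00+00:00 item_id="Line1.Temp" value=21.5 quality=good substatus=0 limit=none'
```

Key=value pairs are used here because Splunk extracts them as search-time fields by default, so `quality=uncertain` could be searched directly.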

Conclusion

Importing data from COM/DCOM-based OPC servers into Splunk for analysis has proved practical and efficient. The Splunk engine is optimized for quickly indexing, analyzing, and reporting on time-series data. Interesting further developments include Cp/Cpk process capability analysis [7][8] within the scope of Six Sigma practices, combined with Splunk's data analysis and visualization capabilities, to help manufacturers tackle day-to-day operational and overall quality issues.
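For reference, the process capability indices mentioned above [8] compare the specification width with the process spread: Cp = (USL − LSL) / 6σ and Cpk = min(USL − μ, μ − LSL) / 3σ, where USL and LSL are the upper and lower specification limits, μ is the process mean, and σ is its standard deviation. The Python sketch below computes both from a list of measurements. It uses the overall sample standard deviation for simplicity; many practitioners instead estimate σ from within-subgroup variation and reserve the names Pp/Ppk for the overall-σ version, so treat this as an illustration rather than a prescribed method. The function name `capability` is hypothetical.

```python
import statistics


def capability(samples, lsl, usl):
    """Return (Cp, Cpk) for measurements against spec limits lsl < usl."""
    if usl <= lsl:
        raise ValueError("usl must be greater than lsl")
    mu = statistics.mean(samples)
    sigma = statistics.stdev(samples)  # sample standard deviation (n - 1 denominator)
    if sigma == 0:
        raise ValueError("samples have zero spread; indices are undefined")
    cp = (usl - lsl) / (6 * sigma)
    # Cpk penalizes an off-center process by using the nearer spec limit.
    cpk = min(usl - mu, mu - lsl) / (3 * sigma)
    return cp, cpk


# Centered within the limits, Cp equals Cpk; narrowing the lower margin lowers Cpk only.
centered = capability([9.9, 10.0, 10.1], lsl=9.4, usl=10.6)
off_center = capability([9.9, 10.0, 10.1], lsl=9.7, usl=10.6)
```

Here `centered` is approximately (2.0, 2.0) and `off_center` approximately (1.5, 1.0): the process is nearer the lower limit, so Cpk drops while Cp reflects only the width of the specification.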

References

[1] http://en.wikipedia.org/wiki/2009-11_Toyota_vehicle_recalls

[3] http://docs.splunk.com/Documentation/Splunk/5.0.4/AdvancedDev/ModInputs

[4] http://blogs.splunk.com/2013/04/16/modular-inputs-tools/

[5] http://apps.splunk.com/app/958

[6] http://openscada.org/projects/utgard/

[7] http://en.wikipedia.org/wiki/Six_sigma

[8] http://en.wikipedia.org/wiki/Process_capability_index

----------------------------------------------------

Thanks!

Bill Tsay
